Intro

For years, serious SEOs relied on a simple Google parameter to understand what was happening beyond page one: &num=100.

It wasn’t a hack. It didn’t manipulate rankings. It simply exposed more of the search results that already existed.

For anyone doing real SEO—content creation, link building, technical fixes, local campaigns, or international SEO—seeing the full Top 100 was the difference between actively steering rankings and guessing after the fact.

Then search results evolved. Rank tracking tools adapted. And quietly, full Top-100 visibility disappeared from most platforms.

Not because Google stopped ranking beyond page one—but because how that data could be accessed changed.

Today, the principle behind &num=100 is back where it belongs: at the centre of serious rank tracking.

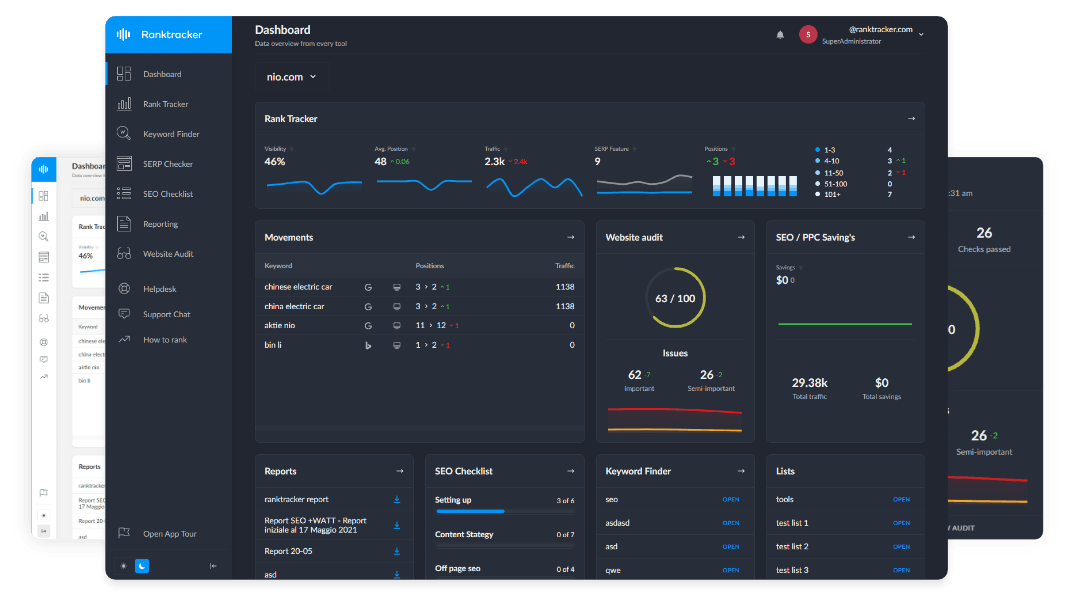

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

This article explains what changed, why it changed, and why full Top-100 tracking now requires a different approach.

What &num=100 Actually Means (and Why SEOs Still Care)

In simple terms:

- num=100 tells Google Search to return up to 100 results instead of the default 10

- &num=100 is the same parameter, used when a URL already contains other parameters
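As a minimal sketch of the syntax, the Python below builds a search URL with the standard library. The function name build_search_url is hypothetical, and because the parameter no longer reliably returns 100 results, this only illustrates how the URL is formed. It is not a working way to fetch the Top 100.

```python
from typing import Optional
from urllib.parse import urlencode


def build_search_url(query: str, num: Optional[int] = None) -> str:
    """Build a Google search URL, optionally appending the num parameter."""
    params = {"q": query}
    if num is not None:
        # Because q is already in the query string, this shows up as "&num=100".
        params["num"] = str(num)
    return "https://www.google.com/search?" + urlencode(params)


print(build_search_url("rank tracking"))
# https://www.google.com/search?q=rank+tracking
print(build_search_url("rank tracking", num=100))
# https://www.google.com/search?q=rank+tracking&num=100
```

The two forms differ only in the separator: the leading & appears whenever another parameter comes first.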

For a long time, this wasn’t just something individual SEOs used manually.

Most rank tracking tools relied on this same mechanism — or variations of it — to collect deeper SERP data efficiently. A single request could expose a large portion of the ranked results without needing to paginate through multiple pages.

SEOs care because Google doesn’t stop ranking at page one.

Your competitors don’t stop at page one. Your progress rarely starts on page one.

When you can see positions 21–100, you can identify:

- pages gaining momentum before they break through

- competitors climbing before they take traffic

- whether links and content are actually working

- where rankings stall, and why

If you only see Top 10 or Top 20 data, you’re not observing SEO progress.

You’re seeing the outcome after the work has already paid off.

Why Google Search Results Aren’t “100 Blue Links” Anymore

Modern SERPs are dynamic by design.

Depending on query intent, location, and device, Google now blends multiple result types into a single page, including:

- AI Overviews (AI-generated summaries shown above organic results)

- featured snippets

- People Also Ask

- local packs

- images and videos

- shopping results

- knowledge panels

- discussion-style modules

AI Overviews in particular have changed how visibility works.

They sit above the traditional organic listings and summarise information pulled from pages Google already ranks — often sourcing content from URLs that are not in the Top 10.

This matters because “position” is no longer a simple, static list.

SERP features now interrupt organic listings, shift ordering, and influence what users see before they ever scroll. A page ranking 25th can still contribute to perceived visibility, while a page ranking 7th may be pushed far down the screen.

The Top 100 still exists — but capturing it accurately now requires more than a single “return 100 results” request. It requires understanding how rankings, features, and AI layers interact across the full SERP.

Why Google Stepped Away From Easy Top-100 Access

Google didn’t remove the Top 100.

What it removed was the easy way of pulling it.

For a long time, &num=100 made it possible to retrieve a complete, ordered SERP in a single request. That was convenient. One call, and you could see how Google ranked an entire topic from top to bottom.

SEOs used it. Rank trackers built on it. And over time, automated systems increasingly relied on it too.

As search results became more valuable as structured data, that convenience started to matter in a different way.

A full Top-100 SERP isn’t just a list of links. At scale, it reveals:

- how Google evaluates relevance

- how authority is distributed across a topic

- how intent is interpreted

- which sources are trusted more than others

- how information is clustered and framed

That structure is extremely valuable. Not just for SEO analysis and market monitoring, but also for training and evaluating large language models.

This shift isn’t theoretical.

Google has publicly taken legal action against large-scale SERP data providers, most notably SerpApi, over the automated collection and redistribution of search results.

In response to Google’s claims, SerpApi published a public statement titled:

“Google v. SerpAPI: We’re filing a Motion to Dismiss. Here’s why we’re in the right.”

Google's complaint invokes the Digital Millennium Copyright Act to try to stop SerpApi from accessing its website.

Those cases make one thing clear: Google is no longer comfortable with search results being treated as a low-cost, high-volume data feed.

From Google’s perspective, this isn’t about hiding rankings.

It’s about controlling the economics of extraction.

Single-request Top-100 access made it trivial to collect ordered relevance data at massive scale, without pagination, scrolling, or anything resembling normal user behaviour. That lowered the barrier not just for SEO tools, but for any system looking to learn from Google’s ranking decisions.

Rather than blocking access outright, Google changed how search results are delivered:

- fixed result lists gave way to pagination and continuous scrolling

- results became more dynamically loaded

- SERP features were interleaved more aggressively

- rendering became increasingly dependent on context like device, location, and intent

There was no announcement. Nothing broke overnight.

But the outcome was deliberate:

The Top 100 still exists — it just isn’t available cheaply in one request anymore.

You can still scroll. You can still click through pages. SEO tools can still crawl the SERP page by page.

What became expensive was bulk, frictionless harvesting — especially harvesting useful for large-scale modelling and LLM training.

That distinction matters.

Google didn’t remove depth. It added friction.

And once that shortcut disappeared, many rank trackers quietly changed behaviour — not because the rankings stopped existing, but because collecting them properly now requires far more work, infrastructure, and cost.

77% of Sites Lost Keyword Visibility After Google Removed num=100: Data

The removal of the num=100 parameter triggered sharp drops in Google Search Console impressions, rankings, and keyword visibility across a wide range of websites.

Google’s change to how search results are delivered has significantly reshaped SEO datasets. According to a new analysis of 319 websites conducted by Tyler Gargula, Director of Technical SEO at LOCOMOTIVE Agency, the majority of sites experienced measurable losses in reported visibility once num=100 stopped functioning reliably.

By the numbers

- Impressions: 87.7% of sites saw a decline in Google Search Console impressions

- Query count: 77.6% of sites lost unique ranking terms

- Keyword length: Short-tail and mid-tail keywords were most affected

- Rank positions:

  - Fewer queries now appear on page 3 and beyond

  - More queries surface in the Top 3 and on page 1

The shift suggests that rankings reported today more closely reflect actual user-visible positions, without artificial inflation caused by one-shot Top-100 retrieval.

In other words, the disappearance of num=100 didn’t remove rankings — it removed a reporting shortcut that had long influenced how visibility was measured.

Why This Quietly Broke Most Rank Trackers

Most rank trackers were not built to crawl:

- ten SERP pages

- per keyword

- per location

- per device

- every day

They were built to be fast, lightweight, and inexpensive.
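To make the scale concrete, here is a rough sketch of the arithmetic. The function name and the campaign size (1,000 keywords, 3 locations, 2 devices) are hypothetical figures chosen for illustration, not data from any tool.

```python
def daily_page_requests(keywords: int, locations: int, devices: int,
                        pages_per_serp: int = 10) -> int:
    """Count SERP page fetches needed per day for full-depth tracking."""
    return keywords * locations * devices * pages_per_serp


# Hypothetical campaign: 1,000 keywords, 3 locations, 2 devices.
one_shot = daily_page_requests(1000, 3, 2, pages_per_serp=1)
page_by_page = daily_page_requests(1000, 3, 2, pages_per_serp=10)

print(one_shot)      # 6000
print(page_by_page)  # 60000
```

Under these assumptions, moving from one request per SERP to ten pages per SERP multiplies the daily crawl volume by ten.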

When one-shot Top-100 retrieval stopped being reliable, many tools adapted by:

- limiting tracking depth

- refreshing deeper rankings weekly

- compressing daily data into weekly snapshots

- charging extra credits for depth

- stopping once the tracked domain was found

Not because the data disappeared—but because retrieving it properly became costly.

That’s why “Top 100 tracking” still appears in marketing copy, but behaves very differently in practice.

Why Page-by-Page Crawling Is the Only Reliable Method

Accurate Top-100 tracking means capturing the SERP as it actually exists:

- page 1 → positions 1–10

- page 2 → positions 11–20

- page 3 → positions 21–30

…

- page 10 → positions 91–100

That’s ten SERP pages per keyword, every day.
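The page-to-position mapping above is simple arithmetic. The sketch below assumes ten organic results per page, as in the list; the helper names are hypothetical.

```python
def positions_for_page(page: int, per_page: int = 10) -> range:
    """Return the range of positions covered by a 1-indexed SERP page."""
    if page < 1:
        raise ValueError("page numbers start at 1")
    first = (page - 1) * per_page + 1
    return range(first, first + per_page)


def absolute_position(page: int, index_on_page: int, per_page: int = 10) -> int:
    """Convert (page, 1-indexed slot on that page) into an overall position."""
    return (page - 1) * per_page + index_on_page


page_three = positions_for_page(3)
print(page_three[0], page_three[-1])  # 21 30
print(absolute_position(10, 10))      # 100
```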

It requires more infrastructure. More processing. More cost.

But it avoids the common failure modes:

- missing results from dynamic loading

- incorrect positioning around SERP features

- skipped sections of the SERP

- estimated positions instead of verified ones

If a tracker isn’t crawling page by page, it’s almost always sampling, smoothing, estimating, or delaying.

How Ranktracker Tracks the Full Top 100 Daily (Including Every SERP Feature)

Ranktracker doesn’t rely on a single request that claims to return “the Top 100.”

It builds Top-100 tracking the way Google actually delivers search results today: page by page, feature by feature.

For every tracked keyword, Ranktracker:

- crawls SERP pages 1 through 10 sequentially

- captures every SERP feature that appears on the page

- records AI Overviews when present, including their placement and frequency

- detects featured snippets, People Also Ask, local packs, images, videos, shopping blocks, knowledge panels, and discussion-style modules

- separates organic listings from SERP features instead of blending them together

- normalises results by device, location, and language

- assigns positions based on real page order, not assumptions

- stores full daily history with no compression or weekly rollups

- repeats the process every day, by default
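To illustrate two of the steps above (keeping organic listings separate from SERP features, and numbering positions in real page order), here is a minimal sketch. It is not Ranktracker's implementation: the SerpItem type, the assign_positions function, and the sample data are all hypothetical, and the pages are assumed to be already parsed.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class SerpItem:
    kind: str      # "organic", or a feature name such as "ai_overview"
    url: str = ""  # only set for organic listings in this sketch


def assign_positions(pages: List[List[SerpItem]]) -> Tuple[list, list]:
    """Number organic results across pages and record features separately."""
    organic, features = [], []
    position = 0
    for page_number, items in enumerate(pages, start=1):
        for slot, item in enumerate(items, start=1):
            if item.kind == "organic":
                position += 1  # features do not consume an organic position
                organic.append((position, item.url))
            else:
                features.append((page_number, slot, item.kind))
    return organic, features


pages = [
    [SerpItem("ai_overview"), SerpItem("organic", "a.example"),
     SerpItem("people_also_ask"), SerpItem("organic", "b.example")],
    [SerpItem("organic", "c.example")],
]
organic, features = assign_positions(pages)
print(organic)   # [(1, 'a.example'), (2, 'b.example'), (3, 'c.example')]
print(features)  # [(1, 1, 'ai_overview'), (1, 3, 'people_also_ask')]
```

Recording each feature's page and slot alongside the organic list is what lets you see when a feature, rather than a ranking change, pushed a result down the screen.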

This means Ranktracker doesn’t just tell you where a page ranks.

It shows:

- whether an AI Overview exists for the query

- which SERP features are pushing organic results down

- how visibility changes when features appear or disappear

- which competitors are gaining exposure without ranking top 10

- how rankings and SERP composition evolve together

That’s how you get:

- real movement between positions 21–100

- full competitor visibility beyond page two

- clarity when SERP features, not rankings, cause traffic shifts

- reporting that doesn’t “jump” because deeper data refreshed late

What you see is what Google actually shows — rankings, features, and AI layers included, every day.

What Other Rank Trackers Really Do (Depth vs Reality)

“Top 100 tracking” is one of the most misused phrases in SEO software.

Page-One-Only Trackers (Top 10)

Top 20 Trackers

- Moz Pro

- Raven Tools

- RankWatch

- RankTracking.co

- Marketing Miner

- Localo

- Morningscore

- SEO Tester Online (weekly)

- TinyRanker

Top 30 Trackers

- Topvisor

- DragonMetrics

- Rankinity

- RankMonitor

- Wincher (Top 100 removed)

- Mangools SERPWatcher (partial depth)

- Similarweb (refreshes every 72 hours)

Top 50 Trackers

“Top 100” — But Not Daily

- SEOmonitor (1–20 daily, deeper weekly)

- Mangools (1–30 daily, deeper weekly)

- AgencyAnalytics (weekly)

- SpyFu (weekly)

- TrueRanker (weekly)

- Zutrix (weekly)

- Ubersuggest (weekly)

- Semrush (daily initially, then weekly snapshots)

- Ahrefs (weekly & unreliable)

Depth Exists, But at a Cost

- WebCEO (higher pricing)

- AWR / Advanced Web Ranking (double credits)

- DataForSEO (expensive daily depth)

- SEOptimer (on-demand only)

Hidden Blind Spots

- Nightwatch (stops once your site is found)

- SEO PowerSuite (ignores below position 30)

- Senuto (TOP3 / TOP10 / TOP50 only)

- Serpstat (not truly local)

Data accurate as of 23 February 2026. Rank-tracking depth, refresh frequency, and plan limitations vary by provider and may change over time. This assessment reflects our findings based on hands-on testing, documentation review, and current public information at the time of research.

The Ranktracker Relaunch: Full Top-100 Tracking, Rebuilt From the Ground Up

The return of full Top-100 tracking isn’t an isolated feature update. It’s part of a complete rebuild of Ranktracker.

Over the past year, the platform has been re-engineered to handle modern SERPs properly — not just page-one rankings, but the full depth of Google search as it exists today.

That relaunch includes:

- full daily Top-100 tracking by default, with no depth limits

- page-by-page SERP crawling instead of shortcut requests

- complete collection of all SERP features, including AI Overviews

- true local, language, and device-level normalisation

- full daily history with no weekly compression

- infrastructure designed to scale deep tracking reliably, not selectively

This matters because most rank trackers didn’t “lose” Top-100 tracking by accident. They backed away from it when shortcuts disappeared and costs increased.

Ranktracker went the other direction.

Instead of reducing depth or charging extra for it, the platform was rebuilt so full SERP visibility is the baseline, not an add-on.

A Thank-You for Early Users

Because this relaunch has been done quietly, without a hard launch announcement, Ranktracker is offering a limited thank-you to early users and returning teams.

Right now:

- 50% off your first month on any monthly plan

- Discount code: num=100

- Applies to full access, including daily Top-100 tracking and all core features

👉 Claim your 50% discount and start tracking

There’s no special setup required. Top-100 tracking is already enabled by default.

The offer exists for one reason: to let people see what full SERP visibility actually looks like in practice — not in marketing claims, but in daily use.

Why Positions 21–100 Matter in Practice

SEO progress usually looks like this:

- 92 → 68

- 68 → 44

- 44 → 27

- 27 → 16

- 16 → 9

- 9 → 4

If you can’t see the early stages, you can’t:

- forecast outcomes

- prove impact

- respond to threats early

Top-100 visibility isn’t a vanity metric. It’s how cause and effect in SEO becomes visible.
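A short sketch shows how much of that progression a shallower tracker would miss. It reuses the example positions from above; the function names are hypothetical.

```python
from typing import List, Optional, Tuple


def rank_movements(history: List[int]) -> List[Tuple[int, int, int]]:
    """Return (previous, current, places gained) for each consecutive check."""
    return [(prev, curr, prev - curr) for prev, curr in zip(history, history[1:])]


def first_visible_step(history: List[int], depth: int) -> Optional[int]:
    """Return the index of the first check where the page is within `depth`."""
    for step, position in enumerate(history):
        if position <= depth:
            return step
    return None


history = [92, 68, 44, 27, 16, 9, 4]

print(rank_movements(history)[:2])       # [(92, 68, 24), (68, 44, 24)]
print(first_visible_step(history, 100))  # 0
print(first_visible_step(history, 20))   # 4
print(first_visible_step(history, 10))   # 5
```

With Top 100 depth the page is visible from the first check. A Top 20 tracker first sees it at the fifth check, and a Top 10 tracker at the sixth, after most of the climb has already happened.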

The Practical Takeaway

Google didn’t remove the Top 100. It removed the shortcut.

Rankings beyond page one still exist. They’re still ordered. They still move every day. What changed is that seeing them accurately now requires real work.

If your rank tracker:

- stops at the Top 10, 20, 30, or 50

- refreshes deeper rankings weekly instead of daily

- compresses daily movement into weekly snapshots

- charges extra just to see beyond page two

- or never crawls the full SERP page by page

then you’re not looking at the full search results. You’re looking at a simplified version of them.

Ranktracker tracks the full Top 100 every day by default, crawling page by page, capturing all SERP features and AI layers, and assigning positions based on what Google actually shows — not estimates, samples, or delayed refreshes.

That’s what &num=100 represents today.

Not a URL parameter. Not a trick. A standard for seeing the real SERP.

FAQ: Top-100 Rankings, SERP Depth, AI, and Modern Rank Tracking

What does &num=100 do in Google Search?

It instructs Google to return up to 100 ranked results instead of the default 10. It does not affect ranking order or relevance; it only changes how many results are displayed at once.

Is num=100 the same as &num=100?

Yes. num=100 sets the result count. &num=100 is simply the same parameter appended to a URL that already contains other query parameters.

Did Google ever stop ranking beyond page one?

No. Google has always ranked far beyond page one. Page one is a display choice, not a ranking limit.

Did Google remove num=100?

Not the rankings, only the shortcut. Google changed how SERPs are delivered, and the parameter no longer reliably returns 100 results in a single request. Rankings beyond page one still exist.

Why did num=100 become unreliable over time?

Because Google moved away from static, fixed-length result lists toward dynamic rendering, continuous loading, and context-dependent result delivery.

Why did Google make Top-100 retrieval harder?

Because one-request SERP retrieval enabled large-scale scraping, competitive intelligence harvesting, and dataset creation at extremely low cost.

How is this related to AI and LLM training?

Search results are high-value, structured relevance data. Easy Top-100 access allowed LLM developers and data brokers to harvest ranking order, authority signals, and SERP composition at scale.

Did OpenAI and other companies train models on search data?

Public reporting confirms that large language models, including early OpenAI systems, were trained on mixtures of licensed data, human-created data, and publicly available web data — including search-accessible content.

Why does Google care about this now?

Because search results increasingly feed downstream systems: AI models, analytics platforms, monitoring tools, and competitive intelligence engines. That makes SERPs strategically sensitive data.

Is Google actively fighting SERP scraping?

Yes. Google has increased technical barriers, changed rendering methods, and is pursuing legal action against large-scale scraping providers, including lawsuits involving SERP APIs.

Why is Google suing SERP API providers?

Because they enable systematic extraction of Google’s search results at scale, bypassing intended usage patterns and commercial controls.

Does this mean Google is blocking SEO tools?

No. Google allows crawling consistent with realistic usage patterns. What it discourages is cheap, bulk, shortcut extraction.

Can humans still browse to page 10?

Yes. Page-by-page browsing still works. The friction applies to automation at scale, not individual users.

Can SEO tools still track the Top 100?

Yes — but only by crawling page by page, rendering dynamically, and handling SERP features correctly.

Why did many rank trackers stop showing the full Top 100?

Because once shortcuts disappeared, accurate Top-100 tracking became significantly more expensive to operate.

What makes Top-100 tracking expensive today?

It requires:

- 10 SERP pages per keyword

- dynamic rendering

- feature detection

- geo and device normalisation

- daily repetition

- large data storage

Why do some tools still claim “Top-100 tracking”?

Because the term is loosely defined in marketing. Many tools only partially retrieve the Top 100 or refresh it infrequently.

What does “weekly Top-100 refresh” actually mean?

It means positions beyond a shallow range (often Top 20 or Top 30) are only checked once per week.

Why do rankings look flat and then suddenly jump?

Because deeper positions were not refreshed daily. The jump reflects a data update, not a Google algorithm change.
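The effect is easy to reproduce with a toy model. The sketch below assumes a hypothetical tracker that checks the Top 20 daily and refreshes anything deeper every seventh day; the daily positions are invented for illustration.

```python
from typing import List, Optional


def reported_positions(daily: List[int], shallow_depth: int = 20,
                       refresh_every: int = 7) -> List[Optional[int]]:
    """Simulate what a tracker with weekly deep refreshes would report."""
    reported = []
    last_known = None
    for day, actual in enumerate(daily):
        # The position is only updated when it falls inside the daily
        # shallow check, or on a scheduled deep refresh day.
        if actual <= shallow_depth or day % refresh_every == 0:
            last_known = actual
        reported.append(last_known)
    return reported


# Invented data: a page climbing steadily from 60 to 19 over two weeks.
daily = [60, 58, 55, 51, 48, 44, 41, 37, 33, 30, 26, 23, 21, 19]
print(reported_positions(daily))
# [60, 60, 60, 60, 60, 60, 60, 37, 37, 37, 37, 37, 37, 19]
```

The real movement is gradual, but the report shows a flat line followed by a 23-place jump on the refresh day.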

Why do some tools stop crawling once a site is found?

To reduce cost. This prevents full competitor visibility and does not retrieve the complete SERP.
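As a rough illustration of what gets lost, the sketch below compares an early-stopping crawl with a full one. The crawl function, the domains, and the three-page SERP are hypothetical, and the pages are assumed to be already fetched and parsed.

```python
from typing import List


def crawl(pages: List[List[str]], tracked_domain: str,
          stop_when_found: bool) -> List[str]:
    """Collect domains page by page, optionally stopping at the tracked one."""
    seen: List[str] = []
    for results in pages:
        seen.extend(results)
        if stop_when_found and tracked_domain in results:
            break  # saves requests, but later pages are never seen
    return seen


pages = [
    ["a.example", "b.example"],
    ["mysite.example", "c.example"],
    ["d.example", "e.example"],
]

early = crawl(pages, "mysite.example", stop_when_found=True)
full = crawl(pages, "mysite.example", stop_when_found=False)

print(len(early), len(full))                      # 4 6
print([d for d in full if d not in early])        # ['d.example', 'e.example']
```

The early-stopping crawl reports the tracked site's position correctly, but every competitor ranking below it is invisible.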

Why does depth matter if AI Overviews exist?

Because AI Overviews pull from pages Google already ranks — including pages well outside the Top 10.

Can pages ranking 30–60 influence AI Overviews?

Yes. Ranking depth does not equal visibility depth in AI-augmented SERPs.

Does AI replace organic rankings?

No. AI Overviews sit on top of rankings. They summarise ranked content; they do not replace ranking order.

Why does AI make deeper tracking more important?

Because influence can appear before traffic. Pages can shape AI output long before they reach page one.

If I only track Top 10, what am I missing?

Early momentum, competitive movement, content validation signals, and AI-driven visibility shifts.

Why do some tools compress daily history into weekly data?

To reduce storage and processing costs.

Why is compressed history a problem?

It removes day-to-day causality. You lose the ability to tie changes to specific actions.

Is Top-100 tracking useful for local SEO?

Yes. Local results fluctuate heavily, and progress often starts deep before breaking into packs or page one.

Is Top-100 tracking useful for international SEO?

Yes. International SERPs often move more slowly, and progress tends to start deeper. Early signals almost always appear beyond page one.

Is Top-100 tracking useful for link building?

Yes. Link impact often shows first in positions 40–80 before climbing further.

Does Google personalise the Top 100?

Yes. Device, location, language, and intent can all change SERP composition.

Why must SERPs be normalised by device and location?

Because mobile, desktop, and local SERPs can differ materially in structure and ordering.

Why is page-by-page crawling the only reliable method?

Because it mirrors how Google actually serves results to users.

Why don’t all rank trackers do this?

Because it costs more — in infrastructure, compute, proxies, and storage.

Does Ranktracker do this for every keyword by default?

Yes. Full Top-100 crawling, including SERP features and AI Overviews, is performed daily by default.

Is Top-100 tracking a legacy concept?

No. The parameter is legacy. The need for depth is more relevant than ever.

What does &num=100 represent today?

Not a URL trick — but a benchmark for complete SERP visibility.