Intro

LLMs don’t read content the way humans do. The pipelines around them break it into semantic fragments, or chunks, that models can:

- embed
- classify
- retrieve
- rank
- understand
- cite

Among all content formats, three structures consistently outperform everything else for AI interpretation:

- ✔ FAQs
- ✔ lists
- ✔ tables

These formats split content into small, self-contained units with clean semantic boundaries, so each unit embeds as a single idea and follows a pattern that LLMs can use as a reference point.

But most websites implement them incorrectly — costing them visibility in:

- Google AI Overviews
- ChatGPT Search
- Perplexity
- Gemini
- Copilot
- RAG-driven enterprise systems

This guide explains exactly how to optimize FAQs, lists, and tables so LLMs can learn from them effectively — without sacrificing human readability.

1. Why These Formats Matter So Much to LLMs

LLMs rely on predictable structure to interpret and retrieve meaning.

FAQs, lists, and tables are powerful because they:

- ✔ isolate concepts
- ✔ reduce semantic noise
- ✔ define boundaries clearly
- ✔ produce small, crisp embeddings
- ✔ align with retrieval patterns
- ✔ surface answers directly
- ✔ map cleanly to knowledge graphs

These formats tend to dominate generative answer citations because they are:

- concise
- structured
- explicit
- extractable
- unambiguous

If your site isn’t using them correctly, you lose a massive opportunity to feed AI systems dependable, trustworthy signals.

2. How LLMs Parse FAQs, Lists, and Tables (Technical Breakdown)

FAQs

LLMs treat each Q&A pair as a micro-document. This improves:

- embedding accuracy
- classification
- retrieval ranking
- direct answer extraction
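
One way to picture the "micro-document" idea is a chunker that emits each question-and-answer pair as its own record. The sketch below assumes the FAQ has already been extracted as plain text with `Q:` and `A:` prefixes. That prefix convention and the `FaqChunk` and `split_faq` names are illustrative assumptions, not something any particular retrieval system requires.

```python
from dataclasses import dataclass


@dataclass
class FaqChunk:
    question: str
    answer: str

    def as_document(self) -> str:
        # Keep the question and answer together so the chunk is self-contained.
        return f"Question: {self.question}\nAnswer: {self.answer}"


def split_faq(text: str) -> list[FaqChunk]:
    """Split 'Q: ... / A: ...' text into one chunk per Q&A pair."""
    chunks: list[FaqChunk] = []
    question = None
    answer_lines: list[str] = []
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("Q:"):
            # A new question closes the previous pair, if there is one.
            if question is not None and answer_lines:
                chunks.append(FaqChunk(question, " ".join(answer_lines)))
            question = line[2:].strip()
            answer_lines = []
        elif line.startswith("A:"):
            answer_lines = [line[2:].strip()]
        elif line and answer_lines:
            # Continuation line of the current answer.
            answer_lines.append(line)
    if question is not None and answer_lines:
        chunks.append(FaqChunk(question, " ".join(answer_lines)))
    return chunks
```

Each `FaqChunk.as_document()` string could then be embedded on its own, so a query about one question never pulls in the text of a neighbouring answer.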

Lists

Each bullet is chunked as a separate semantic unit. LLMs treat list items as:

- facts
- attributes
- steps
- components
- definitions

Lists produce highly retrievable micro-embeddings.

Tables

Tables create structured data relationships. These can:

- map entities
- compare attributes
- define categories

But tables also create embedding problems when they are not formatted cleanly, so you must structure them deliberately for LLM interpretation.

3. Optimizing FAQs for LLM Learning

FAQs are arguably the most valuable format for LLM retrieval, because each question-and-answer pair already has the shape of a query and its response.

Here’s how to perfect them.

Rule 1 — One Question = One Concept

Avoid compound questions like:

“What is AIO, and how does it work, and why does it matter?”

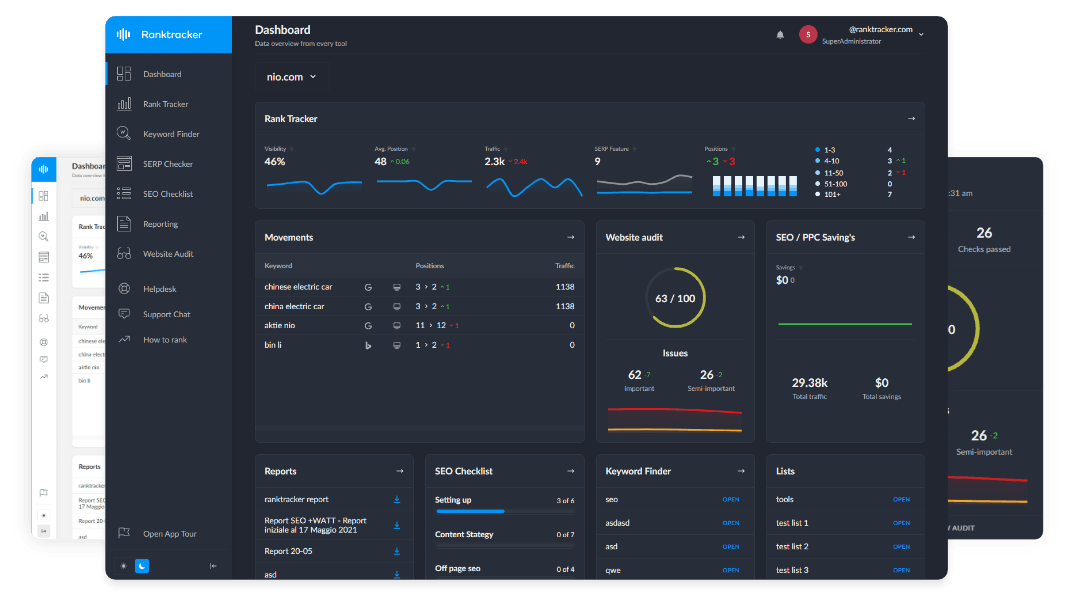

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

Create a free accountOr Sign in using your credentials

LLMs cannot cleanly embed mixed concepts.

Use:

- “What is AIO?”
- “How does AIO work?”
- “Why is AIO important in 2025?”
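
A rough editorial check for compound questions might look like the sketch below. It is a heuristic under stated assumptions: it flags a question when a second interrogative clause is joined by "and" or "or", or when the text contains more than one question mark. The function name `is_compound_question` and the list of openers are hypothetical, and the pattern will produce some false positives.

```python
import re

# Common interrogative openers (an illustrative, non-exhaustive list).
_OPENERS = r"(?:what|how|why|where|when|who|which)"

# A second interrogative clause joined by "and"/"or" suggests a compound question.
_COMPOUND = re.compile(rf"\b(?:and|or)\s+{_OPENERS}\b", re.IGNORECASE)


def is_compound_question(question: str) -> bool:
    """Heuristic: True if the question appears to ask more than one thing."""
    return bool(_COMPOUND.search(question)) or question.count("?") > 1


for q in [
    "What is AIO, and how does it work, and why does it matter?",
    "What is AIO?",
]:
    print(q, "->", "split it" if is_compound_question(q) else "ok")
```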

Rule 2 — Use Literal, Question-Style Formatting

LLMs prefer:

- “What is…”
- “How does…”
- “Why does…”
- “Where can…”
- “When should…”

Avoid rhetorical or stylized questions.

Rule 3 — The Answer Must Begin With the Answer

Correct:

“AIO is the practice of structuring content so that large language models can interpret, embed, and cite it accurately.”

Incorrect:

“There are many approaches to AI search, but before we get to that…”

Always answer immediately.

Rule 4 — Keep Answers 2–4 Sentences

LLMs retrieve Q&A pairs as compact blocks.

Short = clean. Long = noisy.
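
The 2–4 sentence guideline can be checked mechanically before publishing. This is a minimal sketch that assumes sentences end with `.`, `!` or `?` followed by whitespace, so abbreviations such as "e.g." will be over-counted. The helper names are hypothetical.

```python
import re


def sentence_count(answer: str) -> int:
    """Rough sentence count: split on ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", answer.strip())
    return len([p for p in parts if p])


def answer_length_ok(answer: str, low: int = 2, high: int = 4) -> bool:
    """True when the answer falls inside the 2-4 sentence guideline."""
    return low <= sentence_count(answer) <= high
```

Answers that fail the check are candidates for trimming or for splitting into a second question.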

Rule 5 — Reinforce Entities Explicitly

Include stable entity names:

“Ranktracker’s Web Audit helps ensure your content is machine-readable.”

This improves entity anchoring.

Rule 6 — Use FAQPage Schema

This is critical.

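FAQPage markup in JSON-LD states explicitly which text is a question and which is its accepted answer, so crawlers and retrieval pipelines that read structured data do not have to infer it from page layout.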
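
For reference, FAQPage markup follows the schema.org pattern of a `mainEntity` list of `Question` items, each with an `acceptedAnswer`. The sketch below generates that JSON-LD from question and answer pairs. The function name `faq_page_jsonld` is an assumption made for illustration, and the sample answer reuses the definition quoted earlier in this guide.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


markup = faq_page_jsonld([
    ("What is AIO?",
     "AIO is the practice of structuring content so that large language "
     "models can interpret, embed, and cite it accurately."),
])
# The JSON-LD is placed in a script tag in the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The questions and answers in the markup should match the visible FAQ text on the page word for word.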

Rule 7 — Place High-Value FAQs on Category Pages

LLMs often lift FAQs from:

- service pages
- category hubs
- homepages

Not just blog posts.

4. Optimizing Lists for LLM Learning

Lists are LLM favorites — but you must format them correctly.

Rule 1 — Use Lists for Distinct, Non-Overlapping Concepts

LLMs assume each bullet = one semantic unit.

Never mix:

- benefits + features
- examples + definitions
- pros + steps

Use separate lists instead.

Rule 2 — Start List Items With the Concept Itself

Example:

“Semantic clarity — LLMs need precise meaning to embed text accurately.”

Avoid:

“Because LLMs prefer semantic clarity, you should…” (too long, and the concept is buried mid-sentence).

Starting with the concept increases classification precision.

Rule 3 — Keep Bullets Short

Ideal length:

- 1 line = best
- 2 lines = acceptable
- 3+ lines = embedding noise

Rule 4 — Use Parallel Structure

Every bullet should follow the same pattern.

This creates structural consistency the model can learn from.

Rule 5 — Use Lists Frequently

Use lists for:

- steps
- benefits
- definitions
- mistakes
- symptoms
- components
- attributes
- frameworks

LLMs prefer lists over paragraphs for almost every concept.

5. Optimizing Tables for LLM Learning

Tables are the most misunderstood structure — they can be incredibly useful or extremely harmful depending on formatting.

Why Tables Are Hard for LLMs

Tables often contain:

- multi-cell meaning
- uneven semantic density
- merged cells
- nested concepts
- ambiguous headers
- non-parallel rows

This leads to embedding fragmentation.

How to Make Tables LLM-Friendly

Rule 1 — Use Simple, Unmerged Cells Only

Merged cells confuse embedding boundaries.

Never merge.
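
Merged cells are easy to find automatically, because in HTML they show up as `colspan` or `rowspan` attributes with a value other than 1. The sketch below uses Python's standard `html.parser` module to flag them, along with rows of uneven width and tables wider than a chosen column limit. It assumes a single, non-nested table. The `TableAudit` and `audit_table` names and the default limit of 4 columns are illustrative assumptions.

```python
from html.parser import HTMLParser


class TableAudit(HTMLParser):
    """Collect merged cells and per-row cell counts from an HTML table."""

    def __init__(self) -> None:
        super().__init__()
        self.merged: list[str] = []      # e.g. "th colspan=2"
        self.row_widths: list[int] = []  # number of cells in each <tr>

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.row_widths.append(0)
        elif tag in ("td", "th"):
            if self.row_widths:
                self.row_widths[-1] += 1
            for name, value in attrs:
                # A span of 1 is the default and does not merge anything.
                if name in ("colspan", "rowspan") and value not in (None, "1"):
                    self.merged.append(f"{tag} {name}={value}")


def audit_table(html: str, max_columns: int = 4) -> list[str]:
    """Return readable warnings; an empty list means no issues were found."""
    parser = TableAudit()
    parser.feed(html)
    parser.close()
    warnings = [f"merged cell: {m}" for m in parser.merged]
    if parser.row_widths and max(parser.row_widths) > max_columns:
        warnings.append(f"table has more than {max_columns} columns")
    if len(set(parser.row_widths)) > 1:
        warnings.append("rows have different cell counts")
    return warnings
```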

Rule 2 — Ensure Every Row Represents One Entity or Concept

Each row must be self-contained.

Example:

Correct:

| Feature | Ranktracker | Competitor X |

Incorrect:

| Tool Features | Ranktracker (mobile / desktop / enterprise) |

Mixed meaning = embedding chaos.

Rule 3 — Keep Header Labels Literal and Short

Good headers:

- Feature
- Price
- Region
- Keyword Volume

Bad headers:

- “What You Get in This Plan…”
- “Comparison of All Core Tools Across Multiple Dimensions”

Headers must be machine-readable.

Rule 4 — Prefer Narrow Tables

3–4 columns max.

Wide tables dilute meaning and degrade embeddings.

Rule 5 — Always Follow a Table With a Summary Paragraph

This gives the model:

- structured data
- then a natural language explanation

The summary reinforces the table’s meaning.
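
A first draft of that summary can be derived from the table itself by restating each row as one self-contained sentence. The sketch below treats the first column as the row's subject and pairs every other cell with its header. The function name `rows_to_sentences` is hypothetical, and the cell values are placeholders for illustration, not real product data.

```python
def rows_to_sentences(headers: list[str], rows: list[list[str]]) -> list[str]:
    """Restate each table row as one self-contained sentence.

    The first column is treated as the row's subject; every other cell is
    paired with its column header.
    """
    sentences = []
    for row in rows:
        if len(row) != len(headers):
            raise ValueError(f"row {row!r} does not match headers {headers!r}")
        subject, *cells = row
        facts = [f"{header} = {cell}" for header, cell in zip(headers[1:], cells)]
        sentences.append(f'{headers[0]} "{subject}": ' + "; ".join(facts) + ".")
    return sentences


# Placeholder values, for illustration only.
headers = ["Feature", "Ranktracker", "Competitor X"]
rows = [["Feature A", "yes", "no"], ["Feature B", "yes", "yes"]]
for sentence in rows_to_sentences(headers, rows):
    print(sentence)
```

The generated sentences are only raw material. A human editor should still turn them into a readable paragraph.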

Rule 6 — Use Tables for the Right Use Cases

Optimal for:

- comparisons
- pricing
- data
- features
- metrics

Not ideal for:

- explanations
- definitions
- processes

6. The Combined Structure: FAQ + Lists + Tables = Maximum AI Visibility

When used together, these formats create:

- ✔ multiple embedding types
- ✔ stable repetition patterns
- ✔ hierarchical clarity
- ✔ strong entity reinforcement
- ✔ extractable meaning blocks
- ✔ high citation probability

This is the structure AI models prefer to learn from and reference.

7. How Ranktracker Tools Support These Formats (Functional Mapping)

AI Article Writer

Produces LLM-friendly FAQs and lists automatically — you refine them for authenticity.

Web Audit

Flags:

- missing FAQ schema
- large, unchunked text blocks
- structural issues affecting LLM readability
- broken tables (HTML errors)

Keyword Finder

Identifies question-based topics ideal for FAQ content and lists.

Final Thought: Structured Meaning Wins in the LLM Era

FAQs, lists, and tables aren’t formatting choices — they’re semantic infrastructure.

They determine:

- how cleanly your content embeds
- how accurately it retrieves
- how confidently LLMs cite it
- how consistently you appear in AI summaries
- how your brand enters the global knowledge graph

Use these formats deliberately and you become machine-legible. Combine them with human insights and you become authoritative.

That’s the new standard of content in 2025 and beyond.