Intro

AI systems are fast becoming some of the world’s most influential publishers.

ChatGPT, Google Gemini, Microsoft Copilot, Perplexity, Claude, and Apple Intelligence answer billions of queries every day, summarizing, evaluating, and recommending brands, often without the user clicking through to a website at all.

That means your reputation increasingly depends on how AI describes you, not how you describe yourself.

But here’s the problem:

LLMs hallucinate. LLMs misinterpret. LLMs inherit bias from their training data. LLMs often describe brands incorrectly. LLMs may confuse similar companies. LLMs may pick competitors instead of you.

This creates a new discipline marketers must master:

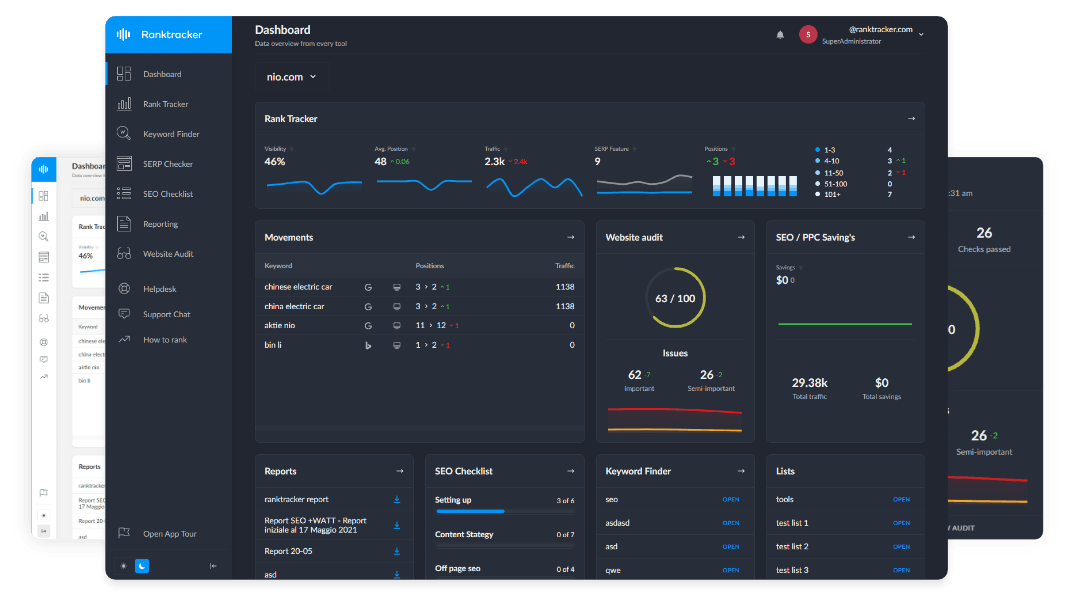

Preventing bias and misrepresentation in AI-generated answers is no longer optional. It’s survival.

This article explains why misrepresentation happens, how LLMs develop bias, and the actionable steps every brand must take to ensure AI describes them accurately, consistently, and fairly.

1. Why LLMs Produce Biased or Incorrect Brand Answers

AI misrepresentation isn’t random. It comes from identifiable patterns in model behavior.

Below are the seven root causes.

1. Incomplete or Noisy Training Data

If your brand has:

✔ inconsistent descriptions

✔ outdated information

✔ conflicting details

✔ low external consensus

…LLMs fill the gaps with guesses.

Bad inputs → bad outputs.

2. Semantic Drift (Entity Confusion)

If your brand resembles:

✔ a competitor

✔ a generic term

✔ a common phrase

✔ a category label

LLMs merge entities or misattribute facts.

Example: “Rank Tracker” products vs. Ranktracker (the brand).

3. Overrepresented Competitors

If your competitors have:

✔ more backlinks

✔ a stronger entity footprint

✔ more structured data

✔ better documentation

✔ clearer positioning

LLMs treat them as the authoritative reference point.

You become the “secondary” or “generic” option.

4. Weak or Missing Structured Data

Without Schema.org markup and a Wikidata entry:

✔ AI can’t verify your facts

✔ entity relationships remain unclear

✔ model confidence drops

✔ hallucinations increase

AI systems lean heavily on structured facts to verify claims and reduce errors.

5. Outdated Brand Content Across the Web

LLMs ingest everything:

- old reviews
- old pricing
- outdated features
- legacy pages
- past acquisitions
- discontinued tools

If you don’t clean up your footprint, AI models may treat outdated information as current truth.

6. Low Authoritativeness / E-E-A-T Weakness

Models trust:

✔ stable domains

✔ expert authors

✔ consistent entities

✔ high-authority backlinks

Bias occurs when your brand doesn’t meet AI trust thresholds.

7. Lack of Direct Engagement With AI Platforms

Most brands don’t:

✔ submit corrections

✔ update model answers

✔ maintain AI-friendly data feeds

✔ patch inconsistencies

✔ file hallucination reports

AI companies reward proactive brands.

2. The Types of AI Misrepresentation You Must Prevent

AI misrepresentation isn’t always obvious. It often occurs in subtle, damaging forms.

1. Factual Errors

Incorrect:

- features
- pricing
- company size
- product categories
- capabilities
- founder details
- target audience

2. Competitor Bias

Models may:

- recommend your competitor first
- prioritize their features
- downplay your strengths
- miscategorize your product
- confuse your name

Loss of AI positioning = loss of market share.

3. Feature Invention (Hallucination)

LLMs may:

- assign features you don’t have
- claim integrations you never built
- list tools you don’t offer

This creates legal risk: customers may buy on the strength of features or integrations you never offered.

4. Category Misalignment

AI may label you incorrectly, e.g.:

- Ranktracker → analytics tool
- SaaS → agency
- CRM → email platform
- cybersecurity → marketing

Category determines visibility in AI answers.

5. Sentiment Distortion

AI may:

- emphasize negative reviews
- over-weight outdated criticism
- misrepresent user satisfaction

This affects recommendation likelihood.

6. Identity Fragmentation

The model treats your brand as multiple entities due to:

- name variations
- old domains
- inconsistent brand descriptions
- conflicting Schema

This weakens entity authority.

3. How to Prevent Bias and Misrepresentation (Brand Safety Framework B-10)

Here is the 10-pillar framework to stabilize your brand identity inside LLMs.

Pillar 1 — Establish a Canonical Brand Definition

Write one canonical, machine-friendly sentence that defines your brand.

Example:

“Ranktracker is an all-in-one SEO platform offering rank tracking, keyword research, SERP analysis, website audits, and backlink tools.”

Use it consistently:

✔ homepage

✔ About page

✔ Schema

✔ Wikidata

✔ PR

✔ directories

✔ author bios

Consistency reduces hallucinations.
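One way to enforce this is to check every surface for the exact canonical sentence. Below is a minimal Python sketch. It assumes you can export each page's text into a dictionary, and the page names and texts are hypothetical. A normalized substring match is just one possible drift check, not a standard method.

```python
import re

# Canonical definition taken from the example above.
CANONICAL = (
    "Ranktracker is an all-in-one SEO platform offering rank tracking, "
    "keyword research, SERP analysis, website audits, and backlink tools."
)


def normalize(text: str) -> str:
    """Lowercase, straighten curly quotes, and collapse whitespace runs."""
    text = text.replace("\u2019", "'").replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip().lower()


def audit_definition(pages: dict, canonical: str = CANONICAL) -> dict:
    """Map each page name to True if its text contains the canonical sentence."""
    target = normalize(canonical)
    return {name: target in normalize(body) for name, body in pages.items()}


if __name__ == "__main__":
    # Hypothetical page texts; in practice, load these from your CMS or a crawl.
    pages = {
        "homepage": "Ranktracker is an all-in-one SEO platform offering rank tracking, "
        "keyword research, SERP analysis, website audits, and backlink tools.",
        "directory listing": "Ranktracker is a rank tracking tool for agencies.",
    }
    for name, consistent in audit_definition(pages).items():
        print(f"{name}: {'consistent' if consistent else 'drift'}")
```

Exact matching is deliberately strict. A looser similarity score would accept paraphrases, and paraphrase is exactly the kind of drift this pillar is meant to prevent.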

Pillar 2 — Build Strong Structured Data

Use schema types:

✔ Organization

✔ Product

✔ SoftwareApplication

✔ FAQPage

✔ HowTo

✔ Review

✔ Person (for authors)

Structured data makes your brand unambiguous to LLMs.
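These types work best when they reference each other. The Python sketch below builds a JSON-LD `@graph` in which the SoftwareApplication and an author Person both point back to one Organization through a shared `@id`, and it reuses the canonical sentence as the description. The site URL, author name, and Wikidata QID are placeholders. This is one reasonable layout, not the only valid one.

```python
import json

# Placeholder values: replace SITE and the Wikidata QID with your own.
SITE = "https://www.example.com"
ORG_ID = f"{SITE}/#organization"


def build_brand_graph(description: str) -> dict:
    """Return a JSON-LD @graph that ties the product and author to one Organization @id."""
    return {
        "@context": "https://schema.org",
        "@graph": [
            {
                "@type": "Organization",
                "@id": ORG_ID,
                "name": "Ranktracker",
                "url": SITE,
                "description": description,
                "sameAs": ["https://www.wikidata.org/wiki/QID_PLACEHOLDER"],
            },
            {
                "@type": "SoftwareApplication",
                "name": "Ranktracker",
                "applicationCategory": "BusinessApplication",
                "operatingSystem": "Web",
                "publisher": {"@id": ORG_ID},
            },
            {
                "@type": "Person",
                "name": "Jane Doe",  # hypothetical author
                "worksFor": {"@id": ORG_ID},
            },
        ],
    }


if __name__ == "__main__":
    graph = build_brand_graph(
        "Ranktracker is an all-in-one SEO platform offering rank tracking, "
        "keyword research, SERP analysis, website audits, and backlink tools."
    )
    # Emit the block you would paste into the page <head>.
    print('<script type="application/ld+json">')
    print(json.dumps(graph, indent=2))
    print("</script>")
```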

Pillar 3 — Strengthen Wikidata (The #1 LLM Source)

Wikidata feeds:

✔ Bing

✔ Perplexity

✔ ChatGPT

✔ RAG pipelines

✔ knowledge graphs

Update:

- company description
- product relationships
- categories
- external IDs
- founders
- aliases

Wikidata accuracy = AI accuracy.
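Before you edit, it helps to check what Wikidata currently says about you. Wikidata serves entity JSON at `https://www.wikidata.org/wiki/Special:EntityData/<QID>.json`, with `labels`, `descriptions`, and `aliases` nested under `entities[<QID>]`. The minimal Python sketch below audits a payload that has already been fetched. The QID, label, and aliases are hypothetical. It covers only labels, descriptions, and aliases. Founders, categories, and external IDs live in `claims` and would need extra logic.

```python
# Audits an already-fetched Wikidata entity payload against your own facts.


def audit_wikidata_entity(payload: dict, qid: str, expected_label: str,
                          expected_aliases: set, lang: str = "en") -> list:
    """Return human-readable problems; an empty list means no issues were found."""
    entity = payload["entities"][qid]
    problems = []

    label = entity.get("labels", {}).get(lang, {}).get("value")
    if label != expected_label:
        problems.append(f"label is {label!r}, expected {expected_label!r}")

    if not entity.get("descriptions", {}).get(lang, {}).get("value"):
        problems.append(f"no {lang} description")

    aliases = {a["value"] for a in entity.get("aliases", {}).get(lang, [])}
    for missing in sorted(expected_aliases - aliases):
        problems.append(f"missing alias {missing!r}")

    return problems


if __name__ == "__main__":
    # Hypothetical payload trimmed to the fields this check reads.
    sample = {
        "entities": {
            "Q_PLACEHOLDER": {
                "labels": {"en": {"language": "en", "value": "Ranktracker"}},
                "descriptions": {},
                "aliases": {"en": [{"language": "en", "value": "Rank Tracker"}]},
            }
        }
    }
    for problem in audit_wikidata_entity(sample, "Q_PLACEHOLDER", "Ranktracker",
                                         {"Rank Tracker", "Ranktracker.com"}):
        print(problem)
```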

Pillar 4 — Fix Entity Fragmentation

Consolidate:

✔ old brand names

✔ alternate spellings

✔ subdomain variants

✔ redirects

✔ previous corporate identities

LLMs treat inconsistencies as separate entities.
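To find fragmentation, you can collapse every brand mention you have collected to a simple key and see which spellings share one. The Python sketch below assumes a hypothetical list of mentions gathered from directories, reviews, and old pages. Its normalization rules (lowercasing, stripping punctuation and a few domain suffixes) are illustrative choices, not a standard.

```python
import re
from collections import defaultdict

# Domain suffixes to ignore when comparing names (an illustrative, incomplete list).
DOMAIN_SUFFIX = re.compile(r"\.(com|io|net|org)$")


def entity_key(name: str) -> str:
    """Reduce a brand mention to lowercase letters and digits, minus a trailing domain suffix."""
    name = DOMAIN_SUFFIX.sub("", name.strip().lower())
    return re.sub(r"[^a-z0-9]", "", name)


def group_variants(mentions: list) -> dict:
    """Return only the keys that more than one distinct spelling collapses to."""
    groups = defaultdict(set)
    for mention in mentions:
        groups[entity_key(mention)].add(mention)
    return {key: spellings for key, spellings in groups.items() if len(spellings) > 1}


if __name__ == "__main__":
    # Hypothetical mentions gathered from listings, reviews, and old pages.
    mentions = ["Ranktracker", "Rank Tracker", "RankTracker.com", "Rank-Tracker", "Acme SEO"]
    for key, spellings in group_variants(mentions).items():
        print(key, sorted(spellings))
```

Treat each group as a list to review, not as an automatic merge. Some variants, such as generic "rank tracker" mentions, may refer to a different thing entirely.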

Pillar 5 — Clean Up Your External Footprint

Audit:

- old business listings
- outdated SaaS comparisons
- legacy PR
- orphaned review sites
- scraped data
- abandoned directories

LLMs ingest everything — including misinformation.
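Once you have an inventory of external listings, a small script can rank which ones to fix first. The Python sketch below assumes a hypothetical record shape (source, last-updated date, displayed price) and an arbitrary one-year staleness threshold. The sources and prices are placeholders.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class Listing:
    source: str
    last_updated: date
    price_usd: Optional[float]  # None when the listing shows no price


def flag_listings(listings: List[Listing], current_price: float, today: date,
                  max_age_days: int = 365) -> List[Tuple[str, List[str]]]:
    """Return (source, reasons) for listings that look stale or show the wrong price."""
    flagged = []
    for listing in listings:
        reasons = []
        age = (today - listing.last_updated).days
        if age > max_age_days:
            reasons.append(f"not updated in {age} days")
        # Exact comparison assumes prices are recorded consistently (same currency and plan).
        if listing.price_usd is not None and listing.price_usd != current_price:
            reasons.append(f"shows price {listing.price_usd}, current is {current_price}")
        if reasons:
            flagged.append((listing.source, reasons))
    return flagged


if __name__ == "__main__":
    # Hypothetical audit data; the sources and prices are placeholders.
    listings = [
        Listing("old directory", date(2023, 1, 1), 49.0),
        Listing("review site", date(2024, 12, 1), 29.0),
        Listing("press page", date(2024, 11, 15), None),
    ]
    for source, reasons in flag_listings(listings, current_price=29.0, today=date(2025, 1, 1)):
        print(source, "->", "; ".join(reasons))
```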

Pillar 6 — Publish Factual, Machine-Readable Content

AI prefers:

✔ short factual summaries

✔ Q&A blocks

✔ step-by-step sections

✔ definitions

✔ lists

✔ tables (as real HTML tables, not images)

Clarity reduces hallucination.
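Q&A blocks can also be exposed as schema.org `FAQPage` markup, so the question-and-answer structure is explicit rather than inferred from the layout. The Python sketch below wraps question/answer pairs in that structure. The sample answer reuses the canonical definition. Google has limited FAQ rich results to a narrow set of sites since 2023, so treat this as machine-readable labeling rather than a rich-result tactic.

```python
import json


def faq_jsonld(pairs: list) -> dict:
    """Wrap (question, answer) pairs in schema.org FAQPage markup."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    # Example Q&A; the answer reuses the canonical definition from Pillar 1.
    pairs = [(
        "What is Ranktracker?",
        "Ranktracker is an all-in-one SEO platform offering rank tracking, "
        "keyword research, SERP analysis, website audits, and backlink tools.",
    )]
    print(json.dumps(faq_jsonld(pairs), indent=2))
```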

Pillar 7 — Build Authoritativeness Through Links

Backlinks create:

✔ entity stability

✔ category relevance

✔ external consensus

Use:

- Ranktracker Backlink Checker
- Backlink Monitor

Backlinks aren’t just SEO signals — they’re AI trust signals.

Pillar 8 — Monitor AI Answers Regularly

Check:

✔ ChatGPT

✔ Gemini

✔ Copilot

✔ Claude

✔ Perplexity

Look for:

- inaccuracies
- hallucinations
- competitor bias
- sentiment issues
- outdated facts
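Checking answers by hand doesn't scale across five assistants, so a simple keyword triage can flag which answers need a closer look. The Python sketch below assumes you have already collected answer text, whether manually or through each vendor's API (not shown). The fact lists, false claims, and competitor name are hypothetical. Whole-word matching is crude: "backlink" will not match "backlinks", so list the variants you care about, and use the script as a first pass before human review.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class AnswerAudit:
    missing_facts: List[str] = field(default_factory=list)
    false_claims: List[str] = field(default_factory=list)
    competitors: List[str] = field(default_factory=list)


def mentions(text: str, term: str) -> bool:
    """Case-insensitive whole-word match for a term or phrase."""
    return re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE) is not None


def audit_answer(answer: str, required: List[str], forbidden: List[str],
                 competitors: List[str]) -> AnswerAudit:
    """Compare one AI answer with expected facts, known-false claims, and rival names."""
    return AnswerAudit(
        missing_facts=[t for t in required if not mentions(answer, t)],
        false_claims=[t for t in forbidden if mentions(answer, t)],
        competitors=[t for t in competitors if mentions(answer, t)],
    )


if __name__ == "__main__":
    # Hypothetical answer text and term lists.
    answer = ("Ranktracker is an SEO tool for rank tracking and email marketing. "
              "CompetitorX is a popular alternative.")
    print(audit_answer(
        answer,
        required=["rank tracking", "keyword research", "backlink"],
        forbidden=["email marketing", "CRM"],
        competitors=["CompetitorX"],
    ))
```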

Pillar 9 — Submit Model Corrections

Most major platforms offer channels for reporting incorrect answers, though names and processes vary:

✔ OpenAI “Model Correction” Forms

✔ Google AI Overview Feedback

✔ Microsoft Copilot Correction Portal

✔ Perplexity Source Correction

✔ Meta LLaMA Enterprise Feedback

Corrections are essential for maintaining factual stability.

Pillar 10 — Maintain Recency and Update Signals

AI engines interpret:

✔ changelogs

✔ updated dates

✔ new feature announcements

✔ recent blog posts

✔ press releases

…as trust markers.

Stay fresh → stay accurate.
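One machine-readable place where update dates live is your XML sitemap's `lastmod` field. Accurate `lastmod` values at least make freshness explicit, although whether a given AI engine reads them is not something to take for granted. The Python sketch below lists pages whose `lastmod` is older than an arbitrary 180-day threshold or missing entirely. The URLs in the sample sitemap are placeholders.

```python
import xml.etree.ElementTree as ET
from datetime import date
from typing import List, Optional, Tuple

# Standard namespace from the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap excerpt.
SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/changelog</loc><lastmod>2024-12-20</lastmod></url>
  <url><loc>https://www.example.com/pricing</loc><lastmod>2023-06-01T08:00:00+00:00</lastmod></url>
  <url><loc>https://www.example.com/about</loc></url>
</urlset>"""


def stale_urls(sitemap_xml: str, today: date,
               max_age_days: int = 180) -> List[Tuple[str, Optional[int]]]:
    """List (url, age_in_days) for stale pages; age is None when lastmod is missing."""
    root = ET.fromstring(sitemap_xml)
    stale = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", namespaces=NS)
        lastmod = url.findtext("sm:lastmod", namespaces=NS)
        if lastmod is None:
            stale.append((loc, None))
            continue
        # lastmod may be a date or a full W3C datetime; the first 10 characters are the date.
        age = (today - date.fromisoformat(lastmod[:10])).days
        if age > max_age_days:
            stale.append((loc, age))
    return stale


if __name__ == "__main__":
    for loc, age in stale_urls(SAMPLE, today=date(2025, 1, 1)):
        print(loc, "no lastmod" if age is None else f"{age} days old")
```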

4. Preventing Bias in LLM Answers: Advanced Techniques

For brands with high search/AI exposure:

1. Publish Neutral, Factual Pages for RAG Ingestion

LLMs prefer fact blocks over marketing copy.

2. Maintain Clarity in Category Positioning

Repeat your category consistently (e.g., “all-in-one SEO platform”).

3. Strengthen Brand Relationships in Knowledge Graphs

Use Schema.org properties such as:

- sameAs
- knowsAbout
- subjectOf
- brand
- mainEntity
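The sketch below shows all five properties in one JSON-LD graph, built in Python. Every reference points to a single Organization `@id`. Every URL, the product name, and the profile links are hypothetical placeholders. `brand` is used on a Product here because it ties a generic-sounding product name back to the company, which is the kind of entity confusion described in section 1.

```python
import json

# Placeholder site and profile URLs; replace with your own.
SITE = "https://www.example.com"
ORG_REF = {"@id": f"{SITE}/#organization"}


def build_relations_graph() -> dict:
    """JSON-LD showing sameAs, knowsAbout, subjectOf, brand, and mainEntity."""
    return {
        "@context": "https://schema.org",
        "@graph": [
            {
                "@type": "Organization",
                **ORG_REF,
                "name": "Ranktracker",
                # sameAs: other URLs that identify the same entity.
                "sameAs": [
                    "https://www.wikidata.org/wiki/QID_PLACEHOLDER",
                    "https://www.linkedin.com/company/PLACEHOLDER",
                ],
                # knowsAbout: topics the organization claims expertise in.
                "knowsAbout": ["Search engine optimization", "Keyword research", "Backlink analysis"],
                # subjectOf: a creative work about this organization (hypothetical URL).
                "subjectOf": {"@type": "Article", "url": "https://news.example.org/ranktracker-review"},
            },
            {
                # brand links a generic-sounding product name to the Organization.
                "@type": "Product",
                "name": "Rank Tracker",
                "brand": ORG_REF,
            },
            {
                # mainEntity declares what the About page is primarily about.
                "@type": "AboutPage",
                "url": f"{SITE}/about",
                "mainEntity": ORG_REF,
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(build_relations_graph(), indent=2))
```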

4. Produce Multi-Format Evidence for LLMs

LLMs trust:

✔ PDFs

✔ documentation

✔ FAQs

✔ long-form guides

✔ structured tables

because they reduce interpretive ambiguity.

5. Use High-Authority References

Cite:

- official data
- industry reports
- academic research
- standardized definitions

This positions your content as “safe to summarize.”

5. How Ranktracker Helps Prevent AI Misrepresentation

Ranktracker plays a crucial role in securing your AI identity.

Web Audit

Finds structural issues that distort machine interpretation.

Keyword Finder

Builds semantic clusters that reinforce entity clarity.

Backlink Checker & Monitor

Strengthens external consensus and reduces competitor bias.

SERP Checker

Reveals category placement and competitor adjacency.

AI Article Writer

Generates structured, factual, LLM-friendly content that reduces hallucination risk.

Ranktracker becomes the engine of factual clarity, ensuring AI models describe your brand accurately and consistently.

Final Thought: Bias Prevention Is Now Part of Brand Safety

In 2025, preventing bias and misrepresentation in AI answers is not a “nice-to-have.” It’s brand protection. It’s reputation management. It’s category positioning. It’s revenue.

AI models are rewriting how brands are understood. Your job is to make that understanding:

✔ correct

✔ consistent

✔ unbiased

✔ up-to-date

✔ machine-verifiable

When you control your entity, you control your destiny inside AI.