Intro

Search is no longer a list of links. In 2025, it is:

✔ personalized

✔ conversational

✔ predictive

✔ knowledge-driven

✔ AI-generated

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

This shift from ranking pages to generating answers has created a new category of risk:

Privacy and data protection in LLM-driven search.

Assistants built on Large Language Models (LLMs), such as ChatGPT, Gemini, Copilot, Claude, Perplexity, Mistral, and Apple Intelligence, now sit between your brand and the user. They decide:

- what information to show

- what personal data to use

- what inferences to make

- what sources to trust

- what “safe answers” look like

This introduces legal, ethical, and strategic risks for marketers.

This guide explains how LLM-driven search handles data, what privacy laws apply, how models personalize answers, and how brands can protect both users and themselves in the new search landscape.

1. Why Privacy Matters More in LLM Search Than Traditional Search

Traditional search engines:

✔ return static links

✔ use lightweight personalization

✔ rely on indexed pages

LLM-driven search:

✔ generates answers tailored to each user

✔ can infer sensitive characteristics

✔ may combine multiple data sources

✔ can hallucinate personal facts

✔ can misrepresent or reveal private details

✔ uses training data that may include personal information

This creates new privacy risks:

❌ unintended data exposure

❌ contextual inference (revealing things never said)

❌ profiling

❌ inaccurate personal information

❌ cross-platform data blending

❌ unverified claims about individuals or companies

And for brands, the legal implications are enormous.

2. The Three Types of Data LLM Search Processes

To understand the risks, you need to know what “data” means in LLM systems.

A. Training Data (Historical Learning Layer)

This includes:

✔ web crawl data

✔ public documents

✔ books

✔ articles

✔ open datasets

✔ forum posts

✔ social content

Risk: personal data may unintentionally appear in training sets.

B. Retrieval Data (Real-Time Source Layer)

Used in:

✔ RAG (Retrieval-Augmented Generation)

✔ vector search

✔ AI Overviews

✔ Perplexity Sources

✔ Copilot references

Risk: LLMs may retrieve and surface sensitive data in responses.

C. User Data (Interaction Layer)

Collected from:

✔ chat prompts

✔ search queries

✔ personalization signals

✔ user accounts

✔ location data

✔ device metadata

Risk: LLMs may personalize answers too aggressively or infer sensitive traits.

3. The Privacy Laws That Govern LLM-Driven Search (2025 Update)

AI search is regulated by a patchwork of global laws. Here are the ones marketers must understand:

1. EU AI Act (Strictest for AI Search)

Covers:

✔ AI transparency

✔ training data documentation

✔ opt-out rights

✔ personal data protection

✔ model risk classification

✔ provenance requirements

✔ accuracy and robustness requirements (relevant to hallucinations)

✔ synthetic content labeling

LLM search tools operating in the EU must meet these standards.

2. GDPR (Still the Backbone of Global Privacy)

Applies to:

✔ personal data

✔ sensitive data

✔ profiling

✔ automated decision-making

✔ right to erasure

✔ right to rectification

✔ consent requirements

Organizations that process personal data through LLMs must comply.

3. California CCPA / CPRA

Expands rights to:

✔ opt-out of data sale

✔ delete personal data

✔ restrict data sharing

✔ prevent automated decision profiling

AI search engines may fall under CPRA’s rules on “automated decisionmaking technology.”

4. UK Data Protection Act & AI Transparency Rules

Requires:

✔ meaningful explanation

✔ accountability

✔ safe AI deployment

✔ personal data minimization

5. Canada’s AIDA (Artificial Intelligence and Data Act)

A proposed law, introduced as part of Bill C-27 and not enacted. It focuses on:

✔ responsible AI

✔ privacy-by-design

✔ algorithmic fairness

6. APAC Privacy Laws (Japan, Singapore, Korea)

Emphasize:

✔ watermarking

✔ transparency

✔ consent

✔ safe data flows

4. How LLM Search Personalizes Content (And the Privacy Risk Behind It)

AI search personalization goes far beyond keyword matching.

Here’s what models use:

1. Query Context + Session Memory

LLMs store short-term context to improve relevance.

Risk: Unintentional links between unrelated queries.

2. User Profiles (Logged-In Experiences)

Platforms like Google, Microsoft, Meta may use:

✔ history

✔ preferences

✔ behavior

✔ demographics

Risk: Inferences can reveal sensitive traits.

3. Device Signals

Location, browser, OS, app context.

Risk: Location-based insights may inadvertently reveal identity.

4. Third-Party Data Integrations

Copilots for enterprise may use:

✔ CRM data

✔ emails

✔ documents

✔ internal databases

Risk: Cross-contamination between private and public data.

5. The Five Major Privacy Risks for Brands

Brands must understand how AI search can unintentionally create problems.

1. Misrepresentation of Users (Inference Risk)

LLMs may:

- assume user characteristics

- infer sensitive traits

- personalize answers inappropriately

This can create discrimination risk.

2. Exposure of Private or Sensitive Data

AI may reveal:

- outdated information

- cached data

- misinformation

- private facts from scraped datasets

Even if unintentional, the brand may be blamed.

3. Hallucinations About Individuals or Companies

LLMs may invent:

- revenue numbers

- customer counts

- founders

- employee details

- user reviews

- compliance credentials

This creates legal exposure.

4. Incorrect Attribution or Source Blending

LLMs may:

✔ mix data from multiple brands

✔ merge competitors

✔ misattribute quotes

✔ blend product features

This leads to brand confusion.

5. Data Leakage Through Prompts

Users may accidentally provide:

✔ passwords

✔ PII

✔ confidential details

✔ trade secrets

AI systems must prevent re-exposure.
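On your own side, one way to limit this is to scrub prompts before they are sent to a third-party assistant. The sketch below is illustrative only: the patterns, the placeholders, and the `redact_prompt` name are assumptions rather than part of any platform's API, and simple regex matching will miss many kinds of PII.

```python
import re

# Hypothetical patterns for illustration; real PII detection needs far broader coverage.
SECRET_RE = re.compile(r"\b(password|api[_ -]?key|token)\s*[:=]\s*\S+", re.IGNORECASE)
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 10 to 20 digits with optional spaces or hyphens (phone or card-like numbers).
NUMBER_RE = re.compile(r"\b(?:\d[ -]?){9,19}\d\b")


def redact_prompt(prompt: str) -> str:
    """Replace likely secrets and PII with placeholders before the prompt leaves your systems."""
    prompt = SECRET_RE.sub(r"\1=[REDACTED]", prompt)  # keep the label, drop the value
    prompt = EMAIL_RE.sub("[EMAIL]", prompt)
    prompt = NUMBER_RE.sub("[NUMBER]", prompt)
    return prompt


if __name__ == "__main__":
    print(redact_prompt("Mail jane.doe@example.com, password: hunter2"))
```

Running the redaction before the prompt is sent, rather than relying on the assistant to forget it, keeps the control in your hands.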

6. The Brand Protection Framework for LLM-Driven Search (DP-8)

Use this eight-pillar system to mitigate privacy risks and protect your brand.

Pillar 1 — Maintain Extremely Clean, Consistent Entity Data

Inconsistent data increases hallucination and privacy exposure.

Update:

✔ Schema

✔ Wikidata

✔ About page

✔ product descriptions

✔ author metadata

Consistency reduces risk.
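A consistency check like this can be partly automated. The following is a minimal sketch, assuming you have already gathered the same entity fields from each source by hand or by export; the source names, field names, and `find_inconsistencies` function are hypothetical.

```python
from collections import defaultdict


def find_inconsistencies(sources: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Return fields whose values differ across sources.

    The result maps each conflicting field to {normalized value: [source names]}.
    """
    seen: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for source_name, fields in sources.items():
        for field, value in fields.items():
            # Ignore differences in case and whitespace only.
            normalized = " ".join(value.split()).casefold()
            seen[field][normalized].append(source_name)
    return {field: dict(values) for field, values in seen.items() if len(values) > 1}


if __name__ == "__main__":
    # Hypothetical entity data copied from three places.
    sources = {
        "schema_markup": {"name": "Example Co", "founded": "2014"},
        "about_page": {"name": "Example Co", "founded": "2015"},
        "wikidata": {"name": "example co", "founded": "2014"},
    }
    print(find_inconsistencies(sources))
```

In this example only the `founded` field is reported, because the name differs in capitalization alone.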

Pillar 2 — Publish Accurate, Machine-Verifiable Facts

LLMs trust content that:

✔ is factual

✔ has citations

✔ uses structured summaries

✔ includes Q&A blocks

Clear facts prevent AI from improvising.
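Q&A blocks can also be published as structured data. Here is a small sketch that builds schema.org `FAQPage` markup from question and answer pairs; the `build_faq_jsonld` helper and the sample text are assumptions, and whether a given assistant reads this markup is not something this guide can confirm.

```python
import json


def build_faq_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    # Hypothetical Q&A content.
    print(build_faq_jsonld([("Do you sell user data?", "No. We do not sell user data.")]))
```

The output would go inside a `<script type="application/ld+json">` tag on the page that shows the same questions and answers.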

Pillar 3 — Avoid Publishing Unnecessary Personal Data

Never publish:

✘ internal team emails

✘ employee private info

✘ sensitive customer data

Assume that anything you publish publicly can be crawled and ingested by LLMs.

Pillar 4 — Maintain GDPR-Compliant Consent and Cookie Flows

Especially for:

✔ analytics

✔ tracking

✔ AI-driven personalization

✔ CRM integrations

Under GDPR, personal data cannot be processed, by an LLM or any other system, without a valid legal basis.
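The core idea of a consent flow can be sketched in a few lines: nothing beyond strictly necessary scripts loads until the user has opted in to that category. The script names, category names, and `scripts_to_load` function below are hypothetical, and a real deployment would usually rely on a consent management platform.

```python
# Hypothetical mapping of scripts to consent categories.
SCRIPT_CATEGORIES = {
    "analytics.js": "analytics",
    "ads-pixel.js": "marketing",
    "personalize.js": "personalization",
    "site.js": "necessary",
}


def scripts_to_load(consent: dict[str, bool]) -> list[str]:
    """Return the scripts allowed for this user's recorded consent choices."""
    return [
        script
        for script, category in SCRIPT_CATEGORIES.items()
        # Missing categories are treated as "no consent".
        if category == "necessary" or consent.get(category, False)
    ]


if __name__ == "__main__":
    print(scripts_to_load({"analytics": True}))
```

Treating a missing choice as a refusal is the design choice that matters here: the default is to load less.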

Pillar 5 — Strengthen Your Privacy Policy for AI-Era Compliance

Your policy must now include:

✔ how AI tools are used

✔ whether content feeds LLMs

✔ data retention practices

✔ user rights

✔ AI-generated personalization disclosures

Transparency reduces legal risk.

Pillar 6 — Reduce Ambiguity in Product Descriptions

Ambiguity leads to hallucinated features. Hallucinated features often include privacy-invasive claims you never made.

Be explicit about:

✔ what you collect

✔ what you don’t collect

✔ how you anonymize data

✔ retention windows

Pillar 7 — Regularly Audit AI Outputs About Your Brand

Monitor:

✔ ChatGPT

✔ Gemini

✔ Copilot

✔ Perplexity

✔ Claude

✔ Apple Intelligence

Identify:

- privacy misstatements

- invented compliance claims

- false data collection accusations

Submit corrections proactively.
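Part of this audit can be scripted once you have the answers in hand. The sketch below assumes you have already collected each assistant's answer as plain text (by hand or through whatever official access you have); the watched terms, the approved set, and the `flag_unapproved_claims` name are all illustrative assumptions.

```python
# Hypothetical list of compliance terms worth watching for.
WATCHED_TERMS = ["gdpr", "soc 2", "iso 27001", "hipaa", "pci dss"]


def flag_unapproved_claims(answers: dict[str, str], approved: set[str]) -> dict[str, list[str]]:
    """Return, per platform, the watched terms that appear but are not in the approved set."""
    flagged: dict[str, list[str]] = {}
    for platform, text in answers.items():
        lowered = text.casefold()
        hits = [term for term in WATCHED_TERMS if term in lowered and term not in approved]
        if hits:
            flagged[platform] = hits
    return flagged


if __name__ == "__main__":
    # Hypothetical answers copied from two assistants.
    answers = {
        "assistant_a": "Example Co is SOC 2 and GDPR compliant.",
        "assistant_b": "Example Co follows GDPR.",
    }
    print(flag_unapproved_claims(answers, approved={"gdpr"}))
```

Simple substring matching only points a reviewer at answers worth reading; it does not decide whether a claim is actually wrong.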

Pillar 8 — Build a “Privacy-First” SEO Architecture

Your website should:

✔ avoid overcollection

✔ minimize unnecessary scripts

✔ use server-side tracking where possible

✔ avoid leaking PII via URLs

✔ secure API endpoints

✔ protect gated content

The cleaner your data, the safer LLM summaries become.
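As one concrete example, PII often leaks through query strings that end up in logs, analytics, and crawled links. This is a minimal sketch using Python's standard `urllib.parse`; the parameter list and the `strip_sensitive_params` name are assumptions you would adapt to your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of query parameters that may carry PII.
SENSITIVE_PARAMS = {"email", "phone", "name", "token"}


def strip_sensitive_params(url: str) -> str:
    """Remove query parameters that may carry PII, keeping the rest of the URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in SENSITIVE_PARAMS
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


if __name__ == "__main__":
    print(strip_sensitive_params("https://example.com/thanks?email=jane%40example.com&plan=pro"))
```

A cleanup step like this fits best before URLs are logged or passed to analytics, while the longer-term fix is to stop putting personal data in URLs at all.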

7. The Role of Retrieval (RAG) in Privacy-Safe AI Search

RAG systems can reduce privacy risks because they:

✔ rely on live citations

✔ avoid storing sensitive data long term

✔ support source-level control

✔ allow real-time correction

✔ reduce hallucination risk

However, they can still surface outdated, inaccurate, or misinterpreted information.

Retrieval helps, but only if your content is up to date and structured.
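To see why freshness matters, consider how a retrieval step might drop stale documents before an answer is generated. This is a sketch under stated assumptions, not a description of how any named platform works: the `RetrievedDoc` type, the `filter_fresh` function, and the one-year threshold are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class RetrievedDoc:
    url: str
    text: str
    last_updated: date


def filter_fresh(docs: list[RetrievedDoc], today: date, max_age_days: int = 365) -> list[RetrievedDoc]:
    """Keep only documents updated within the last max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [doc for doc in docs if doc.last_updated >= cutoff]


if __name__ == "__main__":
    docs = [
        RetrievedDoc("https://example.com/privacy", "Current policy", date(2025, 3, 1)),
        RetrievedDoc("https://example.com/old-privacy", "Superseded policy", date(2021, 6, 1)),
    ]
    for doc in filter_fresh(docs, today=date(2025, 6, 1)):
        print(doc.url)
```

A filter like this can only work if pages carry a reliable last-updated signal, which is one more reason to keep dates and structured data current.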

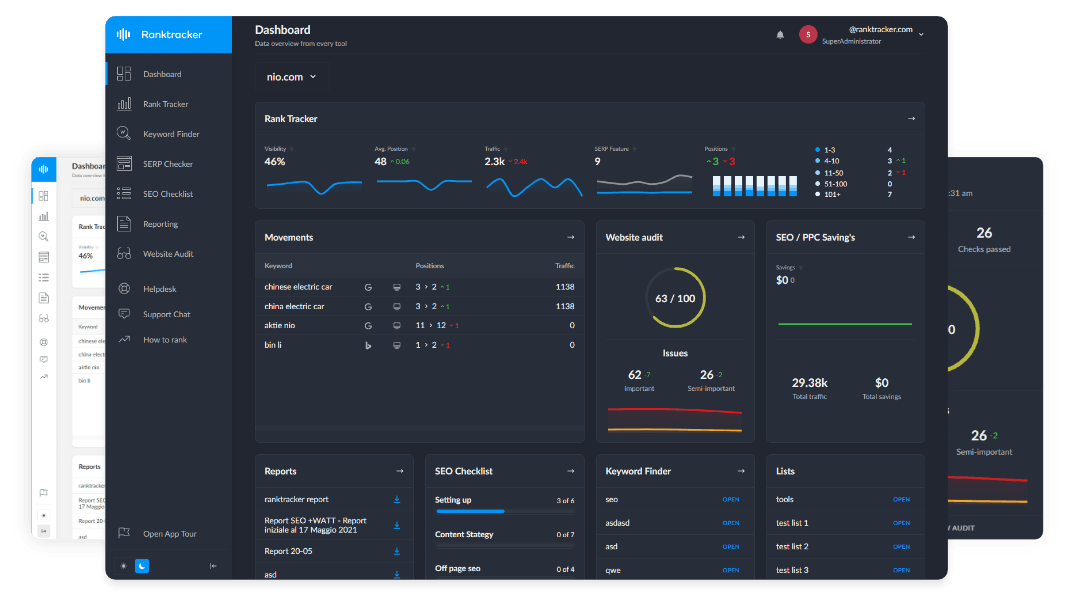

8. Ranktracker’s Role in Privacy-Aware LLM Optimization

Ranktracker supports privacy-safe, AI-friendly content through:

Web Audit

Identifies metadata exposure, orphaned pages, outdated information, and schema inconsistencies.

SERP Checker

Shows entity connections that influence AI model inference.

Backlink Checker & Monitor

Strengthens external consensus — decreasing hallucination risk.

Keyword Finder

Builds clusters that reinforce factual authority, reducing AI improvisation.

AI Article Writer

Produces structured, controlled, non-ambiguous content ideal for privacy-safe ingestion.

Ranktracker becomes your privacy-aware optimization engine.

Final Thought: Privacy Isn’t a Restriction, It’s a Competitive Advantage

In the AI era, privacy isn’t simply compliance. It’s:

✔ brand trust

✔ user safety

✔ legal protection

✔ LLM stability

✔ algorithmic favorability

✔ entity clarity

✔ citation accuracy

LLMs reward brands that are:

✔ consistent

✔ transparent

✔ privacy-safe

✔ well-structured

✔ verifiable

✔ up-to-date

The future of AI-driven search requires a new mentality:

Protect the user. Protect your data. Protect your brand — inside the model.

Do that, and AI will trust you. And when AI trusts you, users will, too.