Intro

The surge in artificial intelligence and data-driven applications has made local large language models (LLMs) and large-scale web crawlers essential tools for many businesses. These technologies power everything from advanced customer service chatbots to comprehensive market analysis tools, but they come with significant infrastructure demands. Companies seeking to deploy these systems locally must carefully consider server requirements to ensure performance, scalability, and security.

LLMs require high computational power and memory to process and generate human-like text efficiently. Meanwhile, large-scale crawlers need robust networking capabilities and storage solutions to navigate, index, and analyze vast portions of the internet. Understanding these demands is crucial for organizations aiming to leverage AI and data insights effectively.

The global AI hardware market is projected to reach $91 billion by 2027, highlighting the rapid growth in demand for specialized server components for AI applications. This growth reflects the increasing importance of robust server infrastructure in supporting AI workloads, particularly for local deployments of LLMs and web crawlers.

Core Server Components for Local LLMs

Local deployment of LLMs involves replicating models typically hosted on cloud infrastructure. This shift toward on-premises servers is driven by factors such as data privacy concerns, latency reduction, and cost management.

CPU and GPU Requirements

LLMs extensively utilize GPUs for training and inference due to their parallel processing capabilities. A server running local LLMs should have multiple high-end GPUs, such as NVIDIA A100 or H100 series, which offer thousands of CUDA cores and substantial VRAM. These GPUs accelerate matrix operations fundamental to deep learning.

In addition to GPUs, multi-core CPUs are essential for managing data preprocessing, orchestration of tasks, and supporting GPU operations. Servers typically require at least 16 to 32 CPU cores to avoid bottlenecks during intensive workloads.

Enterprises using on-premises AI infrastructure report up to a 30% reduction in latency compared to cloud deployments, enhancing real-time application performance. This improvement underlines the importance of powerful local servers equipped with appropriate CPUs and GPUs to meet demanding AI workloads.

Memory and Storage

LLMs consume large amounts of memory to store model parameters and intermediate data during processing. Servers often need 256 GB or more of RAM, depending on model size. For example, a GPT-3-sized model (about 175 billion parameters) needs roughly 350 GB for its weights alone at 16-bit precision, and it also requires high memory bandwidth to operate efficiently.
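As a rough illustration of how model size translates into memory, the sketch below estimates the space needed for the weights alone. The helper name `weight_memory_gb` is hypothetical, and the estimate ignores activations, the KV cache, and framework overhead, so real requirements will be higher.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


# GPT-3 has about 175 billion parameters.
GPT3_PARAMS = 175e9

fp16_gb = weight_memory_gb(GPT3_PARAMS, 2)  # 16-bit weights, 2 bytes each
int8_gb = weight_memory_gb(GPT3_PARAMS, 1)  # 8-bit quantized weights, 1 byte each

print(f"FP16: {fp16_gb:.0f} GB, INT8: {int8_gb:.0f} GB")
# prints "FP16: 350 GB, INT8: 175 GB"
```

Dividing the result by the VRAM available per GPU gives a lower bound on how many cards are needed just to hold the model.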

Storage is another critical factor. Fast NVMe SSDs are preferred to handle large datasets and model checkpoints quickly. Persistent storage must be scalable and reliable, as training and inference datasets can reach multiple terabytes.

Networking and Cooling

High-speed networking is vital when operating distributed LLMs across multiple servers. InfiniBand or 100 Gbps Ethernet connections reduce latency and improve data throughput between nodes.

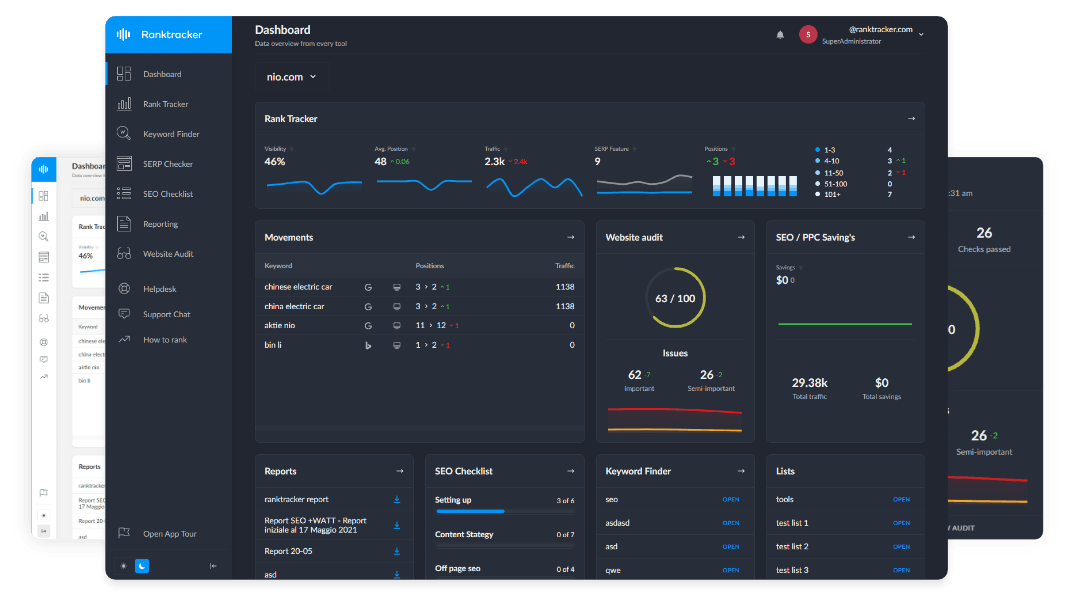

Intensive GPU operations generate considerable heat; therefore, specialized cooling solutions, including liquid cooling or advanced air cooling, are necessary to maintain hardware longevity and performance.

Security and Maintenance Considerations for Local AI Infrastructure

Security is paramount when dealing with sensitive data and critical infrastructure. Servers must incorporate robust firewalls, intrusion detection systems, and regular patch management.

Many organizations partner with trusted cybersecurity providers to safeguard their environments. For example, Nuvodia draws on its industry experience to offer tailored cybersecurity services that help protect critical server infrastructure from evolving threats.

Routine maintenance and monitoring are equally essential to ensure uptime and detect hardware failures early. Working with the computer support experts at Virtual IT can help businesses manage server health and optimize performance.

Infrastructure for Large-Scale Web Crawlers

Running large-scale crawlers requires a different set of server capabilities focused on network efficiency, storage management, and fault tolerance.

Bandwidth and Network Stability

Web crawlers continuously send and receive data from thousands or millions of web pages. This process demands servers with high-bandwidth internet connections to avoid throttling and maintain crawl speed. Redundant internet links are also advisable to ensure uptime.
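To get a feel for the bandwidth involved, a back-of-the-envelope calculation can help. The sketch below is only an estimate: the crawl rate of 1,000 pages per second and the average page size of 100 KB are assumed values chosen for illustration, and the function names are hypothetical.

```python
def required_bandwidth_mbps(pages_per_second: float, avg_page_kb: float) -> float:
    """Sustained download bandwidth in megabits per second."""
    bytes_per_second = pages_per_second * avg_page_kb * 1000
    return bytes_per_second * 8 / 1e6


def daily_volume_tb(pages_per_second: float, avg_page_kb: float) -> float:
    """Raw data downloaded per day, in terabytes (10^12 bytes)."""
    bytes_per_second = pages_per_second * avg_page_kb * 1000
    return bytes_per_second * 86_400 / 1e12


# Assumed workload, for illustration only.
PAGES_PER_SECOND = 1_000
AVG_PAGE_KB = 100

mbps = required_bandwidth_mbps(PAGES_PER_SECOND, AVG_PAGE_KB)
tb_per_day = daily_volume_tb(PAGES_PER_SECOND, AVG_PAGE_KB)
print(f"{mbps:.0f} Mbps sustained, {tb_per_day:.2f} TB per day")
```

Under these assumptions the crawler needs about 800 Mbps of sustained download capacity and pulls in several terabytes a day, before protocol overhead and retries are counted.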

Storage and Data Management

Storing the vast amount of crawled data requires scalable and distributed storage systems. Using a combination of high-capacity HDDs for raw data and SSDs for indexing and quick access is common practice.

Large-scale web crawlers can generate petabytes of data annually, so storage systems must be architected carefully to handle both capacity and performance demands.

Efficient data compression and deduplication techniques help optimize storage utilization, reducing costs and improving retrieval times.
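One simple way to combine the two ideas is to key each page body by a content hash and store a single compressed copy per unique hash. The following is a minimal in-memory sketch, not a production design: the `DedupStore` class is hypothetical, it catches only byte-for-byte duplicates, and a real crawler would back it with distributed storage.

```python
import hashlib
import zlib


class DedupStore:
    """Keeps one compressed copy of each unique page body."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}  # content digest -> compressed body
        self._urls: dict[str, str] = {}     # URL -> content digest

    def put(self, url: str, body: bytes) -> bool:
        """Record body for url. Returns True if the body was not seen before."""
        digest = hashlib.sha256(body).hexdigest()
        self._urls[url] = digest
        if digest in self._blobs:
            return False  # duplicate content, nothing new to store
        self._blobs[digest] = zlib.compress(body)
        return True

    def get(self, url: str) -> bytes:
        """Return the original, decompressed body for url."""
        return zlib.decompress(self._blobs[self._urls[url]])

    def unique_count(self) -> int:
        """Number of distinct bodies actually stored."""
        return len(self._blobs)


store = DedupStore()
page = b"<html><body>" + b"hello " * 200 + b"</body></html>"
store.put("https://example.com/a", page)
store.put("https://example.com/b", page)  # same content under another URL
print(store.unique_count())  # prints 1
```

Pages that differ only slightly (for example, by a timestamp) would still be stored twice here; detecting near-duplicates needs a similarity technique rather than an exact hash.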

Processing Power and Scalability

Crawlers parse and process web data in real time, necessitating powerful CPUs with multiple cores. Unlike LLM workloads, crawling tasks rarely depend on GPUs unless they integrate AI-driven content analysis.

Clustering servers and using container orchestration platforms such as Kubernetes enable horizontal scaling, allowing the crawler infrastructure to grow dynamically as data volume increases.
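Horizontal scaling only works if the crawl frontier can be split across workers. One common approach, shown here as an assumption rather than a description of any particular crawler, is to assign each URL to a worker by hashing its hostname, so that all pages from one site land on the same worker and per-site rate limits are easy to enforce. The function name `worker_for_url` is hypothetical.

```python
import hashlib
from urllib.parse import urlsplit


def worker_for_url(url: str, num_workers: int) -> int:
    """Map a URL to a worker index in [0, num_workers) based on its hostname."""
    host = urlsplit(url).hostname or ""
    # Use a stable hash; Python's built-in hash() is randomized per process.
    digest = hashlib.sha256(host.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_workers


urls = [
    "https://example.com/page1",
    "https://example.com/page2",
    "https://example.org/index.html",
]
for url in urls:
    print(url, "->", worker_for_url(url, num_workers=8))
```

With plain modulo hashing, changing the number of workers reassigns most hosts; a consistent-hashing scheme reduces that churn when the cluster grows or shrinks.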

Additional Factors Impacting Server Choice

Power Consumption and Cost

High-performance servers consume significant power, which impacts operational costs and facility requirements. Energy-efficient components and power management strategies can mitigate these expenses.
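A quick estimate makes the cost impact concrete. The sketch below multiplies the server's power draw by a PUE factor (power usage effectiveness, the ratio of total facility energy to IT equipment energy) and an electricity price. All input values are assumptions chosen for illustration, and the function names are hypothetical.

```python
HOURS_PER_YEAR = 8_760  # 24 hours * 365 days


def annual_energy_kwh(server_kw: float, pue: float) -> float:
    """Facility-level energy per year for a server running continuously."""
    return server_kw * pue * HOURS_PER_YEAR


def annual_energy_cost(server_kw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly electricity cost in the same currency as price_per_kwh."""
    return annual_energy_kwh(server_kw, pue) * price_per_kwh


# Assumed inputs, for illustration only.
SERVER_KW = 6.0       # average draw of one multi-GPU server
PUE = 1.5             # cooling and power-distribution overhead
PRICE_PER_KWH = 0.12  # electricity price

kwh = annual_energy_kwh(SERVER_KW, PUE)
cost = annual_energy_cost(SERVER_KW, PUE, PRICE_PER_KWH)
print(f"{kwh:.0f} kWh per year, about {cost:.0f} per year in electricity")
```

Because the PUE factor multiplies the whole bill, improvements in cooling efficiency reduce costs for every server in the facility, not just the most power-hungry ones.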

Environmental Impact

Sustainable data center practices, such as using renewable energy sources and optimizing cooling systems, are increasingly important. Organizations should consider these factors when designing their server infrastructure.

Compliance and Data Sovereignty

Running LLMs and crawlers locally may be driven by regulatory requirements concerning data sovereignty and privacy. Understanding compliance obligations is critical to selecting appropriate server locations and configurations.

The Future of Server Infrastructure for AI and Crawling

As AI models continue to grow in size and complexity, server infrastructure must evolve accordingly. Innovations such as specialized AI accelerators, improved cooling technologies, and more efficient network fabrics will shape the future landscape.

Furthermore, hybrid cloud models combining local and cloud resources offer flexibility, cost optimization, and scalability without sacrificing control.

Conclusion

Deploying local large language models and large-scale web crawlers demands a comprehensive understanding of server requirements spanning processing power, memory, storage, networking, and security. Selecting the right infrastructure ensures optimal performance and scalability, enabling businesses to harness the full potential of AI and data analytics.

By aligning technical needs with expert support and cybersecurity measures, companies can build resilient, efficient server environments. Leveraging the insights and services of experienced providers can significantly streamline this process, helping organizations meet the challenges of modern AI deployments confidently.