Intro

For 20 years, “readability” meant optimizing for humans:

- shorter sentences
- simpler language
- fewer walls of text
- clearer subheadings

But in 2025, readability has a second meaning — arguably the more important one:

Machine readability: how LLMs, generative engines, and AI search systems parse, chunk, embed, and understand your content.

Traditional readability helps visitors. Machine readability helps:

- ChatGPT Search
- Google AI Overviews
- Perplexity
- Gemini
- Copilot
- vector databases
- retrieval-augmented LLMs
- semantic search layers

If humans like your writing, that’s good. If machines understand your writing, that’s visibility.

This guide breaks down how to structure content so that AI systems can interpret it cleanly, extract meaning correctly, and reuse it confidently inside generative answers.

1. What “Machine Readability” Actually Means in 2025

Machine readability is not formatting. It is not accessibility. It is not keyword placement.

Machine readability is:

Structuring content so machines can divide it into clean chunks, embed it correctly, recognize its entities, and attach each meaning block to the right concepts.

If machine readability is strong → LLMs retrieve your content, cite you, and reinforce your brand in their internal knowledge representations.

If machine readability is weak → your content enters the vector index as noise — or doesn’t get embedded at all.

2. How LLMs Parse Your Content (Technical Overview)

Before we structure content, we need to understand how it is processed.

LLM-based search and retrieval systems typically process a page in four stages:

Stage 1 — Structural Parsing

The model identifies:

- headings
- paragraph boundaries
- lists
- tables (if present)
- code blocks
- semantic HTML tags

This determines chunk boundaries.
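As a rough illustration of structural parsing, here is a minimal sketch that pulls headings, paragraphs, and list items out of HTML using Python's standard `html.parser`. The class name `StructureParser`, the helper `parse_structure`, and the sample markup are all assumptions made for this example; real AI crawlers use their own, undocumented parsers.

```python
from html.parser import HTMLParser


class StructureParser(HTMLParser):
    """Collects (tag, text) pairs for headings, paragraphs, and list items."""

    BLOCK_TAGS = {"h1", "h2", "h3", "p", "li"}

    def __init__(self):
        super().__init__()
        self.blocks = []   # finished (tag, text) pairs, in document order
        self._tag = None   # block tag currently open, if any
        self._text = []    # text fragments collected inside that tag

    def handle_starttag(self, tag, attrs):
        if tag in self.BLOCK_TAGS:
            self._tag = tag
            self._text = []

    def handle_data(self, data):
        if self._tag is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == self._tag:
            # Collapse runs of whitespace so each block is one clean string.
            text = " ".join("".join(self._text).split())
            if text:
                self.blocks.append((tag, text))
            self._tag = None


def parse_structure(html):
    """Hypothetical helper: return the structural blocks found in an HTML string."""
    parser = StructureParser()
    parser.feed(html)
    parser.close()
    return parser.blocks


sample = (
    "<h1>Machine Readability</h1>"
    "<h2>What It Is</h2>"
    "<p>Structure that machines can parse.</p>"
    "<ul><li>headings</li><li>lists</li></ul>"
)
blocks = parse_structure(sample)
for tag, text in blocks:
    print(tag, "|", text)
```

The output is a flat, ordered list of typed blocks, which is the raw material a chunker works from.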

Stage 2 — Chunking

The content is split into segments of a bounded size (often around 200–500 tokens, though the exact size varies by system).

Chunking must:

- respect topic boundaries
- avoid mixing unrelated concepts
- stay aligned with headings

Bad formatting leads to blended chunks → inaccurate embeddings.
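To make the chunking rules concrete, here is a minimal sketch of heading-aligned chunking. It assumes the page has already been reduced to `(tag, text)` blocks, approximates tokens with whitespace-separated words, and uses a hypothetical function name `chunk_by_heading`. Production chunkers use real tokenizers and more elaborate rules.

```python
def chunk_by_heading(blocks, max_tokens=300):
    """Group (tag, text) blocks into chunks that start at a heading
    and stay within max_tokens (approximated as whitespace-separated words)."""
    chunks = []
    current = {"heading": None, "texts": [], "tokens": 0}

    def flush():
        # Emit the chunk collected so far, if it has any body text.
        if current["texts"]:
            chunks.append(
                {"heading": current["heading"], "text": " ".join(current["texts"])}
            )
        current["texts"] = []
        current["tokens"] = 0

    for tag, text in blocks:
        if tag in ("h1", "h2", "h3"):
            flush()                  # a heading always closes the previous chunk
            current["heading"] = text
            continue
        n = len(text.split())
        if current["tokens"] + n > max_tokens:
            flush()                  # split an oversized section, keeping its heading
        current["texts"].append(text)
        current["tokens"] += n
    flush()
    return chunks


page_blocks = [
    ("h2", "What Structured Data Is"),
    ("p", "Structured data describes page content."),
    ("p", "It is usually added as JSON-LD."),
    ("h2", "Why Structured Data Matters"),
    ("p", "It makes entities explicit."),
]
page_chunks = chunk_by_heading(page_blocks, max_tokens=8)
for chunk in page_chunks:
    print(chunk["heading"], "->", chunk["text"])
```

Because every heading forces a new chunk, text from two different sections never lands in the same segment, which is the property the rules above ask for.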

Stage 3 — Embedding

Each chunk becomes a vector — a multi-dimensional meaning representation.

Embedding clarity depends on:

- coherent topic focus
- distinct headings
- clean paragraphs
- clear entity references
- absence of dead space or filler
- consistent terminology

This step determines whether the model understands the content.
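The effect of topic focus on a vector can be shown with a toy example. The sketch below uses simple word-count vectors and cosine similarity as a stand-in for real embeddings. This is an assumption made purely for illustration, since actual systems use learned dense embeddings, but the dilution effect it shows is the same idea.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: a sparse word-count vector (not a learned embedding)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# A chunk that stays on one topic versus one that drifts into unrelated filler.
focused = embed("structured data describes page content for machines")
blended = embed(
    "structured data describes page content and our pricing plans start at ten dollars"
)
query = embed("what is structured data")

focused_score = cosine(query, focused)
blended_score = cosine(query, blended)
print(round(focused_score, 3), round(blended_score, 3))
```

Both chunks contain the same matching words, but the blended chunk scores lower because the unrelated text pulls its vector away from the query.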

Stage 4 — Semantic Linking

The model connects your vectors to:

- entities
- related concepts
- existing knowledge
- other content chunks
- the global knowledge graph

Strong structure = strong semantic linkages.

Weak structure = model confusion.

3. The Core Principles of Machine-Readable Content

There are seven principles shared by all AI-first content architectures.

Principle 1 — One Concept Per Section

Each H2 should represent exactly one conceptual unit.

Wrong:

“Structured Data, SEO Benefits, and Schema Types”

Correct:

“What Structured Data Is”

“Why Structured Data Matters for SEO”

“Key Schema Types for AI Systems”

Retrieval tends to work better when each section maps to a single, focused meaning vector.

Principle 2 — Hierarchy That Mirrors Semantic Boundaries

Your headings (H1 → H2 → H3) become the scaffolding for:

- chunking
- embedding
- retrieval
- entity mapping

This makes your H2/H3 structure the most important part of the entire page.

If the hierarchy is clear → embeddings follow it. If it’s sloppy → embeddings bleed across topics.
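A hierarchy check is easy to automate. The following is a minimal sketch, assuming headings have already been extracted as `(level, title)` pairs. The function name `check_heading_hierarchy` and the two rules it applies (exactly one H1, no skipped levels) are illustrative choices, not a standard.

```python
def check_heading_hierarchy(headings):
    """Return a list of problems for a sequence of (level, title) headings."""
    problems = []
    previous = 0
    h1_count = 0
    for level, title in headings:
        if level == 1:
            h1_count += 1
        # Going deeper by more than one level (H1 -> H3) leaves a gap in the outline.
        if previous and level > previous + 1:
            problems.append(f"H{level} '{title}' skips a level after H{previous}")
        previous = level
    if h1_count != 1:
        problems.append(f"expected exactly one H1, found {h1_count}")
    return problems


clean = check_heading_hierarchy(
    [(1, "Machine Readability"), (2, "Chunking"), (3, "Token Limits"), (2, "Embedding")]
)
sloppy = check_heading_hierarchy([(1, "Machine Readability"), (3, "Token Limits")])
print(clean)
print(sloppy)
```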

Principle 3 — Definition-First Writing

Every concept should begin with:

- ✔ a definition
- ✔ a one-sentence summary
- ✔ the canonical meaning

This is essential for LLMs because:

- definitions anchor embeddings
- summaries improve retrieval scoring
- canonical meaning stabilizes entity vectors

In effect, you are teaching the model what each concept means.

Principle 4 — Short, Intent-Aligned Paragraphs

Long blocks are hard for LLM pipelines to chunk cleanly, and they blur topic boundaries.

Ideal paragraph length:

- 2–4 sentences
- unified meaning
- no topic shifts

Every paragraph should produce a clean vector slice.
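The 2–4 sentence guideline can be checked mechanically. This is a minimal sketch with hypothetical helper names. It splits paragraphs on blank lines and counts sentences with a simple punctuation rule, which will miscount abbreviations and decimals, so treat the result as a hint, not a verdict.

```python
import re


def sentence_count(paragraph):
    """Rough sentence count: split after ., !, or ? when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return len([p for p in parts if p])


def flag_long_paragraphs(text, max_sentences=4):
    """Return (paragraph_index, sentence_count) for paragraphs over the limit."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        count = sentence_count(paragraph)
        if count > max_sentences:
            flagged.append((index, count))
    return flagged


draft = (
    "Chunking splits a page. It follows headings.\n\n"
    "Embeddings are vectors. They encode meaning. They depend on focus. "
    "They blur when topics mix. They are compared at query time."
)
long_paragraphs = flag_long_paragraphs(draft)
print(long_paragraphs)
```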

Principle 5 — Lists and Steps for Procedural Meaning

Lists are the clearest way to enforce:

- chunk separation
- clean embeddings
- procedural structure

AI engines often extract:

- steps
- lists
- bullet chains
- Q&A
- ordered reasoning

These are perfect retrieval units.

Principle 6 — Predictable Section Patterns

Use:

- definition
- why-it-matters
- how-it-works
- examples
- advanced use
- pitfalls
- summary

This creates a content rhythm that AI systems parse reliably.

Consistency improves retrieval scoring.

Principle 7 — Entity Consistency

Consistency = clarity.

Use the exact same:

- brand names
- product names
- concept names
- feature names
- definitions
- descriptions

LLMs downweight entities that shift terminology.
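Terminology drift is easy to miss by eye, so it helps to count it. Here is a minimal sketch, assuming you maintain your own list of a canonical term and the variants you want to avoid. The function name `find_term_variants` and the example terms are hypothetical.

```python
import re
from collections import Counter


def find_term_variants(text, canonical, variants):
    """Count whole-phrase, case-insensitive occurrences of the canonical term
    and of each listed variant."""
    counts = Counter()
    for term in [canonical, *variants]:
        pattern = r"\b" + re.escape(term) + r"\b"
        counts[term] = len(re.findall(pattern, text, flags=re.IGNORECASE))
    return counts


copy = (
    "Structured data helps machines. Schema markup is added as JSON-LD. "
    "Structured data should be validated."
)
avoid = ["schema markup", "structured markup"]
counts = find_term_variants(copy, "structured data", avoid)

# Variants that actually appear are candidates for replacement with the canonical term.
inconsistent = [term for term in avoid if counts[term] > 0]
print(dict(counts), inconsistent)
```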

4. The Machine-Readable Page Architecture (The Blueprint)

Here’s the complete architecture you should use for AI-first content.

1. H1 — Clear, Definitional, Entity-Specific Title

Examples:

- “How LLMs Crawl and Index the Web Differently from Google”
- “Schema, Entities, and Knowledge Graphs for LLM Discovery”
- “Optimizing Metadata for Vector Indexing”

This anchors the page meaning.

2. Intro — Context + Why It Matters

This must do two things:

- set user context
- set model context

Models use introductions as:

- global summaries
- topic priming
- chunking guidance

3. Section Structure — H2 = Concept, H3 = Subconcept

Ideal layout:

- H2: Concept
  - H3: Definition
  - H3: Why It Matters
  - H3: How It Works
  - H3: Examples
  - H3: Pitfalls

This produces highly consistent embedding blocks.

4. Q&A Blocks for Retrieval

LLMs love Q&A because they map directly to user queries.

Example:

Q: What makes content machine-readable?

A: Predictable structure, stable chunking, clear headings, defined concepts, and consistent entity usage.

These become “retrieval magnets” in semantic search.
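Q&A blocks can also be exposed as structured data. The sketch below builds schema.org `FAQPage` markup from question and answer pairs using only the standard `json` module. The helper name `faq_jsonld` is hypothetical, and whether a given AI system actually reads this markup is an assumption, not something the markup guarantees.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld(
    [
        (
            "What makes content machine-readable?",
            "Predictable structure, stable chunking, clear headings, "
            "defined concepts, and consistent entity usage.",
        )
    ]
)
faq_markup = json.dumps(faq, indent=2)
print(faq_markup)
```

The visible Q&A text on the page and the text in the markup should match word for word, so the same answer is reinforced in both places.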

5. Summary Sections (Optional but Powerful)

Summaries give:

- reinforcement
- clarity
- better embeddings
- higher citation rates

Models frequently extract summaries for generative answers.

5. How Specific Structural Elements Affect LLM Processing

Let’s break down each element.

H1 Tags Influence Embedding Anchors

The H1 becomes the global meaning vector.

A vague H1 = weak anchor. A precise H1 = powerful anchor.

H2 Tags Create Chunk Boundaries

LLMs treat each H2 as a major semantic unit.

Sloppy H2s → messy embeddings. Clear H2s → clean embedding partitions.

H3 Tags Create Sub-Meaning Vectors

H3s ensure each concept flows logically from the H2.

This reduces semantic ambiguity.

Paragraphs Become Vector Slices

LLMs prefer:

- short
- self-contained
- topic-focused paragraphs

One idea per paragraph = ideal.

Lists Encourage Retrieval

Lists become:

- high-priority chunks
- easy retrieval units
- fact clusters

Use more lists.

FAQs Improve Generative Inclusion

FAQs map directly to:

- AI Overview answer boxes
- Perplexity direct answers
- ChatGPT Search inline citations

FAQs are the best “inner micro-chunks” on a page.

Schema Turns Structure Into Machine Logic

Schema reinforces:

- content type
- author
- entities
- relationships

Treat schema as a baseline requirement for LLM visibility.
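As one possible way to declare content type, author, and entities, here is a minimal sketch that builds schema.org `Article` markup and wraps it in a JSON-LD script tag. The helper name `article_jsonld`, the author name, and the entity list are placeholders for illustration. The properties used (`headline`, `author`, `about`) are standard schema.org properties.

```python
import json


def article_jsonld(headline, author_name, entities):
    """Build a schema.org Article object with an author and the entities it is about."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "about": [{"@type": "Thing", "name": name} for name in entities],
    }


article = article_jsonld(
    "Optimizing Metadata for Vector Indexing",
    "Jane Doe",  # placeholder author
    ["metadata", "vector indexing"],
)

# JSON-LD is usually embedded in the page inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">' + json.dumps(article) + "</script>"
)
print(script_tag)
```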

6. Formatting Mistakes That Break Machine Readability

Avoid these — they destroy embeddings:

- ❌ Huge paragraphs

Chunking becomes unpredictable.

- ❌ Mixed concepts in one section

Vectors become noisy.

- ❌ Misleading H2s

Chunk boundaries break.

- ❌ Tables used instead of paragraphs

Tables embed poorly. Models lose context.

- ❌ Inconsistent terminology

Entities split across multiple vectors.

- ❌ Overly creative section names

LLMs prefer literal headings.

- ❌ Lack of definition-first writing

Embeddings lose anchor points.
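For the table problem in particular, one workaround is to restate each row as a self-contained sentence next to the table, so the facts survive even if the table structure is lost. The sketch below assumes a simple rectangular table. The function name `rows_to_sentences` and the sample data are made up for this example.

```python
def rows_to_sentences(headers, rows, subject_column=0):
    """Turn each table row into one sentence that repeats the column names,
    so the row still makes sense when read without the table."""
    sentences = []
    for row in rows:
        subject = row[subject_column]
        facts = [
            f"{header} is {value}"
            for index, (header, value) in enumerate(zip(headers, row))
            if index != subject_column
        ]
        sentences.append(f"{subject}: " + ", ".join(facts) + ".")
    return sentences


headers = ["Schema type", "Use case", "Format"]
rows = [
    ["FAQPage", "question and answer content", "JSON-LD"],
    ["Article", "editorial content", "JSON-LD"],
]
table_sentences = rows_to_sentences(headers, rows)
for sentence in table_sentences:
    print(sentence)
```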

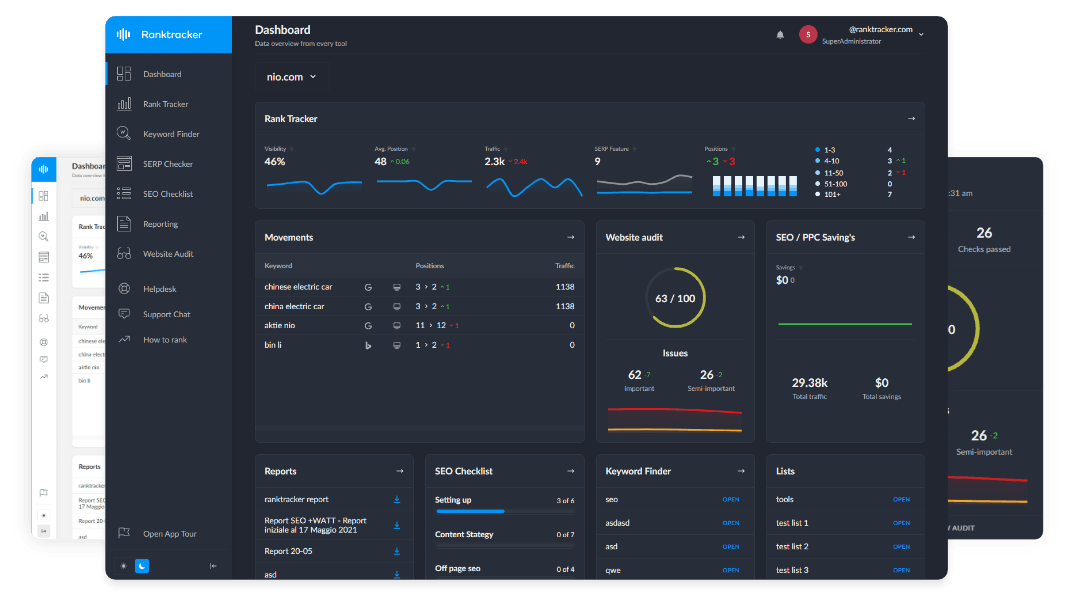

7. How Ranktracker Tools Support Machine Readability

Not promotional — functional alignment.

Web Audit

Detects structural issues:

- missing headings
- improper hierarchy
- large blocks of text
- missing schema

Keyword Finder

Identifies question-based formats that align with:

- FAQs
- LLM-ready sections
- definitional content

SERP Checker

Shows extraction patterns Google prefers — patterns that AI Overviews often copy.

AI Article Writer

Produces clean structure that machines parse predictably.

Final Thought: Machine Readability Is the New SEO Foundation

The future of visibility is not “ranking” — it is being understood.

LLMs don’t reward:

- keyword density
- clever formatting
- artistic writing

They reward:

- clarity
- structure
- definitions
- stable entities
- clean chunking
- semantic consistency

If users love your writing, that’s good. If machines understand your writing, that’s power.

Structure is the bridge between human comprehension and AI comprehension.

When your content is machine-readable, you don’t just win SEO — you win the entire AI discovery ecosystem.