Intro

In production AI systems, the integrity of training data, whether real or synthetic, is a direct determinant of model reliability, policy compliance, and behavioral consistency under operational conditions. For enterprises deploying AI in regulated or high-stakes environments, synthetic data generation must meet the same operational standards as real-world datasets: consistent performance, regulatory compliance, and fidelity to the production conditions models will encounter. Synthetic data addresses privacy constraints and data availability gaps, but only when it preserves the statistical distributions, edge-case frequencies, and behavioral patterns that production models depend on for reliable performance.

Synthetic datasets require the same validation discipline applied to other production inputs. Without structured verification, they risk encoding patterns that satisfy statistical tests in isolation while collapsing edge-case distributions or introducing spurious correlations. These flaws propagate into model behavior: they shift decision boundaries, amplify bias signals, or produce policy-violating outputs under real-world edge conditions. Validation determines whether synthetic data meets the quality threshold required for use in supervised fine-tuning pipelines and whether it can be treated as a governed, production-grade input rather than an experimental substitute.

Defining Pattern Fidelity

Pattern fidelity refers to how closely synthetic datasets reproduce the distributions, relationships, and edge behaviors found in real-world data. This extends beyond surface similarity. Enterprises must assess whether correlations, anomaly frequencies, and decision-relevant signals are preserved across scenarios.

For example, a financial risk model trained on synthetic transactions must reflect real fraud patterns, not merely replicate aggregate transaction volume. Validation frameworks compare synthetic outputs against production benchmarks using performance thresholds, consistency checks, and controlled sampling strategies. The objective is not realism for its own sake, but operational alignment with real business behavior.
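The fraud example can be made concrete with a small check. The sketch below is illustrative only: the field names `amount` and `is_fraud`, the helper `fidelity_gaps`, and the toy records are assumptions, not part of any specific validation framework. It shows a synthetic set that matches total volume and fraud rate exactly while breaking the relationship between transaction amount and fraud.

```python
import math

def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)  # undefined if a column is constant

def fidelity_gaps(real, synth, feature, label):
    """Compare aggregate volume, rare-event rate, and feature/label correlation."""
    def total(rows):
        return sum(r[feature] for r in rows)

    def rate(rows):
        return sum(r[label] for r in rows) / len(rows)

    def corr(rows):
        return pearson([r[feature] for r in rows], [r[label] for r in rows])

    return {
        "volume_gap": abs(total(real) - total(synth)),
        "event_rate_gap": abs(rate(real) - rate(synth)),
        "correlation_gap": abs(corr(real) - corr(synth)),
    }

# Toy data (hypothetical): real fraud sits on the large transaction,
# synthetic fraud sits on the smallest one.
real = [{"amount": 10, "is_fraud": 0}, {"amount": 20, "is_fraud": 0},
        {"amount": 30, "is_fraud": 0}, {"amount": 900, "is_fraud": 1}]
synth = [{"amount": 10, "is_fraud": 1}, {"amount": 20, "is_fraud": 0},
         {"amount": 30, "is_fraud": 0}, {"amount": 900, "is_fraud": 0}]

gaps = fidelity_gaps(real, synth, feature="amount", label="is_fraud")
# volume_gap and event_rate_gap are both zero, yet correlation_gap exceeds 1.0
```

The aggregate checks pass while the relationship check fails, which is the kind of gap that surface similarity hides. A real pipeline would compare many features and use production-scale samples.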

Structured Evaluation Frameworks

Synthetic datasets require the same evaluation discipline applied to machine learning models. Benchmarking must occur at two levels: the synthetic dataset itself is assessed for distributional fidelity, and the downstream model trained on it is evaluated for behavioral alignment with production performance thresholds. Accuracy, robustness, and bias metrics reveal distortions or coverage gaps introduced by synthetic inputs, showing where the training signal diverges from production-representative patterns before the model is deployed.
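One way to run the downstream-model level of this benchmark is to train the same model once on real data and once on synthetic data, then score both on a real holdout set. The sketch below assumes a deliberately trivial one-feature threshold classifier so that it stays self-contained; the function names and toy data are hypothetical, and a real evaluation would use the production model class and metrics.

```python
def fit_threshold(rows):
    """Fit a one-feature classifier: the midpoint between the two class means.

    rows is a list of (feature_value, label) pairs with labels 0 or 1.
    Assumes the positive class tends to have larger feature values.
    """
    pos = [x for x, y in rows if y == 1]
    neg = [x for x, y in rows if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2

def accuracy(threshold, rows):
    """Share of rows where 'value above threshold' agrees with the label."""
    return sum((x > threshold) == bool(y) for x, y in rows) / len(rows)

def holdout_gap(real_train, synth_train, real_holdout):
    """Accuracy lost on real holdout data when training on synthetic data."""
    baseline = accuracy(fit_threshold(real_train), real_holdout)
    candidate = accuracy(fit_threshold(synth_train), real_holdout)
    return baseline - candidate

# Toy data (hypothetical): the synthetic positives are compressed toward
# the negatives, which pulls the learned threshold too low.
real_train = [(1, 0), (2, 0), (8, 1), (9, 1)]
synth_train = [(1, 0), (2, 0), (3, 1), (4, 1)]
real_holdout = [(1, 0), (3, 0), (4, 0), (9, 1)]

gap = holdout_gap(real_train, synth_train, real_holdout)
```

A gap near zero suggests the synthetic set carries a comparable training signal for this task. A large gap points to a divergence worth investigating before any deployment decision.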

Red teaming must also be applied at the data level. Domain experts stress-test synthetic datasets through edge-case simulation and adversarial scenario generation to surface overrepresentation of rare cases, demographic coverage gaps, or attribute combinations that would not plausibly occur in production environments.

These evaluation outputs feed directly into lifecycle governance controls, determining whether synthetic datasets are approved for retraining pipelines or require regeneration before entering production systems. Synthetic data validation therefore becomes an iterative governance function repeated across training cycles, model versions, and operational changes to ensure that dataset fidelity remains aligned with evolving production requirements.
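The approve-or-regenerate decision described above can be expressed as an explicit gate. This is a minimal sketch under stated assumptions: the metric names, limits, and the `release_gate` helper are hypothetical, and real limits would come from an organization's own risk policy.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class GateResult:
    approved: bool
    failures: tuple  # names of metrics that were missing or over their limit

def release_gate(metrics, limits):
    """Approve a dataset only if every limited metric is present and within limit.

    A missing metric counts as a failure, so an incomplete evaluation
    cannot pass silently.
    """
    failures = tuple(
        name for name, limit in limits.items()
        if name not in metrics or metrics[name] > limit
    )
    return GateResult(approved=not failures, failures=failures)

# Hypothetical limits and evaluation outputs
limits = {"event_rate_gap": 0.01, "holdout_gap": 0.05}
metrics = {"event_rate_gap": 0.0, "holdout_gap": 0.5}

result = release_gate(metrics, limits)
# result.approved is False and result.failures is ("holdout_gap",),
# so this dataset would go back for regeneration
```

Recording the failed metric names alongside the decision gives each training cycle a traceable reason for approval or rejection.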

Human Oversight and Expert Review

Statistical tests evaluate distributional properties but cannot determine whether synthetic data is operationally meaningful in context. They cannot assess whether datasets reflect realistic decision environments, satisfy regulatory plausibility standards, or capture the behavioral edge cases that matter in production systems.

Domain experts are therefore embedded within the validation pipeline to assess operational plausibility, regulatory compliance, and behavioral consistency. Human-in-the-loop validation operates through structured calibration cycles in which reviewers evaluate synthetic outputs against defined quality criteria and flag distributional anomalies, compliance gaps, and plausibility failures for corrective regeneration.
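Calibration between reviewers can be quantified. The source does not name a metric, so the sketch below uses Cohen's kappa as one common choice for measuring agreement between two reviewers beyond what chance would produce. The labels and reviewer data are hypothetical.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two reviewers on the same items."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if each reviewer labeled at random with their own rates
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both reviewers used a single identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical plausibility labels for the same four synthetic records
reviewer_a = ["ok", "ok", "flag", "flag"]
reviewer_b = ["ok", "ok", "flag", "ok"]

kappa = cohens_kappa(reviewer_a, reviewer_b)

# Records where the reviewers disagree, queued for adjudication
disputed = [i for i, (a, b) in enumerate(zip(reviewer_a, reviewer_b)) if a != b]
```

Low agreement usually means the quality criteria need tightening before reviewer flags are used to trigger regeneration.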

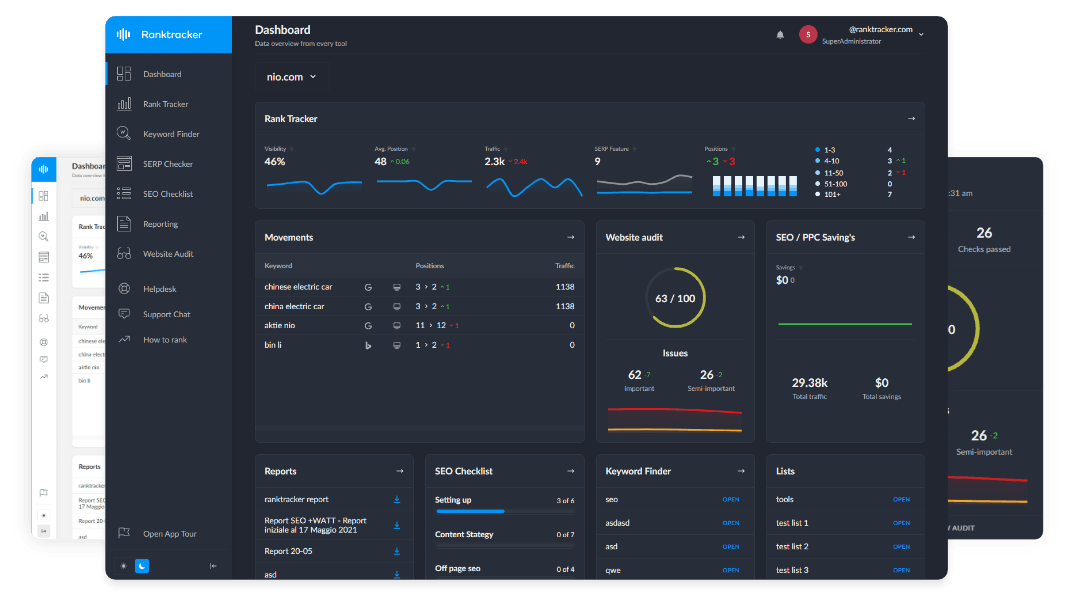

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

Create a free accountOr Sign in using your credentials

These review cycles prevent distributional drift between synthetic datasets and real operating conditions, maintaining alignment as business requirements, regulatory expectations, and real-world data patterns evolve.

When synthetic data meets validated quality thresholds, it can be integrated into supervised fine-tuning pipelines under the same governance controls applied to production data: version-controlled, annotated against defined evaluation criteria, and subject to ongoing quality assurance loops.

Governance Integration Across the Lifecycle

Validation does not conclude at the point of initial dataset approval. Synthetic data must be monitored continuously across retraining cycles and evolving business conditions through drift detection, sampling audits, and performance re-evaluation against current production benchmarks.
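Drift detection can take many forms. As one illustration, the sketch below computes the Population Stability Index (PSI) for a single numeric feature, comparing the synthetic set against a fresh production sample over shared bins. The bin edges, the sample values, and the choice of PSI itself are assumptions made for the example.

```python
import math

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index of `actual` against `expected`.

    edges is a sorted list of bin boundaries; values below the first edge
    fall in bin 0 and values at or above the last edge fall in the last bin.
    Empty bins are floored at eps to keep the logarithm defined.
    """
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v >= e for e in edges)] += 1
        return [max(c / len(values), eps) for c in counts]

    p, q = shares(expected), shares(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))

# Hypothetical values: the synthetic set approved earlier versus a
# more recent production sample of the same feature
synthetic_values = [1, 2, 3, 4]
production_values = [1, 3, 4, 5]

drift = psi(synthetic_values, production_values, edges=[2.5])
```

Identical distributions score zero and the score grows as the bins diverge. The level that should trigger a sampling audit or regeneration is a policy decision rather than a property of the metric.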

In mature AI programs, synthetic data is governed as production infrastructure, subject to version control, structured documentation, and refinement workflows tied directly to deployment monitoring and retraining cycles. These controls keep synthetic data within defined policy boundaries and risk tolerance thresholds across the full operational lifecycle, not only at the point of initial validation.
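Version control and documentation for a dataset can start with a content-addressed manifest. The sketch below is one possible shape, not a standard: the `dataset_manifest` helper, its field names, and the version string are hypothetical. It hashes a canonical JSON form of the records so that any change to the data produces a new identifier.

```python
import hashlib
import json

def dataset_manifest(rows, generator_version, metrics=None):
    """Build a manifest that ties a dataset's content hash to its provenance.

    Rows are serialized with sorted keys so that key order within a record
    does not change the hash; row order does, by design.
    """
    payload = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return {
        "sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "row_count": len(rows),
        "generator_version": generator_version,
        "metrics": dict(metrics or {}),
    }

# Hypothetical records and generator version
rows = [{"amount": 10, "is_fraud": 0}, {"amount": 900, "is_fraud": 1}]
manifest = dataset_manifest(rows, "gen-0.1", {"event_rate_gap": 0.0})
```

Storing the manifest next to the evaluation results lets a retraining run confirm that it is consuming the exact dataset version that was approved.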

Conclusion

Synthetic data is not a substitute for governance; it is a governed input class with its own validation requirements, quality thresholds, and lifecycle controls. Pattern fidelity cannot be assumed from statistical plausibility alone. It must be verified against the production conditions models will encounter.

Structured evaluation frameworks, human expert review, and continuous monitoring are the mechanisms that make synthetic data operationally reliable. They surface distributional failures before they reach training pipelines, maintain alignment as business and regulatory conditions evolve, and produce the audit trail required for responsible AI deployment.

Organizations that govern synthetic data with the same rigor applied to production data are the ones capable of scaling training pipelines without scaling risk. That is the operational standard required for enterprise AI systems.