Intro

Search is no longer defined by ranking algorithms alone. AI Overviews rewrite Google results. ChatGPT Search delivers answers without requiring a single click. Perplexity synthesizes entire industries into concise summaries. Gemini blends live retrieval with multi-modal reasoning.

In this new landscape, it no longer matters whether you rank #1 — it matters whether AI includes you at all.

This shift has created a new discipline, a successor to SEO and AIO: LLM Optimization (LLMO), the practice of shaping how Large Language Models understand, represent, retrieve, and cite your brand.

If SEO optimized for crawlers, and AIO optimized for AI-readability, LLMO optimizes for the intelligence layer running the entire discovery ecosystem.

This article defines LLMO, explains how it works, and shows how marketers can use it to dominate generative search across Google AI Overviews, ChatGPT Search, Gemini, Copilot, and Perplexity.

1. What Is LLM Optimization (LLMO)?

LLM Optimization (LLMO) is the process of improving your brand’s visibility inside Large Language Models by strengthening how they:

- understand your content
- represent your entities in embedding space
- retrieve your pages during answer generation
- select your site as a citation source
- summarize your content accurately
- compare you with competitors during reasoning
- maintain your brand across future updates

LLMO is not about “ranking.” It’s about becoming part of the AI model’s internal memory and retrieval ecosystem.

This is the new optimization layer above SEO and AIO.

2. Why LLMO Exists (And Why It’s Not Optional)

Traditional SEO optimized for:

- keywords
- backlinks
- crawlability
- content structure

Then AIO optimized for:

- machine readability
- structured data
- entity clarity
- factual consistency

But starting in 2024–2025, AI search engines — ChatGPT Search, Gemini, Perplexity — began relying primarily on model-based understanding, not just web-based signals.

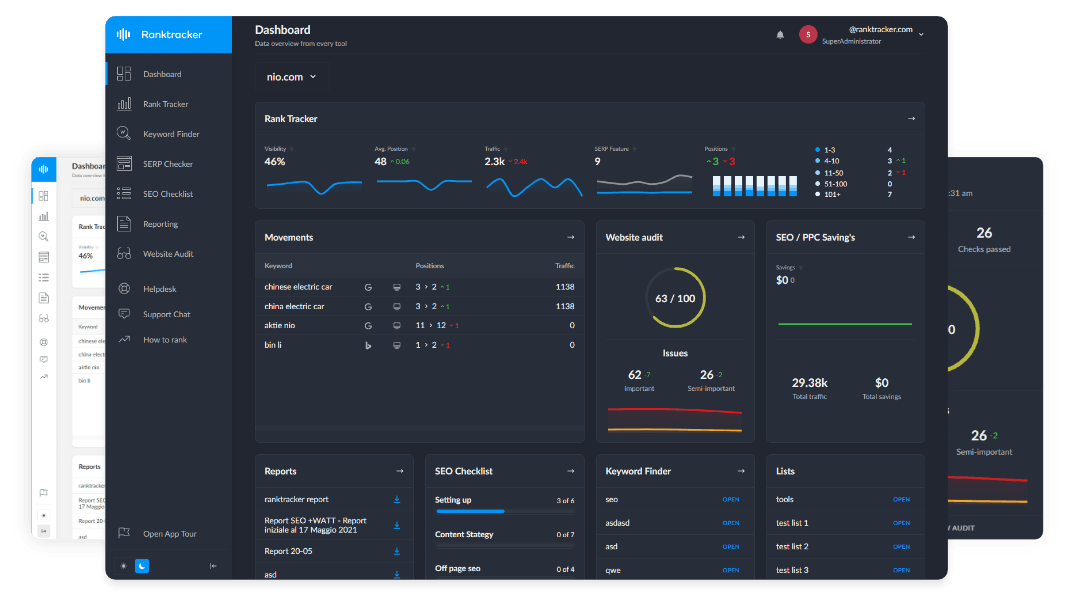

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

Create a free accountOr Sign in using your credentials

That demands a new layer:

LLMO = optimizing your brand’s presence inside AI models themselves.

Why it matters:

✔ AI search is replacing web search

✔ citations replace rankings

✔ vector similarity replaces keyword matching

✔ entities replace HTML signals

✔ embeddings replace indexation

✔ consensus replaces backlinks as the primary truth signal

✔ retrieval replaces SERPs

LLM optimization is about influencing how the models think, not just how they read.

3. The Three Pillars of LLMO

LLMO is built on three systems inside modern LLMs:

1. Internal Embedding Space (the model’s memory)

2. Retrieval Systems (the model’s “live reading” layer)

3. Generative Reasoning (how the model forms answers)

To optimize for LLMs, you must influence all three layers.

Pillar 1 — Embedding Optimization (Semantic Identity Layer)

LLMs represent text as vectors (embeddings): numerical coordinates in which related meanings sit close together.

Your brand, products, content topics, and factual claims all live inside embedding space.

You win LLM visibility when:

✔ your entity embeddings are clear

✔ your topics cluster tightly

✔ your brand sits close to relevant concepts

✔ your factual signals remain stable

✔ your backlinks reinforce semantic meaning

You lose LLM visibility when:

✘ your branding is inconsistent

✘ your facts contradict each other

✘ your site structure is confusing

✘ your topics are thin

✘ your content is ambiguous

Strengthening embeddings = strengthening AI memory of your brand.
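
To make "your brand sits close to relevant concepts" concrete, here is a minimal sketch of how closeness in embedding space is commonly measured, using cosine similarity. The three-dimensional vectors and the name `ExampleBrand` are invented for illustration; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy embeddings, hand-written for this example only.
embeddings = {
    "rank tracking": [0.9, 0.1, 0.0],
    "ExampleBrand": [0.8, 0.2, 0.1],
    "cooking recipes": [0.0, 0.1, 0.9],
}


def nearest_concepts(name, vectors):
    """Return the other entries sorted from most to least similar to `name`."""
    target = vectors[name]
    scores = [
        (other, cosine_similarity(target, vec))
        for other, vec in vectors.items()
        if other != name
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


for concept, score in nearest_concepts("ExampleBrand", embeddings):
    print(f"{concept}: {score:.3f}")
```

In this toy data the brand vector points almost the same way as "rank tracking" and almost at right angles to "cooking recipes", which is the kind of tight, unambiguous association the checklist above aims for.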

Pillar 2 — Retrieval Optimization (AI Reading Layer)

LLMs use retrieval systems to access fresh data:

- RAG (Retrieval-Augmented Generation)
- citation engines
- semantic search
- re-ranking systems
- Google’s Search+LLM hybrid
- Perplexity’s multi-source pull
- ChatGPT Search live queries

LLMO focuses on making your content:

- easy for AI to retrieve
- easy to parse
- easy to extract answers from
- easy to compare
- easy to cite

This requires:

- schema
- canonical definitions
- factual summaries
- Q&A formatting
- strong internal linking
- authoritative backlinks
- consistent topic depth
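
The retrieval step can be sketched in a few lines. The example below is a deliberately simplified stand-in: it scores passages against a query with word-count vectors and cosine similarity, whereas production systems typically use learned dense embeddings plus a re-ranker. The passages and function names are made up for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def tf_vector(text):
    """Sparse term-frequency vector: token -> count."""
    return Counter(tokenize(text))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, passages, k=2):
    """Return the k passages most similar to the query as (score, passage) pairs."""
    q = tf_vector(query)
    scored = [(cosine(q, tf_vector(p)), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Hypothetical passages standing in for chunks of web pages.
passages = [
    "LLM Optimization (LLMO) is the practice of shaping how large language models cite your brand.",
    "Our team enjoyed the company picnic last summer.",
    "What is LLMO? LLMO is optimization for large language models.",
]

for score, passage in retrieve("what is LLMO", passages):
    print(f"{score:.2f}  {passage}")
```

Even in this crude version, the passage that states the question and answers it directly scores highest, which is why Q&A formatting and definition-first summaries appear in the list above.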

Pillar 3 — Reasoning Optimization (AI Decision Layer)

This is the most misunderstood part of LLMO.

When an AI answers a question, it doesn’t just retrieve pages. It reasons:

- Are these facts consistent?
- Who is the most authoritative source?
- Which brand is mentioned across multiple trusted sites?
- Which definition matches the consensus?
- Which explanation is canonical?
- Which domain is stable, factual, and clear?

You optimize for reasoning by:

- reinforcing your definitions across multiple pages
- earning backlinks from consistent authoritative sources
- cleaning contradictory claims
- producing canonical content clusters
- being the most structured source on the topic
- establishing entity clarity everywhere

When AI reasons, your goal is to become the default answer source.
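
"Cleaning contradictory claims" is something you can audit mechanically on your own site. The sketch below assumes you have already extracted facts from your pages as `(page, attribute, value)` tuples; that extraction step, the page paths, and the values are all hypothetical.

```python
from collections import defaultdict


def find_contradictions(claims):
    """Group claims by attribute and return attributes stated with more than one value.

    Values are compared case-insensitively after trimming whitespace.
    """
    values = defaultdict(lambda: defaultdict(list))
    for page, attribute, value in claims:
        values[attribute][value.strip().lower()].append(page)
    return {
        attribute: dict(by_value)
        for attribute, by_value in values.items()
        if len(by_value) > 1
    }


# Hypothetical facts pulled from different pages of the same site.
claims = [
    ("/about", "founded", "2014"),
    ("/press", "founded", "2015"),
    ("/about", "headquarters", "Example City"),
    ("/contact", "headquarters", "example city"),
]

for attribute, by_value in find_contradictions(claims).items():
    print(attribute, by_value)
```

Here only `founded` is flagged, because the two headquarters values differ only in capitalization. Each flagged attribute points to the pages that need to be reconciled.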

4. The Difference Between SEO, AIO, GEO, and LLMO

Here is the complete hierarchy:

SEO

→ Optimize for Google’s ranking algorithms (crawlers + index)

AIO (AI Optimization)

→ Optimize for AI readability and machine comprehension

GEO (Generative Engine Optimization)

→ Optimize specifically for generative answer citation

LLMO

→ Optimize for the model’s internal memory, vector space, and reasoning system

LLMO = everything upstream of citations. It dictates:

- how you appear in embeddings
- whether you show up in RAG
- how models summarize your content
- what the AI “thinks” about your brand
- how future updates represent you

It is the deepest and most powerful optimization layer.

5. How LLMs Choose Which Websites to Cite

Citations are the #1 output of LLMO.

LLMs choose sources based on:

1. Semantic Alignment

Does the content match the query in meaning?

2. Canonical Strength

Is this a stable, authoritative explanation?

3. Factual Consensus

Do other sources confirm this information?

4. Structured Clarity

Is the content easy for AI to extract?

5. Entity Trust

Is this brand consistent across the web?

6. Backlink Confirmation

Do high-authority sites reinforce this brand/topic?

7. Freshness

Is the information up to date?

LLMO directly optimizes for all 7 factors.
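
One way to put the seven factors to work is as a self-audit rubric. The sketch below is not how any AI search engine scores sources; no vendor publishes such a formula. It simply averages your own 0-to-1 ratings for a page, with equal weights chosen arbitrarily, and names the weakest factor so you know where to start.

```python
# The seven factors from the list above, as rubric keys.
FACTORS = [
    "semantic_alignment",
    "canonical_strength",
    "factual_consensus",
    "structured_clarity",
    "entity_trust",
    "backlink_confirmation",
    "freshness",
]


def audit_page(ratings):
    """Return (average rating, weakest factor) for a dict of 0-to-1 self-ratings."""
    missing = [f for f in FACTORS if f not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    average = sum(ratings[f] for f in FACTORS) / len(FACTORS)
    weakest = min(FACTORS, key=lambda f: ratings[f])
    return average, weakest


# Hypothetical ratings for one page: strong everywhere except freshness.
ratings = {factor: 1.0 for factor in FACTORS}
ratings["freshness"] = 0.3

average, weakest = audit_page(ratings)
print(f"average={average:.2f}, weakest factor={weakest}")
```

The value of a rubric like this is comparative: rating several pages the same way shows which ones lag and on which factor.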

6. The Five-Step Framework for LLM Optimization (LLMO)

Step 1 — Canonicalize Your Core Topics

Create the clearest, most definitive explanations on the internet for your domain.

This strengthens:

- embeddings
- consensus
- semantic alignment

Ranktracker’s AI Article Writer helps generate structured, canonical pages.

Step 2 — Strengthen Entity Identity

Make your brand, authors, and products unambiguous:

- consistent naming
- Organization schema
- Author schema
- FAQ and HowTo schema
- clear definitions in the first 100 words
- stable internal linking

Ranktracker’s SERP Checker helps identify competing entity relationships.
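
As an illustration of Organization schema, the sketch below builds a schema.org `Organization` object as JSON-LD and wraps it in the script tag that pages normally embed. The brand name, URLs, and helper function names are placeholders, not real data or a required API.

```python
import json


def organization_jsonld(name, url, same_as, logo=None):
    """Build a schema.org Organization object as a plain dict."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),  # official profiles that identify the same entity
    }
    if logo:
        data["logo"] = logo
    return data


def to_script_tag(data):
    """Wrap JSON-LD in the script tag used to embed it in an HTML page."""
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# Placeholder values for illustration only.
org = organization_jsonld(
    name="ExampleBrand",
    url="https://example.com",
    same_as=["https://www.linkedin.com/company/examplebrand"],
)
print(to_script_tag(org))
```

Using the same `name` string here as in your page titles, author bios, and external profiles is what "consistent naming" means in practice.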

Step 3 — Build Deep Topical Clusters

Clusters create semantic gravity:

- AI retrieves you more
- embeddings become tighter
- reasoning favors your content
- citations become more likely

Clusters are the core of LLMO.
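
A cluster only works if its pages actually link to each other. The sketch below assumes a common hub-and-spoke layout, with one pillar page linked to and from every supporting page, and reports the links that are missing. The page paths and the `links` mapping are hypothetical; in practice you would build the mapping from a site crawl.

```python
def cluster_link_gaps(pillar, links):
    """Return missing (source, target) links between a pillar page and its cluster pages.

    `links` maps each page to the set of pages it links to.
    """
    gaps = []
    pillar_out = links.get(pillar, set())
    for page, outgoing in links.items():
        if page == pillar:
            continue
        if pillar not in outgoing:
            gaps.append((page, pillar))  # cluster page does not link up to the pillar
        if page not in pillar_out:
            gaps.append((pillar, page))  # pillar does not link down to the cluster page
    return sorted(gaps)


# Hypothetical internal-link map for an LLMO topic cluster.
links = {
    "/llmo": {"/llmo/embeddings", "/llmo/retrieval"},
    "/llmo/embeddings": {"/llmo"},
    "/llmo/retrieval": set(),
    "/llmo/reasoning": {"/llmo"},
}

for source, target in cluster_link_gaps("/llmo", links):
    print(f"missing link: {source} -> {target}")
```

For this sample map the check flags two gaps: the pillar never links to `/llmo/reasoning`, and `/llmo/retrieval` never links back to the pillar.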

Step 4 — Improve Authority Signals

Backlinks still matter, but not only for rankings.

They matter because they:

- stabilize embeddings
- confirm facts
- strengthen consensus
- elevate domain trust
- increase vector prominence

Ranktracker’s Backlink Checker and Backlink Monitor are essential here.

Step 5 — Align Content With AI Extraction Patterns

LLMs extract answers better when pages include:

- Q&A format
- short summaries
- structured bullet lists
- definition-first paragraphs
- schema markup
- factual clarity

Ranktracker’s Web Audit identifies readability issues that harm AI extraction.
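
Q&A format and schema markup can be combined. As a minimal sketch, the function below turns a list of question and answer pairs into schema.org `FAQPage` JSON-LD; the function name and the sample pair are illustrative, and the answer text should match what is visibly on the page.

```python
import json


def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Sample pair using the definition-first pattern: the answer opens with the definition.
qa_pairs = [
    (
        "What is LLMO?",
        "LLM Optimization (LLMO) is the process of improving your brand's "
        "visibility inside Large Language Models.",
    ),
]

print(json.dumps(faq_jsonld(qa_pairs), indent=2))
```

Keeping each answer short and self-contained serves both purposes at once: it reads well as an on-page summary and it can be lifted as a standalone passage.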

7. Why LLMO Is the Future of SEO

Because SEO is no longer about:

❌ keywords

❌ rankings

❌ on-page tricks

❌ link sculpting

Modern discovery is driven by:

✔ embeddings

✔ vectors

✔ reasoning

✔ retrieval

✔ consensus

✔ citation selection

✔ entity identity

✔ canonical structure

Search engines are becoming LLM-driven platforms.

Your website is no longer competing for 10 links. You’re competing for one AI answer.

LLMO positions your brand to win that answer.

Final Thought:

The Future of Visibility Belongs to Brands That Models Understand

If SEO was about helping search engines find you, and AIO was about helping AI read you, LLMO is about helping AI remember you, trust you, and choose you.

In the era of generative search:

Visibility is not a ranking — it is a representation inside AI.

LLMO is how you shape that representation.

Brands that master LLMO now will dominate the next decade of discovery.