Intro

Large Language Models are only as good as the data they learn from.

A model trained on messy, inconsistent, duplicated, contradictory, or low-quality data becomes:

- less accurate
- less trustworthy
- more prone to hallucination
- more inconsistent
- more biased
- more fragile in real-world contexts

This affects everything — from how well an LLM answers questions, to how your brand is represented inside AI systems, to whether you are selected for generative answers in Google AI Overviews, ChatGPT Search, Perplexity, Gemini, and Copilot.

In 2025, “data cleanliness” isn’t just an internal ML best practice.

It is a strategic visibility issue for every company whose content is consumed by LLMs.

If your data is clean → models are more likely to treat you as a reliable source. If your data is messy → models may downweight, ignore, or misinterpret you.

This guide explains why data cleanliness matters, how it affects model training, and how brands can use it to strengthen their presence across AI-driven discovery.

1. What “Data Cleanliness” Actually Means in LLM Training

It’s not just:

- correct spelling
- well-written paragraphs
- clean HTML

Data cleanliness for LLMs includes:

- ✔ factual consistency
- ✔ stable terminology
- ✔ consistent entity descriptions
- ✔ absence of contradictions
- ✔ low ambiguity
- ✔ structured formatting
- ✔ clean metadata
- ✔ schema accuracy
- ✔ predictable content patterns
- ✔ removal of noise
- ✔ correct chunk boundaries

In other words:

**Clean data = stable meaning.**

**Dirty data = chaotic meaning.**

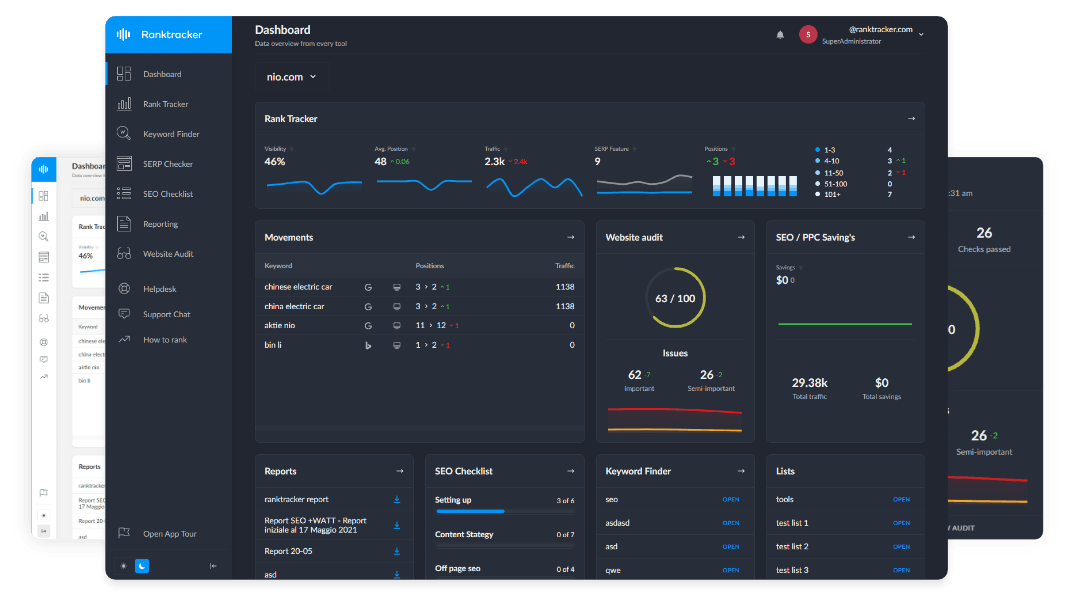

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

If meaning is inconsistent, the model forms:

- conflicting embeddings
- weak entities
- broken relationships
- incorrect assumptions

Once learned, these errors persist in the model's weights until it is retrained or fine-tuned.

2. How Dirty Data Corrupts Model Training at Every Layer

Data quality matters at four major stages of an LLM's life: three training stages, plus retrieval at answer time. Dirty data hurts all of them.

Stage 1 — Pretraining (Massive, Foundational Learning)

Dirty data at this stage leads to:

- incorrect entity associations
- misunderstood concepts
- poor definition boundaries
- hallucination-prone behavior
- misaligned world models

Once baked into the foundation model, these errors are very hard to undo.

Stage 2 — Supervised Fine-Tuning (Task-Specific Instruction Training)

Dirty training examples cause:

- poor instruction following
- ambiguous interpretations
- incorrect answer formats
- lower accuracy in Q&A tasks

If the instructions are noisy, the model generalizes the noise.
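As a rough illustration of filtering noisy instruction data before fine-tuning, the sketch below drops empty and duplicate instruction/response pairs and flags instructions that received conflicting answers. The `Example` format, the normalization rule, and the function names are hypothetical; real SFT pipelines use their own schemas and much richer quality filters.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    """One instruction-tuning pair (hypothetical minimal format)."""
    instruction: str
    response: str


def _norm(text: str) -> str:
    """Collapse whitespace so trivially different copies compare equal."""
    return " ".join(text.split())


def clean_sft_examples(examples: list[Example], min_response_chars: int = 1) -> list[Example]:
    """Drop empty, truncated, and duplicate instruction/response pairs."""
    seen: set[tuple[str, str]] = set()
    kept: list[Example] = []
    for ex in examples:
        instruction, response = _norm(ex.instruction), _norm(ex.response)
        if not instruction or len(response) < min_response_chars:
            continue  # empty instruction or missing/too-short response
        key = (instruction.lower(), response.lower())
        if key in seen:
            continue  # duplicate after whitespace and case normalization
        seen.add(key)
        kept.append(Example(instruction, response))
    return kept


def find_conflicting_instructions(examples: list[Example]) -> dict[str, set[str]]:
    """Flag instructions that appear with more than one distinct response."""
    answers: dict[str, set[str]] = defaultdict(set)
    for ex in examples:
        answers[_norm(ex.instruction).lower()].add(_norm(ex.response))
    return {inst: resp for inst, resp in answers.items() if len(resp) > 1}
```

Conflicting answers are flagged rather than deleted, since some instructions legitimately have more than one good response; a human reviewer decides which to keep.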

Stage 3 — RLHF (Reinforcement Learning from Human Feedback)

If human feedback is inconsistent or low-quality:

- reward models become confused
- harmful or incorrect outputs get reinforced
- confidence scores become misaligned
- reasoning steps become unstable

Dirty data here affects the entire chain of reasoning.

Stage 4 — RAG (Retrieval-Augmented Generation)

RAG relies on:

- clean chunks
- correct embeddings
- normalized entities

Dirty data leads to:

- incorrect retrieval
- irrelevant context
- faulty citations
- incoherent answers

Even a well-trained model produces wrong answers when the context it retrieves is wrong.

3. What Happens to LLMs Trained on Dirty Data

When a model learns from dirty data, several predictable errors appear.

1. Hallucinations Increase Dramatically

Models hallucinate more when:

- facts contradict each other
- definitions drift
- entities lack clarity
- information feels unstable

Hallucinations are often not “creative mistakes” — they’re the model attempting to interpolate between messy signals.

2. Entity Representations Become Weak

Dirty data leads to:

- ambiguous embeddings
- inconsistent entity vectors
- confused relationships
- merged or misidentified brands

This directly affects how AI search engines cite you.

3. Concepts Lose Boundaries

Models trained on messy definitions produce:

- blurry meaning
- vague answers
- misaligned context
- inconsistent reasoning

Concept drift is one of the biggest dangers.

4. Bad Information Gets Reinforced

If dirty data appears frequently, models learn:

- that it must be correct
- that it represents consensus
- that it should be prioritized

LLMs follow the statistical majority — not the truth.

5. Retrieval Quality Declines

Messy data → messy embeddings → poor retrieval → poor answers.

4. Why Data Cleanliness Matters for Brands (Not Just AI Labs)

Data cleanliness determines how LLMs:

- interpret your brand
- classify your products
- summarize your company
- cite your content
- generate answers involving you

AI engines select the sources that look:

- ✔ consistent
- ✔ trustworthy
- ✔ unambiguous
- ✔ structured
- ✔ clean

Dirty branding → poor LLM visibility.

Clean branding → strong LLM understanding.

5. The Five Types of Dirty Data That Matter Most

Dirty data takes many forms. These five are the most damaging.

1. Terminology Inconsistency

Example:

- Ranktracker → Rank Tracker → Ranktracker.com → Rank-Tracker

LLMs may treat these as different entities.

This fragments your brand's signal across several embeddings instead of reinforcing one.
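A quick way to find this kind of drift is to scan your pages for every spelling of the name. The sketch below assumes the four variants listed above and treats “Ranktracker” as the canonical form; the regular expression and function names are illustrative, so adapt the pattern to your own brand.

```python
import re
from collections import Counter

# Assumed canonical spelling and variant pattern, based on the example above.
CANONICAL = "Ranktracker"
# Matches "Ranktracker", "Rank Tracker", "Rank-Tracker", optionally followed by ".com".
VARIANT_PATTERN = re.compile(r"\bRank[\s-]?Tracker(?:\.com)?\b", re.IGNORECASE)


def find_name_variants(text: str) -> Counter:
    """Count every spelling of the brand name that appears in the text."""
    return Counter(match.group(0) for match in VARIANT_PATTERN.finditer(text))


def off_canonical(text: str) -> dict[str, int]:
    """Return only the spellings that differ from the canonical one."""
    return {variant: n for variant, n in find_name_variants(text).items() if variant != CANONICAL}
```

Matches inside URLs (such as a link to Ranktracker.com) may be legitimate, so treat the output as a review list rather than an automatic find-and-replace.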

2. Contradictory Definitions

If you define something differently across pages, LLMs lose:

- factual confidence
- meaning boundaries
- retrieval precision

This affects:

- AIO
- GEO
- LLMO
- AI citations

3. Duplicate Content

Duplicates create noise.

Noise creates:

- conflicting vectors
- ambiguous relationships
- lower confidence

Training pipelines routinely deduplicate their data, so pages that repeat themselves are likely to be dropped or downweighted.
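Near-duplicate pages can be found with a standard technique: split each page into overlapping word windows (shingles) and compare the sets with Jaccard similarity. This sketch compares every pair directly, which is fine for a few hundred pages; at larger scale, MinHash is the usual approximation. The 5-word window and 0.8 threshold are assumptions to tune, not recommended values.

```python
from itertools import combinations


def shingles(text: str, k: int = 5) -> set[tuple[str, ...]]:
    """Split text into overlapping k-word windows ("shingles")."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles two pages have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.8,
                    k: int = 5) -> list[tuple[str, str, float]]:
    """Return (url, url, score) for every page pair at or above the threshold."""
    signatures = {url: shingles(body, k) for url, body in pages.items()}
    pairs = []
    for (url_a, sig_a), (url_b, sig_b) in combinations(signatures.items(), 2):
        score = jaccard(sig_a, sig_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 3)))
    return pairs
```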

4. Missing or Ambiguous Schema

Without schema:

- entities aren’t clearly defined
- relationships aren’t explicit
- authorship is unclear
- product definitions are vague

Schema is data cleanliness for machines.

5. Poor Formatting

This includes:

- huge paragraphs
- mixed topics
- unclear headers
- broken hierarchy
- HTML errors
- messy metadata

These break chunking and corrupt embeddings.
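One common way to keep chunks meaningful is to split at headings first and only then pack paragraphs up to a size budget, so no chunk mixes two sections. The sketch assumes Markdown-style `#` headings and a character budget rather than a token count; both are simplifications, and a single paragraph longer than the budget is kept whole.

```python
import re

HEADING = re.compile(r"^#{1,6}\s")  # assumes Markdown-style headings


def chunk_by_headings(doc: str, max_chars: int = 800) -> list[str]:
    """Split a document at headings, then pack paragraphs up to max_chars.

    Each chunk repeats its section heading so it stays self-describing.
    """
    sections: list[list[str]] = []
    for line in doc.splitlines():
        if HEADING.match(line) or not sections:
            sections.append([])  # a heading (or the first line) starts a section
        sections[-1].append(line)

    chunks: list[str] = []
    for section in sections:
        heading = section[0] if HEADING.match(section[0]) else ""
        body = "\n".join(section[1:] if heading else section)
        paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
        current = ""
        for para in paragraphs:
            candidate = f"{current}\n\n{para}" if current else para
            if current and len(candidate) > max_chars:
                chunks.append(f"{heading}\n\n{current}".strip())  # budget hit: close chunk
                current = para
            else:
                current = candidate
        if current:
            chunks.append(f"{heading}\n\n{current}".strip())
    return chunks
```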

6. How Data Cleanliness Improves Training Outcomes

Clean data improves models in predictable ways:

1. Stronger Embeddings

Clean data = clean vectors.

This improves:

- semantic accuracy
- retrieval relevance
- reasoning quality

2. Better Entity Stability

Entities become:

- clear
- consistent
- durable

LLMs rely heavily on entity clarity for citations.

3. Reduced Hallucinations

Clean data eliminates:

- contradictions
- mixed signals
- unstable definitions

Less confusion → fewer hallucinations.

4. Better Alignment with Human Expectations

Clear data helps LLMs:

- follow instructions
- give predictable answers
- mirror domain expertise

5. More Accurate Generative Search Results

AI Overviews and ChatGPT Search prefer clean, consistent sources.

Clean data = higher generative inclusion.

7. How to Improve Data Cleanliness for AI Systems

Here is a practical framework for keeping your site's data clean and LLM-friendly.

Step 1 — Standardize All Definitions

Every primary concept should have:

- one definition
- one description
- one location
- one set of attributes

Definitions = embedding anchors.
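Once definitions are extracted from each page, conflicts are easy to spot by grouping them per term. In this sketch the extraction step is assumed (the input is already a per-page mapping of term to definition), and the comparison is only case- and whitespace-insensitive, so paraphrases that mean the same thing will still be flagged for a human to review.

```python
from collections import defaultdict


def normalize(text: str) -> str:
    """Case- and whitespace-insensitive form, ignoring a trailing period."""
    return " ".join(text.lower().split()).rstrip(".")


def conflicting_definitions(pages: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Find terms that are defined differently on different pages.

    pages maps a URL to {term: definition} extracted from that page.
    Returns {term: {normalized definition: [urls using it]}} for every
    term with more than one distinct definition.
    """
    by_term: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for url, definitions in pages.items():
        for term, definition in definitions.items():
            by_term[term.lower()][normalize(definition)].append(url)
    return {term: dict(variants) for term, variants in by_term.items() if len(variants) > 1}
```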

Step 2 — Create an Entity Glossary for Internal Use

Every entity needs:

- canonical name
- aliases
- primary description
- schema type
- relationships
- examples

This prevents drift.
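The glossary can live in a spreadsheet, but keeping it as code makes it usable in automated checks. The sketch below models one entry per entity with the fields listed above and resolves any alias to its canonical name; the class names are hypothetical, and `schema_type` is assumed to hold a schema.org type name.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Entity:
    """One glossary entry; fields mirror the checklist above."""
    canonical_name: str
    aliases: list[str]
    description: str
    schema_type: str  # assumed to be a schema.org type name, e.g. "Organization"
    relationships: dict[str, str] = field(default_factory=dict)
    examples: list[str] = field(default_factory=list)


class Glossary:
    """Case-insensitive lookup from any known name to its entity."""

    def __init__(self, entities: list[Entity]) -> None:
        self._by_name: dict[str, Entity] = {}
        for entity in entities:
            for name in [entity.canonical_name, *entity.aliases]:
                key = name.casefold()
                if key in self._by_name and self._by_name[key] is not entity:
                    raise ValueError(f"alias {name!r} is claimed by two entities")
                self._by_name[key] = entity

    def canonical(self, name: str) -> str | None:
        """Map any known alias to the canonical name (None if unknown)."""
        entity = self._by_name.get(name.casefold())
        return entity.canonical_name if entity else None
```

Rejecting an alias claimed by two entities is the point of the exercise: that collision is exactly the ambiguity the glossary exists to prevent.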

Step 3 — Reinforce Entities with JSON-LD

Structured data clarifies:

- identity
- relationships
- attributes

This stabilizes vectors.
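A minimal sketch of generating JSON-LD for an organization from glossary data is below. It uses real schema.org `Organization` properties (`name`, `alternateName`, `url`, `description`, `sameAs`), but the helper functions are hypothetical and any values you pass in are your own.

```python
import json


def organization_jsonld(name: str, aliases: list[str], url: str,
                        description: str, same_as: list[str]) -> str:
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "alternateName": aliases,  # the aliases from your entity glossary
        "url": url,
        "description": description,
        "sameAs": same_as,  # official profiles on other sites
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD for a page, escaping "</" so it cannot close the tag early."""
    return '<script type="application/ld+json">\n' + jsonld.replace("</", "<\\/") + "\n</script>"
```

Escaping `</` as `<\/` keeps a stray `</script>` inside a description from ending the tag early; the result is still valid JSON. Checking the output with the Schema Markup Validator before publishing is a sensible final step.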

Step 4 — Clean Up Internal Linking

Links should form:

- clean clusters
- predictable hierarchies
- strong semantic relationships

Internal linking affects how vectors group.
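To check whether links actually form clusters, you can treat the site as a graph. This sketch assumes the first URL path segment names the topic cluster (a hypothetical convention) and reports orphan pages with no inbound internal links, plus the share of links that stay inside their own cluster.

```python
from collections import defaultdict


def cluster_of(url: str) -> str:
    """Hypothetical rule: the first path segment names the topic cluster."""
    first = url.strip("/").split("/")[0]
    return first if first else "root"


def audit_links(links: dict[str, list[str]]) -> dict[str, object]:
    """Report orphan pages and the share of links that stay in-cluster.

    links maps each page URL to the internal URLs it links to.
    """
    inbound: dict[str, int] = defaultdict(int)
    same_cluster = total = 0
    for source, targets in links.items():
        for target in targets:
            if target == source:
                continue  # ignore self-links
            inbound[target] += 1
            total += 1
            same_cluster += cluster_of(source) == cluster_of(target)
    orphans = sorted(url for url in links if inbound[url] == 0)
    return {
        "orphans": orphans,
        "in_cluster_ratio": same_cluster / total if total else 0.0,
    }
```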

Step 5 — Reduce Content Redundancy

Remove:

- duplicated paragraphs
- repeated concepts
- boilerplate text

Less noise = cleaner embeddings.
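Repeated boilerplate can be found by normalizing each paragraph and counting how many pages it appears on. In this sketch, paragraphs are assumed to be separated by blank lines, and anything under eight words is skipped to avoid flagging headings and labels; both choices are assumptions to adjust.

```python
from collections import defaultdict


def repeated_paragraphs(pages: dict[str, str], min_pages: int = 2,
                        min_words: int = 8) -> dict[str, list[str]]:
    """Return {paragraph: [urls]} for paragraphs found on min_pages or more pages."""
    seen_on: dict[str, set[str]] = defaultdict(set)
    first_text: dict[str, str] = {}
    for url, body in pages.items():
        for para in body.split("\n\n"):
            para = para.strip()
            if len(para.split()) < min_words:
                continue  # skip headings, labels, and other very short blocks
            key = " ".join(para.lower().split())  # case- and whitespace-insensitive
            seen_on[key].add(url)
            first_text.setdefault(key, para)  # remember the first wording seen
    return {
        first_text[key]: sorted(urls)
        for key, urls in seen_on.items()
        if len(urls) >= min_pages
    }
```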

Step 6 — Maintain Formatting Standards

Use:

- short paragraphs
- consistent H2/H3 hierarchy
- minimal fluff
- clear boundaries
- readable code blocks for examples

LLMs depend on structure.
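These standards can be checked automatically. The sketch below flags skipped heading levels (for example, an H2 followed directly by an H4) and paragraphs over a word limit. It assumes Markdown input with blank lines between blocks and does not understand fenced code, so a `#` comment inside a code block could be misread as a heading; the 120-word limit is an arbitrary example.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.*)")


def lint_structure(markdown: str, max_words: int = 120) -> list[str]:
    """Flag skipped heading levels and overly long paragraphs."""
    problems: list[str] = []
    previous_level = 0
    for block in markdown.split("\n\n"):
        block = block.strip()
        if not block:
            continue
        match = HEADING.match(block)
        if match:
            level = len(match.group(1))
            if previous_level and level > previous_level + 1:
                problems.append(
                    f"skipped heading level: H{previous_level} -> H{level} at {match.group(2)!r}")
            previous_level = level
        elif len(block.split()) > max_words:
            problems.append(f"paragraph of {len(block.split())} words starts {block[:40]!r}")
    return problems
```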

Step 7 — Remove Conflicting Data Across Channels

Check:

- LinkedIn
- Wikipedia
- Crunchbase
- directories
- reviews

LLMs encounter these sources in training data and retrieval, so conflicting details there undercut the facts on your own site.
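A simple audit is to record the same facts from each channel and compare them. Collecting the values is assumed to be a manual or export step; the sketch only normalizes case and whitespace and reports fields whose values disagree. The channel and field names you feed it are your own.

```python
def normalize(value: str) -> str:
    """Case- and whitespace-insensitive form used for comparison."""
    return " ".join(value.lower().split())


def channel_conflicts(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Find fields whose values disagree across channels.

    profiles maps a channel name to the facts it lists, for example
    {"LinkedIn": {"founded": "...", "headquarters": "..."}}.
    Returns {field: {value: [channels]}} for fields with 2+ distinct values.
    """
    by_field: dict[str, dict[str, list[str]]] = {}
    for channel, facts in profiles.items():
        for field_name, value in facts.items():
            by_field.setdefault(field_name, {}).setdefault(normalize(value), []).append(channel)
    return {name: values for name, values in by_field.items() if len(values) > 1}
```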

8. Why AI Search Engines Reward Clean Data

Google AI Overviews, ChatGPT Search, Perplexity, and Gemini all prioritize content that is:

- structurally clean
- semantically consistent
- entity-stable
- metadata-rich
- contradiction-free

Because clean data is:

- easier to retrieve
- easier to embed
- easier to summarize
- safer to use
- less likely to trigger hallucinations

Dirty data gets filtered out.

Clean data gets reused — and cited.

Final Thought:

Data Cleanliness Isn’t a Technical Task — It’s the Foundation of AI Visibility

Dirty data confuses models. Clean data trains them.

Dirty data breaks embeddings. Clean data stabilizes them.

Dirty data reduces citations. Clean data increases them.

Dirty data sabotages your brand. Clean data strengthens your position inside the model.

In an AI-driven search world, visibility doesn’t come from keyword tricks. It comes from being:

- consistent
- structured
- factual
- unambiguous
- machine-readable

Data cleanliness isn’t maintenance — it’s competitive advantage.

The brands with the cleanest data will own the AI discovery layer for the rest of the decade.