Intro

Most SEO problems are not content issues. They are structural inconsistencies. A page can be perfectly written, well-researched, and properly formatted, yet still never rank because Google cannot crawl or index it correctly. At scale, these issues multiply fast and become nearly impossible to manage manually.

This is where modern tooling changes the game. An AI SEO website optimization platform lets teams identify, prioritize, and fix crawling and indexing issues across thousands of pages, without spending weeks in spreadsheets or waiting on developer queues.

Why Crawling and Indexing Problems Are So Common

Technical debt is a silent killer of large websites. A redirect chain was added two years ago. An XML sitemap stopped being updated after a CMS migration. Canonical tags point to the wrong URLs. None of these is urgent on its own. However, they combine to form a crawl budget nightmare that stifles rankings for entire sections of the site.

Search engines have a limited crawl budget for each domain. When that budget is wasted on redirect loops, duplicate content, or blocked resources, important pages get crawled less frequently. New content will take longer to be indexed. Changes to existing pages can take weeks to show up in search results.
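As a rough illustration of how redirect chains and loops can be flagged, the sketch below follows redirects through an in-memory map. The function name `trace_redirects`, the `redirects` dictionary, and the `max_hops` cutoff are all assumptions made for this example. A real audit would build the map from crawl data.

```python
from typing import Dict, List, Tuple

def trace_redirects(
    start: str, redirects: Dict[str, str], max_hops: int = 10
) -> Tuple[List[str], str]:
    """Follow redirects from `start` and classify the result.

    Returns (path, status), where status is:
      "ok"    - no redirect, or a single hop
      "chain" - two or more hops before reaching a final URL
      "loop"  - the path revisits a URL it has already seen
    """
    path = [start]
    seen = {start}
    current = start
    # Stop when the URL no longer redirects or the hop limit is reached.
    while current in redirects and len(path) <= max_hops:
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, "loop"
        seen.add(current)
    hops = len(path) - 1
    return path, ("chain" if hops >= 2 else "ok")

# Hypothetical redirect map: source URL -> target URL.
redirect_map = {"/a": "/b", "/b": "/c", "/x": "/y", "/y": "/x"}
for url in ("/a", "/x", "/c"):
    print(url, trace_redirects(url, redirect_map))
```

Every URL reported as a chain or a loop costs a crawler extra requests before it reaches real content, or never lets it reach any.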

The issue escalates with scale. If you have 10,000 pages on your site, you have 10,000 potential points of failure. Manual audits only identify a small proportion of them. And by the time an issue is found, reported, and fixed, the damage has already accumulated.

How AI Changes the Audit Process

Traditional SEO audits are snapshots of a point in time. You do a crawl, export a report, prioritize issues, and pass them on for fixes. Then you wait. Then you do another crawl. The cycle is designed to be slow and reactive.

AI-driven platforms replace that with a continuous monitoring model. Crawls run automatically on a schedule. Issues are detected as they emerge. Priority scores reflect traffic impact, not technical severity alone. Teams understand what to fix and why.
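To make the idea of traffic-weighted priority concrete, here is a minimal sketch. The `Issue` fields, the 1 to 5 severity scale, and the formula (severity multiplied by a logarithm of monthly clicks) are assumptions chosen for illustration, not how any particular platform scores issues.

```python
import math
from dataclasses import dataclass

@dataclass
class Issue:
    url: str
    kind: str
    severity: int        # 1 (minor) to 5 (critical); the scale is an assumption
    monthly_clicks: int  # e.g. taken from a search analytics export

def priority(issue: Issue) -> float:
    """Hypothetical score: severity scaled by the log of traffic.

    The logarithm keeps one very popular page from drowning out
    everything else while still ranking it above low-traffic pages.
    """
    return issue.severity * math.log10(1 + issue.monthly_clicks)

issues = [
    Issue("/blog/old-post", "redirect_chain", severity=4, monthly_clicks=9),
    Issue("/pricing", "missing_canonical", severity=2, monthly_clicks=9999),
]

# Highest priority first.
ranked = sorted(issues, key=priority, reverse=True)
for issue in ranked:
    print(f"{priority(issue):5.2f}  {issue.url}  ({issue.kind})")
```

In this example the lower-severity issue on `/pricing` ranks first because far more traffic depends on that page.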

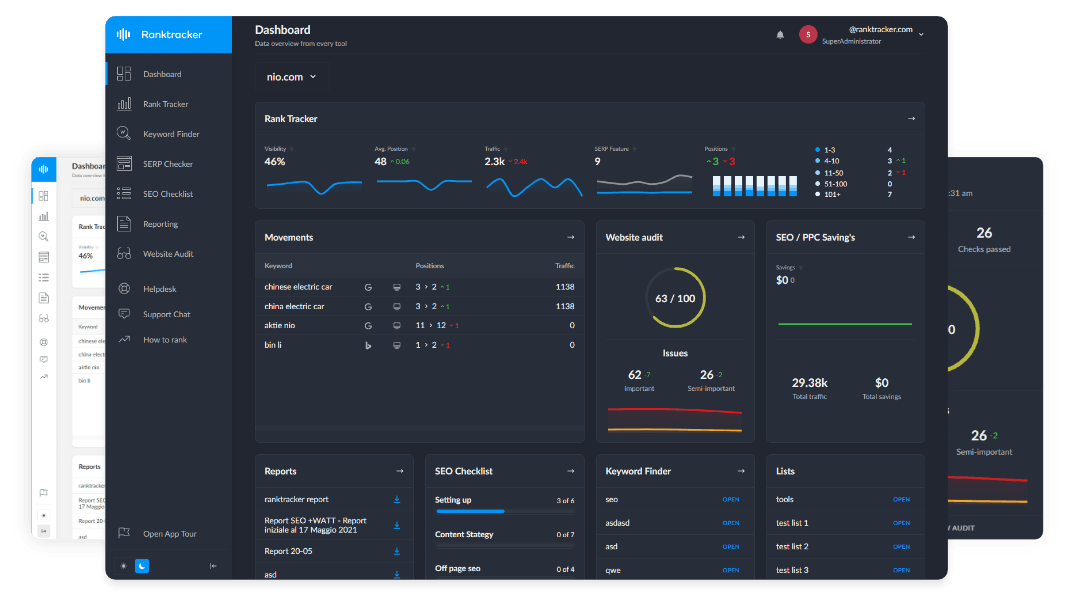

Crawl budget is particularly important for large sites that are updated regularly. The recommendations are simple in principle. You need to minimize duplicate URLs, eliminate redirect chains, and keep sitemaps clean. Applying them consistently on a large site without automated tooling is another matter.
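The sitemap recommendation above can be sketched as a filter over crawl records. The record fields (`url`, `status`, `canonical`, `noindex`) and both function names are hypothetical. The rule applied here is one common interpretation of a "clean" sitemap: list only URLs that return 200, are their own canonical, and are not marked noindex.

```python
import xml.etree.ElementTree as ET
from typing import Dict, Iterable, List

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def clean_sitemap_urls(pages: Iterable[Dict]) -> List[str]:
    """Keep only URLs worth listing in a sitemap, without duplicates."""
    keep: List[str] = []
    seen = set()
    for page in pages:
        url = page["url"]
        if page["status"] != 200:
            continue  # redirects and errors do not belong in a sitemap
        if page.get("canonical", url) != url:
            continue  # canonicalized to a different URL
        if page.get("noindex", False):
            continue  # explicitly excluded from indexing
        if url in seen:
            continue  # duplicate entry
        seen.add(url)
        keep.append(url)
    return keep

def build_sitemap(urls: Iterable[str]) -> str:
    """Serialize URLs as a minimal sitemap XML string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical crawl output.
crawl = [
    {"url": "https://example.com/a", "status": 200},
    {"url": "https://example.com/b", "status": 301},
    {"url": "https://example.com/c", "status": 200,
     "canonical": "https://example.com/a"},
    {"url": "https://example.com/d", "status": 200, "noindex": True},
    {"url": "https://example.com/a", "status": 200},
]
print(build_sitemap(clean_sitemap_urls(crawl)))
```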

Indexing Issues Are Harder to Spot Than Crawling Issues

A crawling problem is fairly easy to spot. A page returns a 404, a redirect chain is too long, a robots.txt rule is too broad. These appear in regular audit tools. Indexing issues are more nuanced. A page is crawled but not indexed: Google sees it, evaluates it, and does not add it to search results. This can happen because of thin content, duplicate signals, poor internal linking, soft 404 responses, or just low perceived quality compared to other pages.

At scale, working out why certain pages are not being indexed means correlating several data points at once: crawl data, Google Search Console signals, internal link graphs, and content quality signals. No human analyst can keep all of this in their head for ten thousand pages at once.

AI platforms correlate these signals automatically. They reveal patterns that individual audits miss. A group of pages with similar indexing issues typically shares a structural root cause, such as a template problem, a category page structure, or a faceted navigation configuration that creates too many low-value pages. Finding that root cause manually can take weeks. An AI platform can surface it in hours.
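One simple way to surface a shared structural cause is to reduce each non-indexed URL to a path pattern and count how often each pattern appears. The sketch below is a deliberately crude version of that idea. The names `path_pattern` and `cluster_unindexed` are hypothetical, and the normalization only handles numeric IDs and query strings. A real platform would combine far more signals.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple
from urllib.parse import urlsplit

def path_pattern(url: str) -> str:
    """Reduce a URL to a rough template: numeric segments become {id},
    and any query string becomes ?{params} (typical of faceted navigation)."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    normalized = ["{id}" if re.fullmatch(r"\d+", s) else s for s in segments]
    pattern = "/" + "/".join(normalized)
    if parts.query:
        pattern += "?{params}"
    return pattern

def cluster_unindexed(urls: Iterable[str]) -> List[Tuple[str, int]]:
    """Count non-indexed URLs per pattern, most frequent first."""
    return Counter(path_pattern(u) for u in urls).most_common()

# Hypothetical list of crawled-but-not-indexed URLs.
unindexed = [
    "https://example.com/products/123",
    "https://example.com/products/456",
    "https://example.com/shoes?color=red",
    "https://example.com/shoes?color=blue&size=9",
    "https://example.com/about",
]
for pattern, count in cluster_unindexed(unindexed):
    print(count, pattern)
```

A pattern that accounts for a large share of non-indexed URLs is a good candidate for a template or navigation problem rather than a page-by-page one.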

Fixing Issues at Scale Requires More Than Detection

Identifying issues is the first step. The challenge for most teams is fixing them across a large site without breaking other things. When a site-wide template is changed, thousands of pages change at once. A sitemap update should reflect current indexing priorities, not simply list URLs.

AI-powered platforms can assist with this as well. The point is not only to make changes automatically. It is to make the impact of changes predictable before they are deployed. Scenario modeling, affected page counts, and priority queuing let teams act and decide with more confidence.

The vast majority of websites audited at scale have technical SEO issues. Fixing them systematically rather than reactively is a direct competitive advantage in organic search.

The Compounding Value of Continuous Optimization

Crawling and indexing health is not a one-time project. It is an ongoing operational requirement. Sites change constantly. Think about new pages, updated templates, CMS migrations, and new market expansions. Each change introduces new potential issues. Teams that run continuous technical SEO monitoring compound their advantages over time.

Issues get caught earlier. Fixes get deployed faster. Google crawls the site more efficiently. New content indexes faster. Rankings stabilize and grow more predictably. This is the core value proposition of a modern AI SEO website optimization platform. The goal is not a better audit report. It is a fundamentally different operational model for managing technical SEO at scale.