Intro

AI detectors are getting smarter. So are the tools built to beat them. Here's what actually works in 2026: tested, measured, and explained without the marketing spin.

You pasted your content into GPTZero. It came back 97% AI-generated. You rewrote the intro, added a personal anecdote, swapped some words around. Ran it again. 94%. You spent another twenty minutes editing. 89%. At some point you realized you'd spent more time trying to make AI content look human than it would have taken to write the thing from scratch.

Sound familiar? That frustrating loop is exactly why AI humanization tools exist. But most people misunderstand what they do, how they work, and which approaches actually beat modern detectors. Let's fix that.

How AI Detectors Actually Work (The 2-Minute Version)

Before you can beat something, you need to understand how it thinks. AI detectors don't read your content and "judge" whether a human wrote it. They run statistical analysis on two primary features:

Perplexity measures how predictable your word choices are. When you write naturally, you make unexpected choices constantly. You pick the weird synonym. You start a sentence with "Look." You throw in a dash where a comma would work fine. AI models tend to favor high-probability next words, which produces text that's statistically "too smooth." Low perplexity = probably AI.

Burstiness measures variation in sentence structure and length. Human writing is erratic. You'll write a 40-word sentence loaded with clauses, then follow it with a fragment. Then a question. Then another long one. AI output tends to produce sentences within a narrow length range, with similar structural patterns throughout. Low burstiness = probably AI.
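Both features are simple to compute once you fix a definition. In this sketch, perplexity is the standard exponential of the mean negative log-probability per token. It assumes you already have the probability a language model assigned to each token, and the values here are invented. Burstiness has no single agreed formula, so the coefficient of variation of sentence length is used as an assumed proxy. Commercial detectors do not publish theirs.

```python
import math
import re
import statistics

def perplexity(token_probs):
    """exp(mean negative log-probability) of the tokens that actually appeared.
    Lower = the model found the text more predictable."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    return math.exp(-sum(math.log(p) for p in token_probs) / len(token_probs))

def sentence_lengths(text):
    """Words per sentence, splitting after '.', '!' or '?' followed by whitespace."""
    return [len(s.split()) for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def burstiness(text):
    """Coefficient of variation of sentence length (population stdev / mean).
    A simple assumed proxy, not any detector's published formula."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)

# Invented per-token probabilities; a real detector gets these from its own model.
predictable = [0.9, 0.8, 0.85, 0.9, 0.95]   # every word was expected
surprising = [0.9, 0.05, 0.3, 0.02, 0.6]    # a few unexpected word choices
print(perplexity(predictable), perplexity(surprising))

flat = ("The tool improves content quality. The tool saves writers time. "
        "The tool supports many languages. The tool works on any device.")
varied = ("I tried it. Then, after a week of feeding it every draft I had lying around "
          "and comparing the results line by line, I changed my mind completely. Why? "
          "The output was fine.")
print(burstiness(flat), burstiness(varied))
```

The flat sample's sentences run 5, 5, 5 and 6 words; the varied one swings from 1 to 25, which is the kind of erratic rhythm the paragraph above describes.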

Modern detectors like Turnitin, GPTZero, Originality.ai, and Copyleaks combine these with additional features: syntactic tree depth, discourse coherence patterns, lexical diversity curves, and paragraph-level structural signatures. Some, like Turnitin's August 2025 update, specifically target text that has been processed by humanization tools, looking for artifacts that low-quality humanizers leave behind.
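Detectors don't document how they compute "lexical diversity curves." One standard measure from linguistics is the moving-average type-token ratio (MATTR): the share of distinct words in every sliding window, averaged over the text. The sketch below assumes that definition and a crude letters-only tokenizer; the window size is an arbitrary choice.

```python
import re

def tokens(text):
    """Lowercased word tokens (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())

def type_token_ratio(words):
    """Distinct words divided by total words."""
    return len(set(words)) / len(words) if words else 0.0

def lexical_diversity_curve(text, window=50):
    """Type-token ratio of every consecutive `window`-word span."""
    words = tokens(text)
    if len(words) <= window:
        return [type_token_ratio(words)]
    return [type_token_ratio(words[i:i + window])
            for i in range(len(words) - window + 1)]

def mattr(text, window=50):
    """Moving-average type-token ratio: the mean of the curve above."""
    curve = lexical_diversity_curve(text, window)
    return sum(curve) / len(curve)

repetitive = "the cat sat on the mat " * 20
varied = "alpha bravo charlie delta echo foxtrot golf hotel india juliet kilo lima"
print(mattr(repetitive, window=10), mattr(varied, window=10))
```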

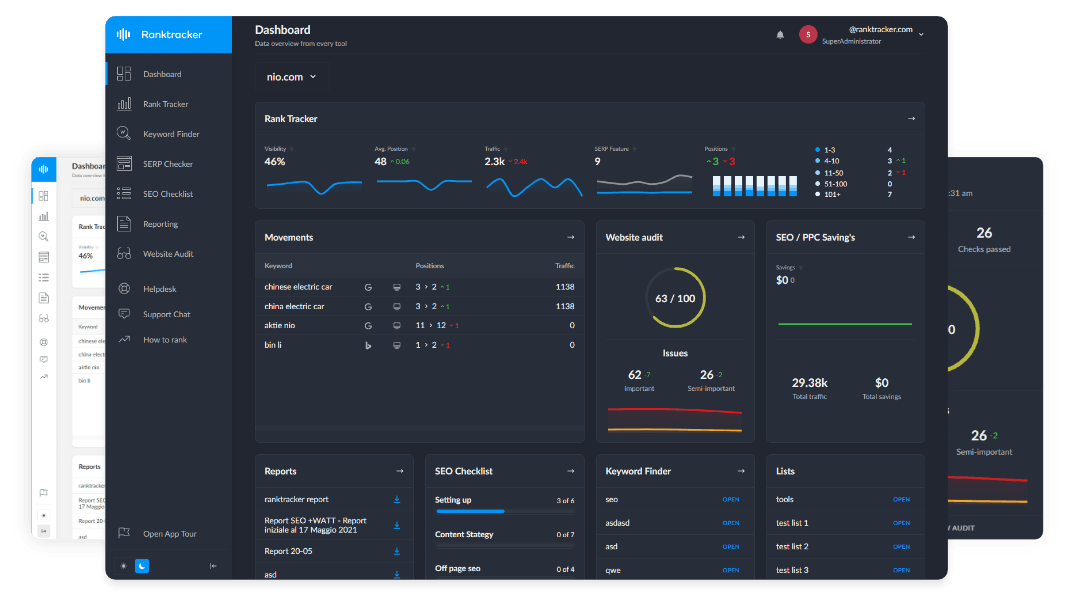

The key insight: detectors don't analyze what you said. They analyze how you said it. Two articles making the exact same argument can score completely differently depending on their statistical profiles.

Why Manual Editing Doesn't Work (And the Data That Proves It)

The instinct most people have is to manually edit AI content until it passes. Add some personality. Throw in a typo. Change some words. This approach fails, and research explains why.

The Perkins et al. (2024) study tested 114 text samples against seven popular AI detectors. On unaltered AI text, accuracy was 39.5%. When basic adversarial techniques were applied (manual edits, paraphrasing, word swapping), accuracy dropped to 17.4%. That sounds great until you realize the false positive rate on human-written text was 15%. The edits weren't reliably fooling the detectors; the detectors were simply unreliable in both directions. Some edited AI text still got caught. Some human text got flagged. The edits didn't systematically solve the problem. They just added noise.
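The study reports two different numbers, and they come from different pools of text: the catch rate is measured only on AI-written samples, while the false-positive rate is measured only on human-written ones. A minimal sketch of that bookkeeping, using invented counts rather than the study's data:

```python
def detection_rates(results):
    """results: (is_ai, flagged_as_ai) pairs from running a detector on labeled texts.
    Returns (share of AI texts caught, share of human texts wrongly flagged)."""
    ai_flags = [flagged for is_ai, flagged in results if is_ai]
    human_flags = [flagged for is_ai, flagged in results if not is_ai]
    catch_rate = sum(ai_flags) / len(ai_flags) if ai_flags else 0.0
    false_positive_rate = sum(human_flags) / len(human_flags) if human_flags else 0.0
    return catch_rate, false_positive_rate

# Invented counts: 10 AI texts (4 caught) and 20 human texts (3 wrongly flagged).
sample = ([(True, True)] * 4 + [(True, False)] * 6
          + [(False, True)] * 3 + [(False, False)] * 17)
print(detection_rates(sample))
```

A detector can score badly on both numbers at once, which is what "unreliable in both directions" means in practice.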

Here's why. When you manually edit AI content, you're changing surface features: specific words, maybe sentence order, adding a phrase here and there. But the underlying statistical distributions (the perplexity profile across the whole document, the burstiness pattern, the structural signatures) remain largely intact. You'd need to rewrite 60-80% of the text to meaningfully shift these distributions. At that point, you've essentially written it yourself.
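The claim that word-level edits leave the structural profile intact is easy to check for one of those features. In this sketch, a hypothetical one-for-one synonym table (`SWAPS`) stands in for manual word swapping. The sentence-length profile, and therefore any burstiness score built on it, comes out identical. It says nothing about perplexity, which would need a language model to measure.

```python
import re

# Hypothetical one-for-one synonym table standing in for manual word swaps.
SWAPS = {"utilize": "use", "numerous": "many", "demonstrate": "show", "significant": "major"}

def swap_words(text):
    """Replace each listed word with its synonym (only exact, lowercase matches)."""
    return re.sub(r"[A-Za-z]+", lambda m: SWAPS.get(m.group(0), m.group(0)), text)

def sentence_lengths(text):
    """Words per sentence, splitting after '.', '!' or '?' followed by whitespace."""
    return [len(s.split()) for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

draft = ("Teams utilize numerous tools to demonstrate progress. "
         "These tools offer significant benefits for planning. "
         "Managers utilize them to demonstrate results.")
edited = swap_words(draft)
print(edited)
print(sentence_lengths(draft), sentence_lengths(edited))
```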

Paraphrasing tools have the same limitation. They swap words systematically but preserve sentence structure and paragraph rhythm. The RAID benchmark from the University of Pennsylvania (billed as the largest benchmark of its kind, covering 6 million+ text samples) confirmed that paraphrasing provides inconsistent protection. Sometimes it works. Often it doesn't. And you can't predict which outcome you'll get.

What AI Humanization Actually Does (It's Not Paraphrasing)

There's a fundamental difference between paraphrasing and humanization, and confusing the two is why people get frustrated when "humanized" content still gets flagged.

A paraphraser takes your text and rephrases it. Different words, similar structure. The statistical fingerprint changes minimally. Think of it as putting a different shirt on the same person. The face is still recognizable.

An AI humanizer restructures the text at the statistical pattern level. It adjusts the actual perplexity and burstiness distributions to match profiles typical of human-written content. The meaning and arguments stay intact, but the mathematical signature that detectors measure gets fundamentally altered. This is more like changing the person's gait, posture, and mannerisms. Not just their clothes.

Tools like UndetectedGPT work on this deeper level. They don't just swap "utilize" for "use" and call it a day. They restructure how predictable each section of text is, introduce natural variation in sentence rhythm, and adjust the kind of structural patterns that detectors flag. The output reads naturally because it statistically resembles natural writing.

This matters because modern detectors have gotten wise to surface-level tricks. Turnitin's 2025 bypasser detection update specifically targets the artifacts that cheap humanizers leave behind: unnatural synonym substitution patterns and preserved deep structure beneath changed surface words. A tool that only changes the surface will get caught by these newer detection methods. A tool that changes the underlying statistics is far harder to flag, because there's little anomalous left for the detector to find.

Step-by-Step: How to Humanize AI Content Effectively

Here's the workflow that consistently produces content scoring as human-written across multiple detectors.

Step 1: Generate Your Base Content

Use whatever AI tool you prefer (ChatGPT, Claude, Gemini, Llama). Focus on getting the information, structure, and arguments right. Don't worry about "sounding human" at this stage. Let the AI do what it's good at: producing comprehensive, well-organized content quickly.

Pro tip: Give the AI a specific angle, not just a topic. "Write about AI detection" produces generic content. "Explain why AI detection false positives are a bigger problem than most people realize, with specific research citations" produces something with actual substance.

Step 2: Add What AI Can't

Before humanizing, add elements that only you can provide:

- Original data or observations. Did you test something yourself? Include the results. Real numbers from real tests are hard to fake convincingly, and no AI model can generate them for you.

- Specific experience. "In our testing across 50 samples..." beats "many users have found that..." every time.

- Genuine opinions. AI hedges. Humans take positions. If you think a tool is overpriced, say so. If a method doesn't work, say that.

- Current references. AI training data has a cutoff. Adding references to recent events, studies, or product updates signals freshness that AI can't replicate.

This step isn't just about beating detectors. It's about making your content actually valuable. Humanization tools optimize the statistical profile, but they can't inject expertise that isn't there.

Step 3: Run Through a Humanization Tool

This is where you beat AI detectors systematically rather than guessing with manual edits. Paste your edited draft and let the tool restructure the statistical patterns. The process takes seconds, not minutes. What comes out should read naturally, maintain your meaning, and score as human-written across major detectors.

Step 4: Verify Across Multiple Detectors

Don't just check one detector. Your content might encounter GPTZero, Originality.ai, Copyleaks, or Turnitin depending on the context. Run your humanized content through at least two or three. If it passes all of them, you're good. If one flags it, humanize again or adjust the flagged section manually.
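If you check several detectors, it helps to record the scores side by side and apply the same pass/fail rule to each. The sketch below does that with manually entered, made-up scores. It calls no detector API, and both the 0.5 threshold and the normalization to a 0-1 AI probability are assumptions, since each tool reports results in its own format.

```python
from dataclasses import dataclass

@dataclass
class DetectorResult:
    name: str
    ai_probability: float  # 0.0-1.0, normalized from whatever the tool reports

def flagged_detectors(results, threshold=0.5):
    """Names of detectors whose AI probability meets or exceeds `threshold`."""
    return [r.name for r in results if r.ai_probability >= threshold]

# Made-up scores for illustration; enter the numbers each tool actually shows you.
results = [
    DetectorResult("GPTZero", 0.12),
    DetectorResult("Originality.ai", 0.64),
    DetectorResult("Copyleaks", 0.08),
]
flags = flagged_detectors(results)
if flags:
    print("Revisit the flagged sections; flagged by:", ", ".join(flags))
else:
    print("Passes all checked detectors")
```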

Step 5: Final Human Read

Read it once more yourself. Not for detection purposes, but for quality. Does it flow? Does it make sense? Does it sound like something you'd actually say? Humanization tools are sophisticated, but a quick human review catches the occasional awkward phrasing that any automated tool might produce.

What the Research Says About Humanization Effectiveness

Let's look at this from the evidence side, not the marketing side.

The Weber-Wulff et al. (2023) study, published in the International Journal for Educational Integrity, tested 14 AI detection tools against various types of content. All 14 scored below 80% accuracy. When paraphrasing was involved, accuracy dropped further. The study noted that "the available detection tools are neither accurate nor reliable."

The RAID benchmark (2024) went bigger: 6 million+ AI-generated texts, 11 models, 8 domains, 11 adversarial attack types. Detectors trained on one model's output were "mostly useless" against other models. And most detectors became "completely ineffective" when false positive rates were constrained below 0.5%.
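Why does a low false-positive constraint hurt so much? When human and AI score distributions overlap, the only way to flag fewer human texts is to raise the threshold, which also lets more AI text through. The sketch below measures the detection rate at a fixed maximum false-positive rate, the kind of constrained evaluation the paragraph describes. The scores are invented, and `tpr_at_fpr` is a hypothetical helper, not RAID's code.

```python
import math

def tpr_at_fpr(human_scores, ai_scores, max_fpr):
    """Share of AI texts flagged when the threshold is set so that at most
    `max_fpr` of human texts are flagged. Higher score = more likely AI;
    a text is flagged when its score is strictly above the threshold."""
    human = sorted(human_scores, reverse=True)
    # Number of human texts we may flag; the tiny epsilon absorbs float error.
    allowed = math.floor(max_fpr * len(human) + 1e-9)
    # Threshold = score of the highest-ranked human text we may NOT flag.
    threshold = human[allowed] if allowed < len(human) else -math.inf
    return sum(score > threshold for score in ai_scores) / len(ai_scores)

# Invented, overlapping score distributions (10 human texts, 10 AI texts).
human = [0.05, 0.10, 0.15, 0.20, 0.30, 0.35, 0.40, 0.55, 0.70, 0.90]
ai = [0.30, 0.45, 0.60, 0.65, 0.75, 0.80, 0.85, 0.92, 0.95, 0.99]
for fpr in (0.2, 0.1, 0.0):
    print(f"max FPR {fpr:.0%}: detects {tpr_at_fpr(human, ai, fpr):.0%} of AI texts")
```

With these made-up numbers, detection falls from 80% to 30% as the allowed false-positive rate shrinks from 20% to zero.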

What these studies consistently show is that AI detection has a ceiling, and that ceiling is lower than the marketing materials claim. Sophisticated humanization works with that ceiling rather than against it. By adjusting text to fall within the statistical range where detectors can't confidently distinguish AI from human, humanization tools exploit a fundamental limitation that no amount of detector improvement can fully resolve.

That's not a vulnerability that will be patched. It's a mathematical reality. As language models produce increasingly human-like text, the overlap between "AI statistical profile" and "human statistical profile" grows. Humanization tools simply accelerate that convergence for your specific content.

AI Detection in 2026: What's Changed

The detection landscape has shifted meaningfully since 2024. Here's what matters:

Turnitin added AI bypasser detection in August 2025, specifically targeting text processed by humanization tools. It also introduced AI paraphrasing detection for word spinners. Both are English-only. Independent testing puts its accuracy on modified AI content at 20-63%, a meaningful gap from the 98% Turnitin claims.

GPTZero launched Source Finder, which checks whether cited sources actually exist. This catches a different problem: AI hallucinating fake citations. They also claim 98.6% accuracy against ChatGPT's reasoning models, though this hasn't been independently verified.

Originality.ai pushed major model updates in September 2025 and expanded to 30 languages. They take a responsive retraining approach: when new LLMs launch, they test existing models and retrain only if needed.

Copyleaks expanded to 30+ languages and added AI image detection.

The trend that matters most: detection is getting more sophisticated, but so is humanization. The tools that worked two years ago by simple synonym swapping no longer cut it. The tools that work now operate at the statistical level, and that approach remains effective because it addresses the fundamental mechanism detectors use, not just their current implementation.

Common Mistakes That Get People Caught

Anyone who has watched this space closely for a while will recognize the patterns. Here's what doesn't work:

Using a paraphraser and calling it humanization. QuillBot, Spinbot, and similar tools change words but not statistical patterns. Modern detectors see right through them, especially Turnitin's 2025 bypasser detection.

Editing only the introduction and conclusion. Detectors analyze the entire document. If your middle 1,500 words have a flat perplexity profile while the intro and outro don't, that inconsistency is itself a signal.

Adding random typos or grammatical errors. This is a persistent myth. Detectors don't look for perfect grammar as a signal. They analyze statistical distributions across the whole text. A handful of typos barely moves the perplexity profile of a whole document. It just makes your content look sloppy.

Running content through multiple different paraphrasers sequentially. This often produces worse results, not better. Each pass degrades readability while the core statistical signature persists. You end up with text that's both flagged by detectors and unpleasant to read.

Ignoring the content itself. Even if you beat every detector, generic content without original insights, real data, or genuine expertise won't rank, won't engage readers, and won't convert. Humanization is the final polish, not a substitute for substance.
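The point about editing only the introduction and conclusion can be illustrated by computing a score per section rather than once for the whole document. Perplexity needs a language model, so this hypothetical `sectional_burstiness` helper uses sentence-length variation per block of sentences as a stand-in; the block size of four sentences is arbitrary. A flat middle section shows up as a dip in the profile.

```python
import re
import statistics

def sentence_lengths(text):
    """Words per sentence, splitting after '.', '!' or '?' followed by whitespace."""
    return [len(s.split()) for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def sectional_burstiness(text, sentences_per_section=4):
    """Sentence-length coefficient of variation for each consecutive block of sentences."""
    lengths = sentence_lengths(text)
    profile = []
    for start in range(0, len(lengths), sentences_per_section):
        block = lengths[start:start + sentences_per_section]
        if len(block) >= 2:  # variation is undefined for a single sentence
            profile.append(statistics.pstdev(block) / statistics.mean(block))
    return profile

intro = ("Short one. This sentence is quite a bit longer than the first one was. "
         "Why? Because variety matters here.")
middle = ("The product has many useful features. The product has a clean interface. "
          "The product has strong security options. The product has fast customer support.")
outro = ("Honestly? I would still pick it again tomorrow without thinking about it twice. "
         "Try it. You might agree with me.")
profile = sectional_burstiness(" ".join([intro, middle, outro]))
print([round(v, 2) for v in profile])
```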

Who Benefits from AI Humanization

Let's be practical about this.

Content marketers and SEO professionals: If you're using AI to scale content production, humanization is essentially insurance. Google's algorithms increasingly reward content that demonstrates E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). Content that reads like AI output (even if Google doesn't explicitly penalize it) tends to underperform on engagement metrics that indirectly affect rankings. Humanization solves this systematically.

Students and academics: AI detectors are notoriously unreliable, especially for non-native English speakers. The Stanford study (Liang et al., 2023) found an average false-positive rate of about 61% when seven popular detectors assessed TOEFL essays written by non-native English speakers. Students are getting falsely flagged for content they actually wrote themselves. Running your writing through a humanizer protects you against a flawed system that regularly gets it wrong. It's a smart layer of protection, the same way you'd proofread before submitting or use Grammarly to catch errors.

Professional writers using AI for research and drafts: If AI helps you outline and draft, but the ideas, expertise, and final voice are yours, humanization ensures the tool-assisted portions of your workflow don't create detection artifacts in the finished product. This is the equivalent of making sure your camera settings don't distort the photo you actually took.

Casual bloggers or social media posters: You probably don't need humanization. Most social platforms don't run AI detection, and the casual tone of blog posts and social content already naturally diverges from AI patterns.

The Bottom Line

AI detection and AI humanization are locked in an arms race that neither side will definitively win. Detectors get smarter. Humanization tools adapt. The statistical gap between AI and human writing narrows with every model generation.

What works in 2026 is clear: surface-level editing and basic paraphrasing are no longer enough. Effective humanization operates at the statistical level, adjusting the perplexity and burstiness distributions that detectors actually measure. Tools like UndetectedGPT do this systematically, producing results that pass across multiple major detectors.

But no tool replaces substance. The best approach combines AI efficiency for drafting, human expertise for insights and strategy, and humanization for the final statistical polish. That workflow produces content that's fast to create, genuinely valuable, and indistinguishable from human-written text by any current detection method.

The detectors will keep improving. The humanizers will keep adapting. The content that wins is the content that's actually worth reading, regardless of how it was produced.