Intro

Google search rankings have played a vital role in identifying the top websites in any niche. Being displayed among the top search results has boosted the visibility of many web pages. There are, of course, myriad factors used to rank the results produced by the search engine.

Until 2009, the major factors that determined which search engine results were displayed were as follows:

- a) The content of the web page, which is further broken down into text, URL, heading, and headers.

- b) The legitimacy of the website, determined by the age of its domain name along with the number and quality of inbound links pointing to it.

In 2010, Google introduced a distinctive idea: website speed as a factor that affects search engine rankings.

What is site speed, and why was it chosen as a ranking factor?

Site speed is how quickly a website loads and how long it takes before the user can view its content. By this measure, websites, including mobile sites, can be fast or slow performers. Google's idea was to let poorly performing websites take a back seat, since they give little importance to the user experience.

Is content still king for Google?

Google has deliberately kept from its users how much page speed affects search ranking, but it leaves no doubt about content: relevant content always remains the champion. What is worth pondering is the relationship between speed metrics and search ranking, since innumerable other factors operate in the background. Even so, the correlations identified between the two shed light on how results are ranked.

Analysis of speed in Google search rankings

The first part of the study used 2,000 search queries selected at random from the 2013 ranking factors list. The sample combined single-word keywords with multi-word keywords of up to 5 words. The top 50 search results for each query were extracted along with their URLs and evaluated, for a total of 100,000 pages (2,000 queries × 50 results).
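A minimal Python sketch of how such a sample could be assembled is shown below. The keyword pool is a hypothetical stand-in for the 2013 ranking factors list, and `sample_queries` and `result_slots` are illustrative helper names, not the study's actual code. Each query contributes 50 ranked slots, which is how 2,000 queries add up to 100,000 pages.

```python
import random

SAMPLE_SIZE = 2000  # queries drawn at random, as in the study
TOP_N = 50          # ranked results kept per query


def sample_queries(pool: list[str], k: int = SAMPLE_SIZE, seed: int = 0) -> list[str]:
    """Draw k distinct queries at random; the seed makes the draw repeatable."""
    rng = random.Random(seed)
    return rng.sample(pool, k)


def result_slots(queries: list[str], top_n: int = TOP_N) -> list[tuple[str, int]]:
    """One (query, position) pair per ranked result; a scraped URL would be attached to each."""
    return [(query, position) for query in queries for position in range(1, top_n + 1)]


# Hypothetical pool standing in for the real keyword list.
pool = [f"keyword phrase {i}" for i in range(10_000)]
queries = sample_queries(pool)
slots = result_slots(queries)
print(len(queries), len(slots))  # 2000 100000
```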

The second part of the study loaded every page with WebPageTest, an open-source performance testing tool. Identical private instances of the tool were run on cloud servers, which can be placed almost anywhere in the world; for this study, a location in the west was chosen. The tool drives widely used browsers and collects more than 40 performance metrics about how a web page loads. Google Chrome was used for every test, with an empty cache, to keep the results consistent.

Study Results: The study captured more than 40 distinct page load metrics for each URL tested. As expected, the number of connections a browser opens to load a page did not influence search rank: the results showed no notable relationship between the number of open connections and ranking position. The most noteworthy metrics captured with the open-source tool were the following:

Page Load Time: The Time Taken For Page Load

Page load time for a website can be measured with two distinct metrics:

- a) Document complete time

- b) Fully rendered time

Document complete time is the time it takes for a page to load to the point where the user can click on its elements and enter data. For example, a user types a website address into the address bar and presses Enter. The website begins to load; not all of the content is there yet, but the user can already interact with the page.

Fully rendered time is the time it takes for every element of the web page to finish loading. Many elements, such as advertisements, images, videos, and trackers, keep loading in the background while the user scrolls, and the page is fully rendered only once all of them have completed.
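The difference between the two measures can be sketched in Python under simplifying assumptions: document complete is taken to be the browser's onload event (which is how WebPageTest reports it), fully rendered is taken to be the moment the last request finishes, and the request timeline is hypothetical. Real tools apply extra rules, such as waiting for a quiet period with no network activity.

```python
from dataclasses import dataclass


@dataclass
class Request:
    url: str
    end_ms: float  # when the response finished, relative to navigation start


def load_metrics(onload_ms: float, requests: list[Request]) -> dict:
    """Return both load times plus the resources that finished after document complete."""
    fully_rendered_ms = max([onload_ms] + [r.end_ms for r in requests])
    late = [r.url for r in requests if r.end_ms > onload_ms]
    return {
        "document_complete_ms": onload_ms,
        "fully_rendered_ms": fully_rendered_ms,
        "loaded_after_document_complete": late,
    }


# Hypothetical timeline: ads and trackers keep loading after the page is usable.
timeline = [
    Request("index.html", 300),
    Request("style.css", 650),
    Request("hero.jpg", 1100),
    Request("ad-widget.js", 2400),
    Request("tracker.gif", 3100),
]
metrics = load_metrics(onload_ms=1200, requests=timeline)
print(metrics["document_complete_ms"], metrics["fully_rendered_ms"])  # 1200 3100
print(metrics["loaded_after_document_complete"])  # ['ad-widget.js', 'tracker.gif']
```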

The study analyzed both load times against search rankings, weighing document complete time and fully rendered time to check their impact on ranking position. The surprising twist was that neither metric showed any relationship with search ranking. Because Google had left the role of page load time open to speculation, this finding pushed the study to look deeper.

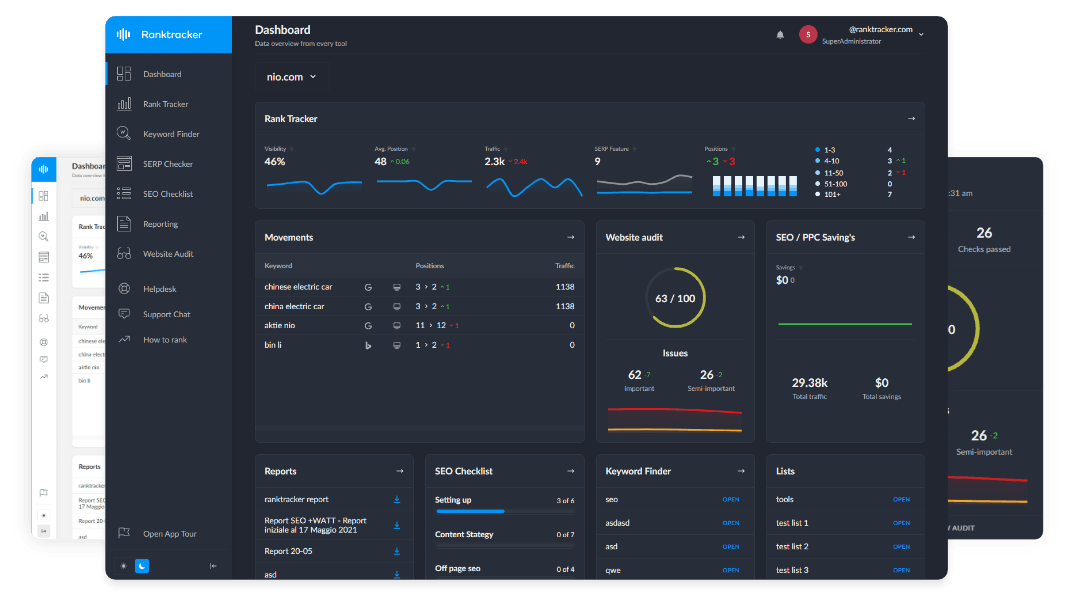


Representation of the Graph for Page Load Time: The representation of Page Load Time can be put in a graph with the following details:

- The horizontal axis represents the search rank position, up to position 50.

- The vertical axis represents the mean load time across the 2,000 randomly selected search terms included in the study.

How to plot the graph for Page load time?

To find the value plotted at position 1 on the horizontal axis, each of the 2,000 search terms used in the study is searched in Google one after the other. The first result of each search is selected and its page load time is measured. The mean of these load times is calculated and plotted at position 1. The same process is followed for the remaining positions, up to 50.
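The averaging step described above amounts to grouping measurements by ranking position and taking the mean of each group. The Python sketch below assumes each measurement is a hypothetical `(query, position, load_time_ms)` tuple; the numbers are made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def mean_by_position(rows):
    """rows: iterable of (query, position, load_time_ms). Returns {position: mean load time}."""
    buckets = defaultdict(list)
    for _query, position, load_ms in rows:
        buckets[position].append(load_ms)
    # One point per position on the horizontal axis, ordered 1, 2, ... 50.
    return {position: mean(times) for position, times in sorted(buckets.items())}


# Hypothetical measurements for two search terms and two positions.
rows = [
    ("seo tools", 1, 900),
    ("site speed", 1, 1500),
    ("seo tools", 2, 1200),
    ("site speed", 2, 1000),
]
curve = mean_by_position(rows)
print(curve)
```

Plotting the keys of `curve` on the horizontal axis against its values on the vertical axis gives the graph described above.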

Insights of the study: By the logic of search engines, the quicker a page renders, the sooner the user is satisfied with the result, which should earn the page a higher ranking. One would therefore expect pages ranked 1 to 5 to have lower document complete and fully rendered times than pages ranked below 5. Surprisingly, the data showed no such pattern: Google does not appear to use these load times to improve search rankings.

Time to First Byte (TTFB)

Since page load time had little in common with search ranking, the study turned to Time to First Byte (TTFB). TTFB is the time a browser takes to receive the first byte of the response from the web server after requesting a URL. Several components add up to this time:

- The network latency of sending the request from the browser to the web server.

- The time that is taken by the web server to process the request.

- The time that is taken by the web server to generate a response.

- The time that is taken to send the first byte of the response from the server to the browser.
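The four stages listed above add up to the TTFB the browser sees. The Python sketch below models that sum; the class name, field names, and millisecond values are hypothetical, and grouping the stages into "network" and "back-end" time is an illustrative assumption.

```python
from dataclasses import dataclass


@dataclass
class TTFBBreakdown:
    """The four stages of TTFB, in milliseconds."""
    request_latency_ms: float      # request travels from the browser to the web server
    server_processing_ms: float    # web server processes the request
    response_generation_ms: float  # web server generates the response
    first_byte_transfer_ms: float  # first byte travels back to the browser

    @property
    def total_ms(self) -> float:
        return (self.request_latency_ms + self.server_processing_ms
                + self.response_generation_ms + self.first_byte_transfer_ms)

    def dominant_part(self) -> str:
        """Report which side contributes more time (ties count as network)."""
        network = self.request_latency_ms + self.first_byte_transfer_ms
        back_end = self.server_processing_ms + self.response_generation_ms
        return "back-end" if back_end > network else "network"


# Hypothetical timings for a single request.
example = TTFBBreakdown(40, 120, 180, 40)
print(example.total_ms, example.dominant_part())  # 380 back-end
```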

Representation of the Graph for Time to First Byte:

The representation of Time to First Byte can be put in a graph with the following details:

- The Horizontal axis represents the position of the search rank up to 50 ranks

- The Vertical axis represents the time taken for the response from the server for each request.

How to plot the graph for Time to First Byte?

Each position is plotted against the time its pages took to return the first byte in response to a request. The same procedure is followed for all 50 ranks.

Insights of the study:

- There was a clear, close relationship between time to first byte and search rank position: the longer the web server took to return the first byte to the browser, the lower the page ranked.

- If TTFB is lower, the search result ranking is higher.

- If TTFB is higher, the search result ranking is lower. TTFB had the strongest influence on search ranking of any metric in the study.
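The text does not say which statistic the study used to measure this relationship. One common choice for rank data is Spearman's rank correlation, sketched below in plain Python as an assumption; the mean TTFB values are hypothetical. A positive coefficient means TTFB rises as the position number rises, that is, slower pages sit lower in the results.

```python
def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / (sx * sy)


def spearman(xs, ys):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))


positions = [1, 2, 3, 4, 5]
mean_ttfb_ms = [310, 330, 360, 355, 400]  # hypothetical values
rho = spearman(positions, mean_ttfb_ms)
print(round(rho, 2))  # 0.9
```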

Page Size in Bytes: After the promising result for TTFB, the study went on to measure page size in bytes. The size of every web page was calculated and compared with its search rank position. Page size counts all the elements downloaded for a fully rendered page, from images and third-party widgets to fonts.
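Page size can be computed from a HAR (HTTP Archive) file, a JSON format that tools such as WebPageTest and browser developer tools can export. The Python sketch below sums each response's `bodySize` field, which the HAR 1.2 format sets to -1 when the size is unknown and to 0 for responses served from cache. Response headers are ignored, so the result is an approximation, and the small HAR document is hypothetical.

```python
import json


def page_size_bytes(har: dict) -> int:
    """Sum the response body bytes of every request recorded in a HAR log."""
    total = 0
    for entry in har["log"]["entries"]:
        size = entry["response"].get("bodySize", -1)
        if size > 0:  # skip unknown (-1) and cached (0) responses
            total += size
    return total


# Hypothetical HAR with an HTML page, a stylesheet, an image, a font and a widget.
har = json.loads("""
{"log": {"entries": [
  {"request": {"url": "https://example.com/"},           "response": {"bodySize": 30000}},
  {"request": {"url": "https://example.com/site.css"},   "response": {"bodySize": 20000}},
  {"request": {"url": "https://example.com/hero.jpg"},   "response": {"bodySize": 150000}},
  {"request": {"url": "https://example.com/font.woff2"}, "response": {"bodySize": 40000}},
  {"request": {"url": "https://example.com/widget.js"},  "response": {"bodySize": 60000}},
  {"request": {"url": "https://example.com/beacon"},     "response": {"bodySize": -1}}
]}}
""")
print(page_size_bytes(har))  # 300000
```

For a real export, the dictionary would come from `json.load` on the saved `.har` file instead of the inline string.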

Representation of the Graph for Page Size: The representation of Page Size can be put in a graph with the following details:

- The Horizontal axis represents the position of the search rank up to 50 ranks

- The Vertical axis represents the Page size in bytes

How to plot the graph for Page Size?

The position of the page is plotted according to the page size of each web page in bytes. The same procedure is followed for all 50 ranks.

Insights of the study: The relationship between search rank position and page size in bytes suggested a "simple versus complex" theory. Many optimized, high-ranking sites belong to large organizations with more resources, whereas low-ranking sites tend to belong to smaller organizations with fewer resources. The complexity of an organization's site depends on the resources and content behind it.

Imagine comparing a giant retailer's website with a startup's: the retailer's pages are typically larger and more complex. In the study, smaller pages tended to rank lower.

Total Image Content in Bytes: Having examined page size, the study next measured image content in bytes. The total size of all images loaded on each web page was compared with its search rank position. A page with more image content can be expected to load more slowly than usual.
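Total image content can be measured from a HAR (HTTP Archive) log exported by a tool such as WebPageTest, by keeping only responses whose MIME type starts with `image/`. The Python sketch below assumes the HAR 1.2 layout, where `response.content.mimeType` holds the type and `response.bodySize` the received bytes (-1 when unknown, 0 when cached); the function name and sample entries are hypothetical.

```python
def image_and_total_bytes(har: dict) -> tuple[int, int]:
    """Return (image bytes, total bytes) for a HAR log, skipping unknown or cached sizes."""
    images = total = 0
    for entry in har["log"]["entries"]:
        response = entry["response"]
        size = response.get("bodySize", -1)
        if size <= 0:
            continue
        total += size
        mime = response.get("content", {}).get("mimeType", "").lower()
        if mime.startswith("image/"):  # image/jpeg, image/png, image/webp, ...
            images += size
    return images, total


# Hypothetical HAR entries for one page.
har = {"log": {"entries": [
    {"response": {"bodySize": 30000, "content": {"mimeType": "text/html; charset=utf-8"}}},
    {"response": {"bodySize": 150000, "content": {"mimeType": "image/jpeg"}}},
    {"response": {"bodySize": 50000, "content": {"mimeType": "image/png"}}},
    {"response": {"bodySize": 20000, "content": {"mimeType": "text/css"}}},
]}}
images, total = image_and_total_bytes(har)
print(images, total, f"{images / total:.0%}")  # 200000 250000 80%
```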


Representation of the Graph for Total Image Content: The representation of Total Image Content can be put in a graph with the following details:

- The Horizontal axis represents the position of the search rank up to 50 ranks

- The Vertical axis represents the Total Image Content in bytes

How to plot the graph for Total Image Content?

The position of the page is plotted according to the total image content of each web page in bytes. The same procedure is followed for all 50 ranks.

Insights of the study: A strong association was not expected, but image-heavy websites were expected to load more slowly and therefore rank somewhat lower. The image results showed no such effect, which reinforces the finding that page load time does not influence search ranking positions.

Page Load Time vs. Search Ranking: The study clearly found that page load time had no major effect on search ranking. This held for generic one- or two-word keywords as well as for keywords of up to 5 words. Websites with faster page loads did not rank higher than websites that took longer to load. Page load time is not the key factor website owners should worry about; in fact, it does not play a vital role even for keyword-focused websites.

TTFB vs. Search Ranking: The only factor in the study to have a major impact on search ranking was Time to First Byte (TTFB). The less time a server takes to respond, the higher the page ranks in search results.

Back-end Support in TTFB Ranking: Websites with excellent back-end infrastructure deliver quicker responses, so the servers behind a website play a significant role in improving its search rank position. Despite the in-depth analysis of front-end performance metrics, it was the back-end performance metrics that had a significant impact on a website's search ranking.

Why Is TTFB Considered a Vital Metric?

TTFB is easy for Google to capture with its website crawlers, because it only requires sending a request and timing the first byte of the response. Measuring document complete time or fully rendered time, by contrast, requires a browser with full capabilities that actually loads and renders the page.
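The point that TTFB needs no browser can be illustrated with a raw socket: send one HTTP request and time how long the first response byte takes to arrive. The Python sketch below is a simplified assumption of how such a measurement can work, not a description of how Google's crawlers do it. `measure_ttfb` is a hypothetical helper; it speaks plain HTTP only (no TLS), and its timing includes DNS lookup and TCP connection setup.

```python
import socket
import time


def measure_ttfb(host: str, port: int = 80, path: str = "/", timeout: float = 10.0) -> float:
    """Seconds from starting the connection until the first response byte arrives.

    Covers DNS lookup, TCP connect, sending the request, server-side work,
    and the trip of the first byte back. Plain HTTP only; no TLS handshake.
    """
    request = (
        f"GET {path} HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        "Connection: close\r\n\r\n"
    ).encode("ascii")
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(request)
        first = sock.recv(1)  # blocks until the first byte of the response arrives
    elapsed = time.perf_counter() - start
    if not first:
        raise ConnectionError("server closed the connection without responding")
    return elapsed


# Example (needs network access): print(f"{measure_ttfb('example.com') * 1000:.0f} ms")
```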


Similarly, evaluating a website's design, structure, and content depends on browser capabilities just as much as measuring these load times does, and doing so for every page would add considerable time and effort to crawling; capturing TTFB through crawlers avoids that cost. Speed and performance remain crucial factors for a website, and Google may well include page rendering time as a ranking factor in the future, since user experience is the key reason websites exist. Measuring TTFB is highly recommended, as it is a sensible metric for estimating the performance of the entire site.

Three important factors directly affect TTFB:

- The network latency between the visitor and the server.

- How heavily the web server is loaded with incoming requests.

- The turnaround time of the website’s back-end to generate content.

Content Distribution Networks (CDN):

Many websites use a CDN to lower their network latency. CDNs deliver content quickly to a website's users and visitors, irrespective of their geographic location, by serving it from servers close to them. Many highly ranked websites owe their efficiency to wise investments in high-capacity back-end servers, CDNs, database layers, and effective optimization of their applications.

How much is a Back-end Server Involved in Search Rankings?

Strong back-end infrastructure helps websites rank higher than other sites. For quickly growing sites, improving the back-end infrastructure is a wise way to maintain their rankings.

The study concluded that sites with established back-end infrastructure topped the list and ranked higher than websites that paid less attention to their back-end. For example, searches for long-tail keywords (4 or 5 words long) returned results from websites owned by smaller organizations with small audiences but consistent content on the specific query; few high-traffic websites appeared. Building a complex site with a very high TTFB is equivalent to not building one at all. TTFB is a consistent parameter that reflects how quickly a site responds rather than how complex it is, which helps rank websites clearly. A smaller but quicker website with a lower TTFB saw its rankings rise, whereas a bigger but slower website with a higher TTFB ranked much lower, irrespective of its content and site presence.

Takeaways:

Norms to Be Followed By All Websites:

TTFB is the key parameter for a high and consistent ranking. Building solid back-end infrastructure is essential for fast, responsive servers, which directly affect search ranking position.

Equally important is the front-end of the website, which ultimately captures the user's attention and provides an enjoyable experience. The front-end and back-end together make a complete website. Identifying and resolving both front-end and back-end problems, with expert help where needed, is important for improving search engine ranking positions.

Faster websites showcase good content and keep visitors happy. Happy visitors promote websites, setting off a chain reaction of sharing and linking across the globe!