Intro

For years, AI lived in the cloud.

Models were enormous. Inference was centralized. User data had to be sent to servers. Every interaction flowed through big tech infrastructure.

But in 2026, a major inversion is happening:

AI is moving onto the device.

Phones, laptops, headsets, cars, watches, home hubs — all running local LLMs that:

✔ understand the user

✔ work offline

✔ protect privacy

✔ run instantly

✔ integrate with sensors

✔ influence search and recommendations

✔ filter information before it reaches the user

This changes everything about:

✔ SEO

✔ AI search

✔ advertising

✔ personalization

✔ discovery

✔ brand visibility

✔ user journeys

On-device LLMs will become the new first filter between users and the internet.

This article explains what they are, how they work, and how marketers must adapt to a world where search begins locally, not globally.

1. What Are On-Device LLMs? (Simple Definition)

An on-device LLM is a language model that runs directly on:

✔ your phone

✔ your laptop

✔ your smartwatch

✔ your car dashboard

✔ your AR/VR headset

—without requiring cloud servers.

This is now possible because:

✔ models are getting smaller

✔ hardware accelerators are improving

✔ techniques like quantization + distillation shrink models

✔ multimodal encoders are becoming efficient
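
To make the effect of quantization concrete, here is a rough back-of-the-envelope sketch. The 3-billion-parameter model is an illustrative assumption, not a figure for any specific product, and the estimate covers weight storage only (it ignores activations and other runtime memory).

```python
def model_size_gb(num_params: int, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 3_000_000_000  # hypothetical 3B-parameter model

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {model_size_gb(PARAMS, bits):.1f} GB")
```

Under these assumptions, going from 16-bit to 4-bit weights cuts storage from about 6 GB to about 1.5 GB, which is the difference between a model that does not fit on a phone and one that might.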

On-device LLMs enable:

✔ instant reasoning

✔ personalized memory

✔ privacy protection

✔ offline intelligence

✔ deep integration with device data

They turn every device into a self-contained AI system.

2. How On-Device LLMs Change the Architecture of Search

Traditional search:

User → Query → Cloud LLM/Search Engine → Answer

On-device LLM search:

User → Local LLM → Filter → Personalization → Cloud Retrieval → Synthesis → Answer

The key difference:

The device becomes the gatekeeper before the cloud ever sees the query.

This radically alters discovery.
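
The on-device flow above can be sketched as a small pipeline. This is a toy illustration only: every function name, the `UserContext` fields, and the privacy rule are hypothetical stand-ins, and a real device would use a local model rather than string matching.

```python
from dataclasses import dataclass, field


@dataclass
class UserContext:
    """Hypothetical on-device context; the fields are illustrative only."""
    location: str = ""
    tools: list[str] = field(default_factory=list)


def is_private(query: str) -> bool:
    # Toy filter: treat queries that mention personal data as private,
    # so they are answered locally and never sent out.
    markers = ("my budget", "my messages", "my photos")
    return any(marker in query.lower() for marker in markers)


def personalize(query: str, ctx: UserContext) -> str:
    # Append context the device already knows before anything leaves it.
    extras = [f"using {tool}" for tool in ctx.tools]
    if ctx.location:
        extras.append(f"in {ctx.location}")
    return " ".join([query, *extras])


def cloud_retrieve(query: str) -> list[str]:
    # Stand-in for a real search engine or cloud LLM call.
    return [f"result for: {query}"]


def answer(query: str, ctx: UserContext) -> str:
    if is_private(query):
        return f"[answered locally] {query}"
    outgoing = personalize(query, ctx)
    results = cloud_retrieve(outgoing)
    return f"[synthesized on device] {results[0]}"
```

Note that the cloud only ever sees the `outgoing` string, not what the user typed. That is the gatekeeper role described above.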

3. Why Big Tech Is Shifting to On-Device AI

Four forces are driving this shift:

1. Privacy and regulation

Countries are tightening data laws. On-device AI:

✔ keeps data local

✔ avoids cloud transmission

✔ reduces compliance risk

✔ removes data retention issues

2. Cost reduction

Cloud inference is expensive: billions of daily queries translate into huge GPU bills.

On-device AI offloads computation to the user’s hardware.

3. Speed and latency

On-device LLMs provide:

✔ instant results

✔ no server lag

✔ no network dependency

This is essential for:

✔ AR

✔ automotive

✔ mobile

✔ wearables

✔ smart home devices

4. Personalization potential

On-device LLMs can access:

✔ messages

✔ photos

✔ browsing history

✔ behavior patterns

✔ calendars

✔ location

✔ sensor data

Cloud models often cannot access this data, whether for legal or practical reasons.

Local data = deeper personalization.

4. The Big Platforms Going All-In On On-Device LLMs

By 2026, all major players have adopted on-device intelligence:

Apple Intelligence (iOS, macOS)

On-device SLMs process:

✔ language

✔ images

✔ app context

✔ intentions

✔ notifications

✔ personal data

Apple sends a request to the cloud only when it exceeds what the on-device models can handle.

Google (Android + Gemini Nano)

Gemini Nano runs fully on-device and handles:

✔ message summarization

✔ photo reasoning

✔ voice assistance

✔ offline tasks

✔ contextual understanding

Search itself is starting on-device before hitting Google’s servers.

Samsung, Qualcomm, MediaTek

Phones now include dedicated:

✔ NPUs (Neural Processing Units)

✔ GPU accelerators

✔ AI co-processors

designed specifically for local model inference.

Microsoft (Windows Copilot + Surface hardware)

Windows now runs:

✔ local summarization

✔ local transcription

✔ local reasoning

✔ multi-modal interpretation

without needing cloud models.

5. The Key Shift: On-Device LLMs Become “Local Curators” of Search Queries

This is the critical insight:

Before a query reaches Google, ChatGPT Search, Perplexity, or Gemini, your device will interpret, reshape, and sometimes rewrite it.

Meaning:

✔ your content must match user intent as interpreted by local LLMs

✔ discovery begins on the device, not the web

✔ on-device LLMs act as personal filters

✔ brand visibility is now controlled by local AI systems

Your marketing strategy must now consider:

How does the user’s personal AI perceive your brand?

6. How On-Device LLMs Will Change Discovery

Here are the 11 major impacts.

1. Search Becomes Hyper-Personalized at the Device Level

The device knows:

✔ what the user typed

✔ where they are

✔ their past behavior

✔ their preferences

✔ what content they tend to click

✔ their goals and constraints

The device filters search queries before they’re sent out.

Two users typing the same thing may send different queries to Google or ChatGPT Search.

2. SEO Becomes Personalized Per User

Traditional SEO optimized for a global result set.

On-device AI creates:

✔ personalized SERPs

✔ personalized ranking signals

✔ personalized recommendations

Your visibility depends on how well local LLMs:

✔ understand

✔ trust

✔ and prefer your brand

3. On-Device Models Create Local Knowledge Graphs

Devices will build micro knowledge graphs:

✔ your frequent contacts

✔ your searched brands

✔ past purchases

✔ saved info

✔ stored documents

These influence which brands the device promotes.
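
As a rough illustration of what a "micro knowledge graph" could look like, here is a minimal sketch that stores facts as (subject, relation, object) triples. The class name, relation names, and brands are all hypothetical; actual on-device implementations are not public and are likely far richer.

```python
from collections import defaultdict


class MicroGraph:
    """Toy store of (subject, relation, object) facts kept on the device."""

    def __init__(self) -> None:
        self.edges: dict[str, set[tuple[str, str]]] = defaultdict(set)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.edges[subject].add((relation, obj))

    def related(self, subject: str, relation: str) -> list[str]:
        # Sorted for stable output; .get avoids creating empty entries.
        return sorted(o for r, o in self.edges.get(subject, set()) if r == relation)


graph = MicroGraph()
graph.add("user", "searched", "Brand A")
graph.add("user", "purchased", "Brand B")
graph.add("user", "searched", "Brand C")

print(graph.related("user", "searched"))  # ['Brand A', 'Brand C']
```

A recommender sitting on top of a structure like this could favor brands the user has already searched for or bought from.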

4. Private Data → Private Search

Users will ask:

“Based on my budget, which laptop should I buy?”

“Why is my baby crying? Here’s a recording.”

“Does this look like a scam message?”

These queries never touch the cloud.

Brands can’t see it. Analytics won’t track it.

Private queries become invisible to traditional SEO.

5. Local Retrieval Augments Web Search

Devices store:

✔ past snippets

✔ previously viewed articles

✔ screenshots

✔ past product research

✔ saved information

This becomes part of the retrieval corpus.

Your older content may resurface if it’s stored locally.
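
One way to picture local retrieval augmenting web search is a single ranking over both sources. The sketch below is an assumption-laden toy: it scores documents by simple word overlap (Jaccard similarity), whereas a real device would more plausibly use embeddings, and all function names are hypothetical.

```python
def tokenize(text: str) -> set[str]:
    return set(text.lower().split())


def score(query: str, doc: str) -> float:
    """Jaccard overlap between query and document words (a crude relevance proxy)."""
    q, d = tokenize(query), tokenize(doc)
    union = q | d
    return len(q & d) / len(union) if union else 0.0


def retrieve(
    query: str, local_cache: list[str], web_results: list[str], k: int = 3
) -> list[tuple[str, str]]:
    # Tag each candidate with its source, then rank both pools together.
    candidates = [("local", doc) for doc in local_cache]
    candidates += [("web", doc) for doc in web_results]
    ranked = sorted(candidates, key=lambda c: score(query, c[1]), reverse=True)
    return ranked[:k]


cache = ["laptop buying guide for students", "recipe for pasta"]
web = ["best laptop deals this week"]
print(retrieve("laptop buying guide", cache, web, k=1))
```

In this example the locally cached guide outranks the fresh web result, which is how an older article the user once read can resurface.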

6. On-Device LLMs Will Rewrite Queries

Your original keywords won’t matter as much.

Devices rewrite:

✔ “best CRM” → “best CRM for freelancers using Google Workspace”

✔ “SEO tool” → “SEO tool that integrates with my existing setup”

SEO moves from keywords to goal-level optimization.
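
The rewrites above can be imitated with a few rules. This is a minimal sketch, assuming a hypothetical `profile` dictionary with `role` and `stack` keys; an actual device would use a local LLM and much richer context rather than string templates.

```python
def rewrite_query(query: str, profile: dict[str, str]) -> str:
    """Expand a short query with known user context (toy stand-in for an LLM)."""
    parts = [query]
    if profile.get("role"):
        parts.append(f"for {profile['role']}")
    if profile.get("stack"):
        parts.append(f"using {profile['stack']}")
    return " ".join(parts)


# Two hypothetical users typing the same two words.
freelancer = {"role": "freelancers", "stack": "Google Workspace"}
agency = {"role": "agencies", "stack": "Microsoft 365"}

print(rewrite_query("best CRM", freelancer))
# best CRM for freelancers using Google Workspace
print(rewrite_query("best CRM", agency))
# best CRM for agencies using Microsoft 365
```

The same typed keyword produces two different outgoing queries, so a page that only targets "best CRM" competes for neither of them directly.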

7. Paid Ads Become Less Dominant

On-device LLMs will suppress or block:

✔ spam

✔ irrelevant offers

✔ low-quality ads

And promote:

✔ contextual relevance

✔ quality signals

✔ user-aligned solutions

This disrupts the ad economy.

8. Voice Search Becomes the Default Interaction

On-device LLMs will turn:

✔ spoken queries

✔ ambient listening

✔ camera input

✔ real-time prompts

into search events.

Your content must support conversational and multimodal interactions.

9. Local-First Recommendations Dominate

Device → Agent → Cloud → Brand

not

Google → Website

The first recommendation happens before search begins.

10. Offline Discovery Emerges

Users will ask:

“How do I fix this?”

“Explain this error message.”

“What does this pill bottle say?”

No internet needed.

Your content must be designed to be locally cached and summarized.

11. Multi-Modal Interpretation Becomes Standard

Devices will understand:

✔ screenshots

✔ camera photos

✔ videos

✔ receipts

✔ documents

✔ UI flows

SEO content must become multimodally interpretable.

7. What This Means for SEO, AIO, GEO, and LLMO

On-device LLMs change optimization forever.

1. SEO → Local-AI-Aware SEO

You must optimize for:

✔ personalization

✔ rewritten queries

✔ user goals

✔ context-aware reasoning

2. AIO → Local Machine Interpretability

Content must be easy for local LLMs to parse:

✔ clear definitions

✔ structured logic

✔ simple data extraction

✔ explicit entities

✔ answer-first blocks

3. GEO → Generative Engine Optimization expands to on-device models

LLMs will:

✔ use your content locally

✔ cache parts of it

✔ summarize it

✔ compare it with competitors

Your content must be machine-preferred.

4. LLMO → Multi-LLM Optimization (Cloud + Device)

Your content must be:

✔ easily summarizable

✔ interpretably structured

✔ entity-consistent across queries

✔ aligned with persona variants

Local LLMs reward clarity over complexity.

8. How Marketers Should Prepare for On-Device AI

Practical steps:

1. Build content for “local summarization”

This means using:

✔ answer-first paragraphs

✔ Q&A blocks

✔ crisp definitions

✔ bulleted lists

✔ step frameworks

✔ structured reasoning

Local LLMs will skip verbose content.

2. Strengthen brand entity profiles

On-device models rely heavily on entity clarity:

✔ consistent brand naming

✔ schema

✔ Wikidata

✔ product pages

✔ internal linking

Agents prefer brands they understand.
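
For the schema and Wikidata items above, one common approach is to publish schema.org `Organization` markup as JSON-LD, with `sameAs` pointing at the brand's other profiles. The sketch below generates such a block; the brand name, the URL, and the Wikidata ID are placeholders, not real entries.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # links that identify the same entity elsewhere
    }
    return json.dumps(data, indent=2)


print(organization_jsonld(
    "Example Brand",  # placeholder brand name
    "https://www.example.com",  # placeholder URL
    ["https://www.wikidata.org/wiki/Q00000000"],  # placeholder Wikidata ID
))
```

The output would go inside a `<script type="application/ld+json">` tag on the site. Using the exact same `name` everywhere is what keeps the entity consistent.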

3. Create “goal-centered” content

Because devices rewrite queries, you must optimize for goals:

✔ beginner guides

✔ “how to choose…”

✔ “what to do if…”

✔ troubleshooting

✔ scenario-based pages

4. Focus on trust and credibility signals

Devices will filter low-trust brands.

Required:

✔ E-E-A-T

✔ clear expertise

✔ citations

✔ original data

✔ case studies

5. Support multi-modal interpretation

Include:

✔ annotated images

✔ diagrams

✔ screenshots

✔ product photos

✔ user flows

✔ UI examples

On-device LLMs rely heavily on visual reasoning.

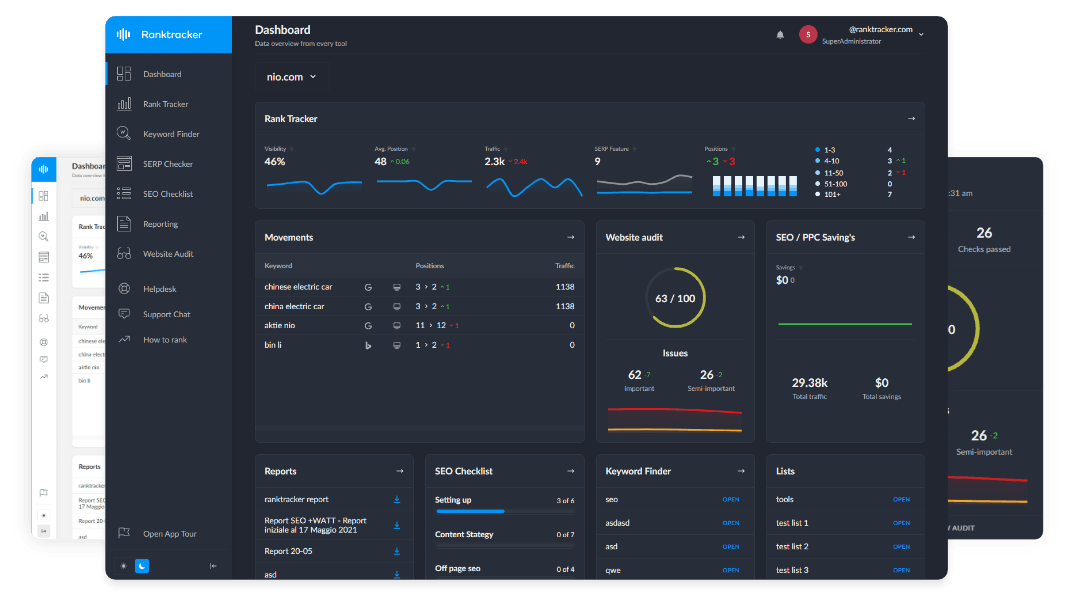

9. How Ranktracker Supports On-Device AI Discovery

Ranktracker tools align perfectly with on-device LLM trends:

Keyword Finder

Uncovers goal-based, conversational, and multi-intent queries, the kinds local LLMs will rewrite most often.

SERP Checker

Shows entity competition and structured results that local LLMs will use as sources.

Web Audit

Ensures machine readability for:

✔ schema

✔ internal linking

✔ structured sections

✔ accessibility

✔ metadata

Critical for local LLM parsing.

AI Article Writer

Produces LLM-friendly content structure ideal for:

✔ local summarization

✔ cloud retrieval

✔ agentic reasoning

✔ multi-modal alignment

Backlink Monitor + Checker

Authority remains critical — local models still prefer trusted brands with strong external validation.

Final Thought:

On-Device LLMs Will Become the New Gatekeepers of Discovery — And They Will Control What Users See Before the Cloud Does.

Search no longer begins at Google. It begins on the device:

✔ personalized

✔ private

✔ contextual

✔ multimodal

✔ filtered

✔ agent-driven

And only then flows outward.

This means:

✔ SEO must adapt to local rewriting

✔ brands must strengthen machine identity

✔ content must be built for summarization

✔ trust signals must be explicit

✔ entity clarity must be perfect

✔ multi-modal interpretation is mandatory

The future of discovery is:

local first → cloud second → user last.

Marketers who understand on-device LLMs will dominate the next era of AI search — because they will optimize for the first layer of intelligence that interprets every query.