Intro

When web scraping at any substantial scale, proxies are effectively a requirement. Many of the most popular websites block certain IP addresses, so scraping without Backconnect, rotating, or residential proxies can be problematic.

Using residential proxies, Backconnect proxies, rotating proxies, or other IP rotation strategies helps developers scrape popular sites without getting their scrapers restricted or shut down. IP addresses that belong to data centers are frequently blocked from visiting major consumer internet sites, which is a problem when operating scrapers.

Smartproxy is the leading choice for businesses, researchers, and developers seeking top-tier web scraping proxies.

Fast Speed: Gain a competitive edge with Smartproxy's high-speed proxies. Enjoy uninterrupted access to data-rich websites, ensuring your projects run seamlessly.

Global Reach: Smartproxy boasts an extensive pool of residential and data center proxies in 195+ locations worldwide. Access localized content effortlessly and expand your global reach.

Easy Integration: Their user-friendly dashboard and comprehensive documentation make proxy setup a breeze. Integrate Smartproxy into your workflow quickly, whether you're a seasoned pro or a novice.

Reliable Support: Rest easy knowing their dedicated support team is available around the clock to assist you with any questions or issues that may arise.

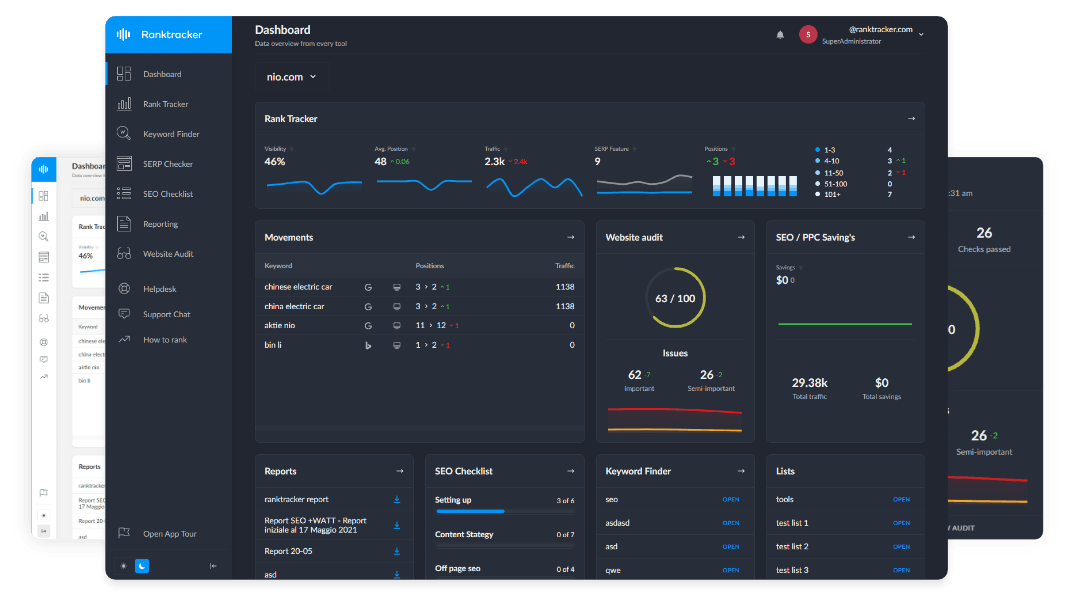

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

What are Proxies?

(Image source: Unsplash)

Using a proxy server, you route your requests through a third party's servers and take on their IP address in the process. This lets you scrape the web anonymously, because your real IP address is masked behind the proxy server's address. With static ISP proxies, the address you obtain stays consistent from request to request, so your real IP sits behind a stable proxy IP for enhanced security and privacy.

A scraping proxy service is used to manage proxies for scraping projects. A simple proxy service for scraping, like infatica.io or anyip.io, could consist of a group of proxies used in parallel to simulate multiple people accessing the site at the same time. Proxy services are essential to large scraping efforts, both for getting past antibot defenses and for speeding up parallel request processing. A proxy pool also lets scrapers boost speed by running many connections in parallel, although setting this up may call for dedicated software developers.
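As a rough illustration of using a group of proxies in parallel, the sketch below pairs each URL with a proxy in turn and runs the requests on a thread pool. The names `fetch_all` and `fetch`, and the `max_workers` default, are assumptions made for this example and do not come from any particular proxy service.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import cycle


def fetch_all(urls, proxies, fetch, max_workers=4):
    """Fetch urls in parallel, pairing each URL with a proxy in turn.

    `fetch` is a hypothetical callable taking (url, proxy) and returning
    whatever the caller wants to collect, for example a response body.
    """
    # cycle() repeats the proxy list so every URL gets a proxy assigned.
    jobs = list(zip(urls, cycle(proxies)))
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        # executor.map returns results in the same order as the input jobs.
        return list(executor.map(lambda job: fetch(*job), jobs))
```

With the requests library, `fetch` could be a small function that calls `requests.get(url, proxies={'http': proxy, 'https': proxy})` and returns the response text.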

How to use a Proxy Rotator

A proxy rotator is either something you have built from scratch or a component of a service you have purchased. Its usage differs between solutions, so consult your chosen solution's manual for detailed instructions.

Typically, a client receives one entry node backed by the required number of static proxies. The rotator selects a random IP address from that pool for each request sent to the destination. This way, datacenter proxies imitate the behavior of organic traffic and are not blocked as quickly.
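If you build the rotator yourself, the random selection described above might look something like the following minimal sketch. The class name `RandomProxyRotator` and the proxy addresses are hypothetical, and a purchased service will usually handle this step for you behind its entry node.

```python
import random


class RandomProxyRotator:
    """Hand out a randomly chosen proxy for each outgoing request."""

    def __init__(self, proxies, rng=None):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._proxies = list(proxies)
        # An injectable random source keeps the behavior reproducible in tests.
        self._rng = rng or random.Random()

    def next_proxies(self):
        """Return a mapping in the shape the requests library accepts."""
        proxy = self._rng.choice(self._proxies)
        return {"http": proxy, "https": proxy}


# Hypothetical pool; the addresses come from a documentation-only IP range.
rotator = RandomProxyRotator([
    "http://user:pass@203.0.113.10:8000",
    "http://user:pass@203.0.113.11:8000",
    "http://user:pass@203.0.113.12:8000",
])
```

Each call to `rotator.next_proxies()` can then be passed as the `proxies` argument of a request.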

How to Use a Proxy with Web Scraping Software

Using a proxy list with your current web scraping software is a relatively simple process. There are only two components to proxy integration:

1. Pass the Requests of Your Web Scraper Through a Proxy

This first stage is typically straightforward; however, it depends on which library your web scraping program uses. A basic example would be:

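import requests

# Replace user, pass, IP, and PortNumber with your provider's values.
# The http:// scheme for the proxy itself is an assumption; use whatever your provider specifies.
proxy = 'http://user:pass@IP:PortNumber'

# Both keys are set so that HTTP and HTTPS requests alike go through the proxy.
proxies = {'http': proxy, 'https': proxy}

requests.get('https://example.com', proxies=proxies)

To build the proxy connection URL, replace the placeholders in the example (user, pass, IP, and PortNumber) with your own details. Your proxy service provider should supply the values you need to connect to your rented servers.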

After you have constructed the URL, consult the documentation for your network request library. There you should find a way to pass proxy information along with each request.

If you are unsure whether the integration worked, it is a good idea to send a few test requests to a website that reports the IP address it sees the request coming from, and then examine the response. You should see the proxy server's information rather than your own computer's, because the proxy server acts as a middleman between your computer and the website. Moreover, reviewing a full list of datacenter proxy providers can help you choose the right one and improve your proxy setup and performance.
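One way to run such a check is sketched below, assuming the public echo endpoint `https://httpbin.org/ip`, which returns the caller's address in a JSON field named `origin`. The helper names are hypothetical, and the sketch uses only the Python standard library so it has no extra dependencies. The same check works with requests.

```python
import json
import urllib.request


def parse_origin(body):
    """Extract the reported IP address from an httpbin-style JSON body."""
    return json.loads(body)["origin"]


def observed_ip(proxy_url=None, echo_url="https://httpbin.org/ip", timeout=10):
    """Return the IP address the echo service sees for this request."""
    handlers = []
    if proxy_url:
        # Route both HTTP and HTTPS traffic through the given proxy.
        handlers.append(
            urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
        )
    opener = urllib.request.build_opener(*handlers)
    with opener.open(echo_url, timeout=timeout) as response:
        return parse_origin(response.read())


def proxy_is_active(direct_ip, proxied_ip):
    """The proxy is working if the site no longer sees your own address."""
    return direct_ip != proxied_ip
```

Calling `observed_ip()` once without a proxy and once with your proxy URL, then comparing the two results with `proxy_is_active`, shows whether the website sees the proxy's address instead of yours.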

2. Changing the IP Address of the Proxy Server Between Requests

The second stage involves several variables, such as how many parallel processes you are running and how close your request rate is to the target site's rate limit.

You can store a basic proxy list in memory, take the proxy at the end of the list for each request, and insert it at the front of the list once it has been used. This works if you are using a single worker, process, or thread to make sequential requests one after the other.

Besides keeping the code simple, this approach ensures even rotation across all of your available IP addresses. This is preferable to "randomly" selecting a proxy from the list for each request, because random selection can pick the same proxy several times in a row. As an alternative for e-commerce scraping, you can use dedicated APIs or data extraction tools that provide structured product and pricing data without the need for rotating proxies.
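The in-memory list described above could be sketched with a `collections.deque`, which supports cheap removal from one end and insertion at the other. The proxy values and the function name `next_proxy` are placeholders chosen for this example.

```python
from collections import deque

# Hypothetical proxy list; replace with the addresses from your provider.
proxy_queue = deque([
    "http://user:pass@203.0.113.10:8000",
    "http://user:pass@203.0.113.11:8000",
    "http://user:pass@203.0.113.12:8000",
])


def next_proxy(queue=proxy_queue):
    """Take the proxy at the end of the list and put it back at the front."""
    proxy = queue.pop()        # remove from the end of the list
    queue.appendleft(proxy)    # reinsert at the front once it has been handed out
    return proxy
```

Every proxy is used exactly once before any proxy is used a second time. This is safe only for a single sequential worker, since nothing here guards against concurrent access.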

If you are running a web scraper in a multi-worker environment, you will need to track the IP addresses used by all of the workers to ensure that no single IP is used by multiple workers within a short period. Otherwise, that IP could be "burned" by the target site and no longer be able to pass requests through.
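For workers running as threads in one process, this tracking could be sketched as a shared pool guarded by a lock. The class name `SharedProxyPool` and the five-second `min_interval` default are assumptions for illustration. Workers in separate processes or on separate machines would need a shared store instead, which this sketch does not cover.

```python
import threading
import time


class SharedProxyPool:
    """Lease proxies to workers so none is reused within min_interval seconds."""

    def __init__(self, proxies, min_interval=5.0, clock=time.monotonic):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._lock = threading.Lock()
        self._min_interval = min_interval
        self._clock = clock
        # Time each proxy was last handed out; -inf means it was never used.
        self._last_used = {proxy: float("-inf") for proxy in proxies}

    def acquire(self):
        """Return the least recently used proxy, or None if all are too fresh."""
        with self._lock:
            now = self._clock()
            proxy = min(self._last_used, key=self._last_used.get)
            if now - self._last_used[proxy] < self._min_interval:
                # Even the most rested proxy was used too recently.
                return None
            self._last_used[proxy] = now
            return proxy
```

A worker that receives `None` can wait briefly and try again, which keeps any single IP from being hit by several workers in quick succession.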

When a proxy IP gets burned, the destination site will likely return an error response indicating that your requests are being rate-limited. If this occurs, you can set the proxy to "time out." After a few hours, you can start using the proxy again if the target site is no longer rate-limiting requests from that IP address.
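A minimal sketch of such a time-out follows. The three-hour default and the status codes treated as rate limiting are assumptions: HTTP 429 is the standard "Too Many Requests" code, but a given site may signal a burned IP differently. The names `CooldownTracker` and `looks_rate_limited` are hypothetical.

```python
import time


def looks_rate_limited(status_code):
    """Assumption: the target signals rate limiting with HTTP 429 or 403."""
    return status_code in (429, 403)


class CooldownTracker:
    """Keep burned proxies out of rotation until their time-out expires."""

    def __init__(self, cooldown_seconds=3 * 60 * 60, clock=time.monotonic):
        self._cooldown = cooldown_seconds
        self._clock = clock
        self._resting_until = {}

    def mark_burned(self, proxy):
        """Start the time-out for a proxy that received a rate-limit response."""
        self._resting_until[proxy] = self._clock() + self._cooldown

    def is_available(self, proxy):
        """Return True if the proxy was never burned or its time-out has ended."""
        until = self._resting_until.get(proxy)
        if until is None:
            return True
        if self._clock() >= until:
            del self._resting_until[proxy]
            return True
        return False
```

After the time-out ends, it is still worth sending a single test request through the proxy before returning it to full rotation, since the target site may keep restricting the IP for longer.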

The Importance of IP Rotation

Antibot systems typically identify automation when they observe many requests coming from the same IP address in a very short amount of time. This is one of the most common detection methods. If you use a web scraping IP rotation service, your requests will rotate across several different addresses, making it more difficult to trace them back to a single source.

Conclusion

An increasing number of businesses are using proxies to gain a competitive edge.

Web scraping is useful for your company because it enables you to track the latest trends in your industry. You can then use that information to optimize your pricing, advertising, audience targeting, and many other aspects of your business.

Proxy servers can assist you if you want your data scraper to collect information from many places or if you do not want to risk being detected as a bot and having your scraping privileges revoked.